20 Ways for Web Scraping Without Getting Blocked

Expert Network Defense Engineer

Web scraping is a powerful tool for data extraction, but it often faces a significant hurdle: getting blocked. This guide presents 20 strategies to help you work around anti-bot measures and collect data without interruption.

Whether you're a data analyst, a market researcher, or a developer, understanding these techniques is crucial for efficient and reliable web scraping. We will delve into practical methods, from sophisticated proxy management to advanced browser emulation, ensuring your scraping operations remain undetected and productive. By implementing these strategies, you can significantly improve your success rate and maintain continuous access to the data you need.

Key Takeaways

- Proxy Rotation is Essential: Regularly changing IP addresses prevents detection and blocking.

- Mimic Human Behavior: Emulating realistic user interactions makes your scraper less suspicious.

- Advanced Anti-Bot Bypasses: Techniques like CAPTCHA solving and fingerprinting evasion are crucial for complex sites.

- Utilize Specialized Tools: Web scraping APIs and headless browsers offer robust solutions for challenging targets.

- Continuous Adaptation: Anti-bot measures evolve, requiring scrapers to adapt and update strategies constantly.

1. Master Proxy Management

Effective proxy management is the cornerstone of successful web scraping, ensuring your requests appear to originate from diverse locations and IP addresses. Websites often block IP addresses that make too many requests in a short period, making proxy rotation indispensable. By distributing your requests across a pool of IP addresses, you significantly reduce the likelihood of detection and blocking. This strategy mimics organic user traffic, making it difficult for anti-bot systems to identify automated activity. The web scraping software market is projected to grow significantly, reaching USD 3.52 billion by 2037, underscoring the increasing demand for effective scraping solutions that often rely on robust proxy infrastructure [1].

1.1. Utilize Premium Proxies

Premium proxies offer superior reliability and speed compared to free alternatives, which are often quickly blacklisted. Residential proxies, in particular, are highly effective as they are IP addresses assigned by Internet Service Providers (ISPs) to real homes, making them appear as legitimate user traffic.

Datacenter proxies, while faster, are easier to detect because their IP ranges belong to commercial hosting providers. For instance, when scraping e-commerce sites for price monitoring, residential proxies help your requests blend in with regular customer browsing, reducing the IP bans that could disrupt data collection. A common use of web scraping proxies is masking the client's IP address, which helps avoid detection [2].

1.2. Implement IP Rotation

Rotating your IP addresses with every request, or after a certain number of requests, is crucial. This prevents websites from identifying a single IP address making an unusually high volume of requests. Automated proxy rotators handle this seamlessly, cycling through a large pool of IPs.

This technique is particularly effective when dealing with websites that employ rate limiting based on IP addresses. For example, a market research firm scraping competitor pricing data would use IP rotation to avoid triggering alarms, allowing them to gather comprehensive datasets without interruption.
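
The rotation described above can be sketched as a small generator. This is a minimal illustration, not a production rotator: the proxy URLs and the `requests_per_proxy` parameter are assumptions made for the example, and a real pool would come from your proxy provider.

```python
import itertools

# Hypothetical proxy endpoints; replace with the ones your provider issues.
PROXIES = [
    "http://proxy-a.example:8080",
    "http://proxy-b.example:8080",
    "http://proxy-c.example:8080",
]

def proxy_rotator(proxies, requests_per_proxy=1):
    """Yield proxies round-robin, keeping each one for a fixed number of requests."""
    for proxy in itertools.cycle(proxies):
        for _ in range(requests_per_proxy):
            yield proxy

# Switch to the next proxy after every two requests.
rotator = proxy_rotator(PROXIES, requests_per_proxy=2)
first_six = [next(rotator) for _ in range(6)]
```

Call `next(rotator)` before each request and pass the result to your HTTP client's proxy setting.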

1.3. Geo-Targeted Proxies

Using geo-targeted proxies allows you to send requests from specific geographical locations. This is vital when scraping region-specific content or bypassing geo-restrictions. If a website serves different content based on the user's location, a geo-targeted proxy ensures you access the correct version. For example, scraping localized product reviews from different countries requires proxies from those respective regions to ensure accurate data collection.

Comparison Summary: Proxy Types

| Feature | Residential Proxies | Datacenter Proxies | Mobile Proxies |
|---|---|---|---|
| Source | Real ISP users | Commercial data centers | Mobile network operators |
| Detection Risk | Low (appear as real users) | High (easier to detect) | Very Low (highly trusted IPs) |
| Speed | Moderate | High | Moderate |
| Cost | High | Low | Very High |
| Use Case | High-stealth scraping, geo-targeting | High-volume, less sensitive scraping | Highly sensitive targets, mobile-specific content |
| Reliability | High | Moderate | High |

2. Mimic Human Behavior

Websites employ sophisticated anti-bot systems that analyze request patterns to distinguish between human users and automated bots. To avoid detection, your scraper must emulate human-like browsing behavior. This involves more than just rotating IPs; it requires simulating realistic interactions, delays, and browser characteristics. Behavioral analysis is a key technique used in bot detection, alongside CAPTCHAs and browser fingerprinting [3].

2.1. Randomize Request Delays

Sending requests at a consistent, rapid pace is a clear indicator of a bot. Implement random delays between requests to mimic human browsing patterns. Instead of a fixed delay, use a range (e.g., 5-15 seconds) to introduce variability. For example, when scraping product pages, a human user would naturally spend time viewing images, reading descriptions, and navigating between pages, not instantly jumping from one page to the next. Randomizing delays makes your scraper appear less robotic and more like a genuine user.
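
A randomized delay takes only a few lines. The sketch below uses the 5-15 second range from the text as defaults; the function names and the injectable `sleep` argument are illustrative choices, not part of any library.

```python
import random
import time

def human_delay(min_seconds=5.0, max_seconds=15.0, rng=random):
    """Return a delay drawn uniformly from [min_seconds, max_seconds]."""
    return rng.uniform(min_seconds, max_seconds)

def polite_pause(min_seconds=5.0, max_seconds=15.0, sleep=time.sleep):
    """Sleep for a random interval and return how long the pause lasted."""
    delay = human_delay(min_seconds, max_seconds)
    sleep(delay)
    return delay
```

Calling `polite_pause()` between page fetches replaces a fixed `time.sleep(10)` with an interval that differs on every request.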

2.2. Use Realistic User-Agents

The User-Agent string identifies the browser and operating system making the request. Many anti-bot systems flag requests with generic or outdated User-Agents. Always use a diverse pool of up-to-date User-Agent strings from popular browsers like Chrome, Firefox, and Safari, across different operating systems. Regularly update this list to reflect current browser versions. A common mistake is using a default User-Agent like python-requests/X.X.X, which immediately signals automated activity.

2.3. Handle Cookies and Sessions

Websites use cookies to manage user sessions and track activity. A scraper that ignores cookies or handles them incorrectly will quickly be identified as a bot. Ensure your scraper accepts and stores cookies, sending them back with subsequent requests within the same session. This maintains a consistent session, making your interactions appear more natural. For instance, logging into a website to access protected content requires proper cookie management to maintain the authenticated session.
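
With Python's standard library, one way to keep cookies across requests is to attach a cookie jar to a URL opener. This is a sketch using `urllib` and `http.cookiejar`; the `make_session` helper is a name chosen for the example.

```python
import http.cookiejar
import urllib.request

def make_session():
    """Return an opener that stores cookies and replays them on later requests."""
    jar = http.cookiejar.CookieJar()
    opener = urllib.request.build_opener(urllib.request.HTTPCookieProcessor(jar))
    return opener, jar

opener, jar = make_session()
# Every opener.open(url) call made through this opener shares the same jar,
# so a Set-Cookie received from a login response is sent back automatically
# on the requests that follow.
```

Use one opener per logical session so that cookies from different identities never mix.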

2.4. Simulate Mouse Movements and Clicks

For highly protected websites, simply sending HTTP requests might not be enough. Advanced anti-bot systems track mouse movements, clicks, and scrolling behavior. With browser automation tools like Selenium or Playwright driving a headless browser, you can programmatically simulate these interactions. This is particularly useful for dynamic websites that load content via JavaScript or require user interaction to reveal data. For example, clicking a "Load more" button or navigating through pagination requires simulating clicks to access all data. While this adds complexity, it significantly enhances your scraper's stealth.

3. Bypass Advanced Anti-Bot Measures

Modern websites deploy sophisticated anti-bot technologies like Cloudflare and DataDome that go beyond simple IP blocking. These systems use a combination of techniques, including CAPTCHAs, browser fingerprinting, and behavioral analysis, to detect and block automated traffic. Overcoming these requires more advanced strategies. Cloudflare Bot Management, for example, utilizes machine learning, behavioral analysis, and fingerprinting to classify bots [4].

3.1. Solve CAPTCHAs Programmatically

CAPTCHAs (Completely Automated Public Turing test to tell Computers and Humans Apart) are designed to prevent bots. While challenging, various services and techniques can help solve them. This includes using CAPTCHA solving services (e.g., Scrapeless) that employ human workers or advanced AI models. For instance, when encountering a reCAPTCHA on a login page, integrating a CAPTCHA solving service allows your scraper to proceed as if a human has solved it. Scrapeless offers a dedicated CAPTCHA solver to automate this process.

3.2. Evade Browser Fingerprinting

Browser fingerprinting involves collecting various data points from your browser (e.g., user agent, installed fonts, plugins, screen resolution, WebGL information) to create a unique identifier. Anti-bot systems use this fingerprint to identify and track scrapers, even if they change IP addresses. To evade this, you need to ensure your headless browser's fingerprint appears consistent and legitimate. This often involves using stealth plugins for Puppeteer or Selenium, or carefully configuring browser properties to match common human browser profiles.

3.3. Manage HTTP Headers

Beyond the User-Agent, other HTTP headers can reveal your scraper's identity. Ensure your requests include a full set of realistic HTTP headers, such as Accept, Accept-Encoding, Accept-Language, and Referer. These headers should match those sent by a real browser. Missing or inconsistent headers are a common red flag for anti-bot systems. For example, a request without an Accept-Language header might be flagged as suspicious, as real browsers always send this information.
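
As a rough sketch, a header set like the one described can be assembled in a helper. The User-Agent strings and header values below are illustrative examples only and will go stale; capture current values from real browsers rather than copying these.

```python
import random

# Illustrative User-Agent strings; keep a real pool current with browser releases.
USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/124.0.0.0 Safari/537.36",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/605.1.15 "
    "(KHTML, like Gecko) Version/17.4 Safari/605.1.15",
    "Mozilla/5.0 (X11; Linux x86_64; rv:125.0) Gecko/20100101 Firefox/125.0",
]

def build_headers(referer=None, rng=random):
    """Return a browser-like header set with a User-Agent picked from the pool."""
    headers = {
        "User-Agent": rng.choice(USER_AGENTS),
        "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8",
        "Accept-Language": "en-US,en;q=0.9",
        # Only advertise encodings your client can actually decompress.
        "Accept-Encoding": "gzip, deflate",
    }
    if referer:
        headers["Referer"] = referer
    return headers
```

Passing the previous page's URL as `referer` keeps the header consistent with a plausible browsing path.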

3.4. Handle JavaScript Challenges

Many websites use JavaScript to dynamically load content or implement anti-bot challenges. If your scraper doesn't execute JavaScript, it will fail to render the page correctly or bypass these challenges. Headless browsers are essential for this, as they can execute JavaScript just like a regular browser. For instance, a single-page application (SPA) heavily relies on JavaScript to display content, and a scraper that doesn't process JavaScript will only see an empty page.

4. Optimize Request Patterns

How your scraper makes requests can be as important as what it sends. Optimizing your request patterns to appear more natural and less aggressive can significantly reduce the chances of being blocked. This involves careful consideration of request frequency, concurrency, and error handling.

4.1. Implement Request Throttling

Throttling limits the number of requests your scraper makes within a given time frame. This prevents you from overwhelming the target server and appearing as a denial-of-service attack. Instead of sending requests as fast as possible, introduce deliberate pauses. This is different from random delays, as throttling ensures you stay within a predefined request limit, protecting both your scraper and the target website.
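
One possible shape for a throttle is a sliding-window limiter, sketched below. The class name and parameters are assumptions for illustration; the `clock` and `sleep` arguments are injectable only so the behavior can be checked without real waiting.

```python
import time

class Throttle:
    """Allow at most `max_requests` calls per `period` seconds (sliding window)."""

    def __init__(self, max_requests, period, clock=time.monotonic, sleep=time.sleep):
        self.max_requests = max_requests
        self.period = period
        self.clock = clock
        self.sleep = sleep
        self.timestamps = []

    def _prune(self, now):
        # Forget requests that have already left the time window.
        self.timestamps = [t for t in self.timestamps if now - t < self.period]

    def wait(self):
        """Block until another request fits in the window, then record it."""
        now = self.clock()
        self._prune(now)
        if len(self.timestamps) >= self.max_requests:
            # Sleep until the oldest recorded request leaves the window.
            self.sleep(self.period - (now - self.timestamps[0]))
            now = self.clock()
            self._prune(now)
        self.timestamps.append(now)
```

Call `throttle.wait()` immediately before each request; for example, `Throttle(30, 60.0)` caps traffic at 30 requests per minute regardless of any random delays layered on top.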

4.2. Diversify Crawling Patterns

Predictable crawling patterns (e.g., always scraping pages in sequential order) can be easily detected. Diversify your crawling paths by randomly selecting links, exploring different sections of the website, or even revisiting previously scraped pages. This makes your activity appear more organic and less like a programmed bot. For example, instead of scraping page1, page2, page3, your scraper might visit page5, then page1, then page8.

4.3. Respect robots.txt and sitemap.xml

While not a direct anti-blocking measure, respecting robots.txt and sitemap.xml files demonstrates good scraping etiquette. robots.txt tells crawlers which paths the site asks them not to visit, while sitemap.xml lists the URLs the site wants discovered. Ignoring robots.txt can lead to your IP being blacklisted or even legal action. Adhering to these guidelines shows respect for the website's policies and can help maintain a good reputation for your scraping activities.
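
Python's standard library includes a robots.txt parser, so checking a URL before fetching it is straightforward. In this sketch the robots.txt content and the `my-scraper` agent name are made up for the example; in practice you would download the file from the target site.

```python
from urllib.robotparser import RobotFileParser

# Example robots.txt content; in practice, fetch it from the target site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""

def load_rules(robots_text):
    """Parse robots.txt text into a RobotFileParser without any network access."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return parser

rules = load_rules(ROBOTS_TXT)
allowed = rules.can_fetch("my-scraper", "https://example.com/products/1")
blocked = rules.can_fetch("my-scraper", "https://example.com/private/data")
delay = rules.crawl_delay("my-scraper")
```

A crawl loop can skip any URL for which `can_fetch` returns `False` and use `crawl_delay` as a lower bound on its pause between requests.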

5. Leverage Advanced Tools and Services

For complex web scraping tasks, relying solely on custom-built scripts can be inefficient and prone to blocking. Specialized tools and services are designed to handle the intricacies of anti-bot measures, offering robust and scalable solutions. The web scraping software market is experiencing significant growth, indicating a rising need for such advanced solutions [1].

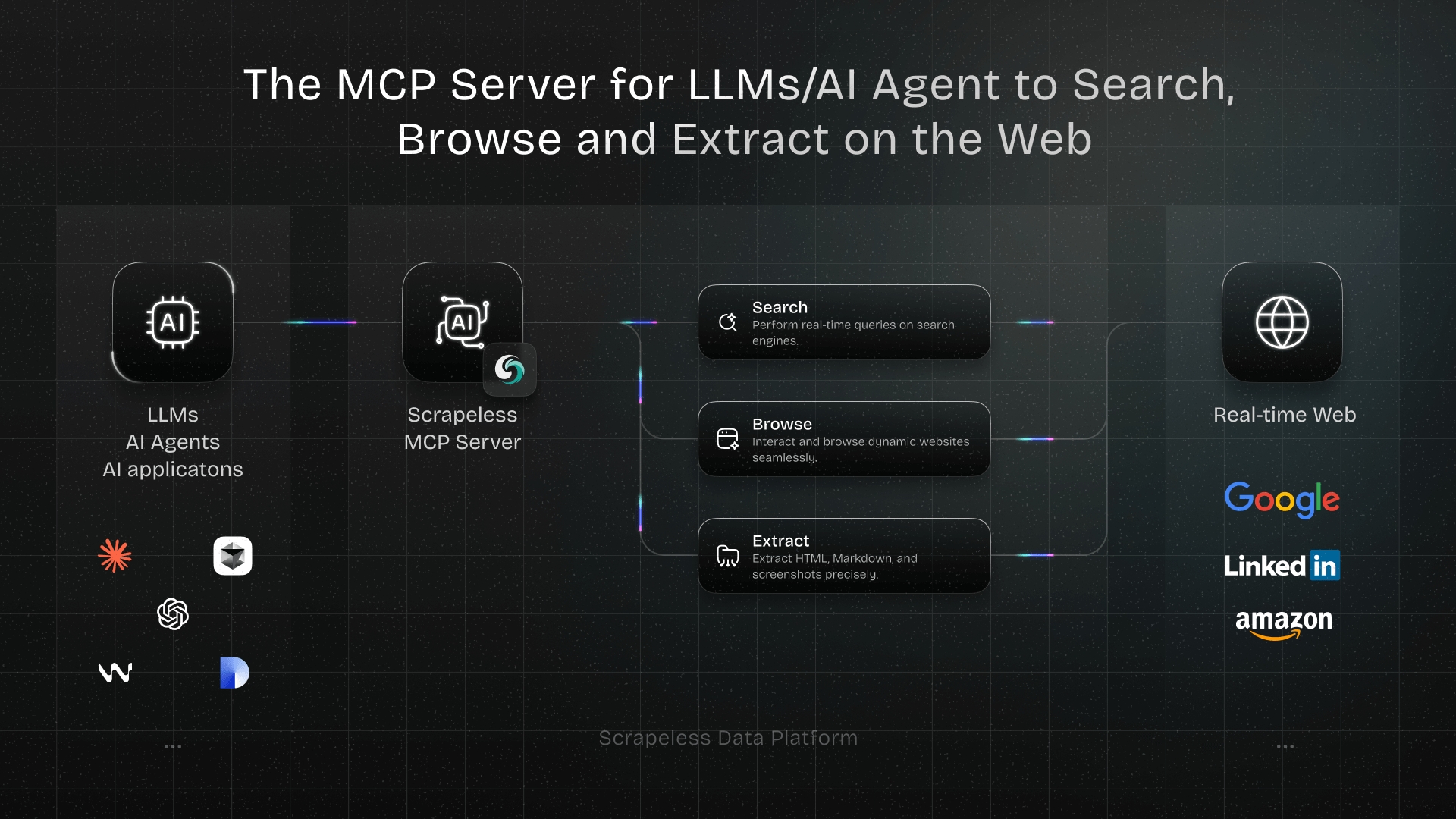

5.1. Use a Web Scraping API

Web scraping APIs, like Scrapeless, abstract away the complexities of proxy management, headless browsers, and anti-bot bypass techniques. You send a URL to the API, and it returns the desired content, handling all the blocking challenges behind the scenes. This allows you to focus on data extraction rather than infrastructure management. For example, when scraping a website protected by Cloudflare or DataDome, a web scraping API can automatically bypass these protections, saving significant development time and effort. Scrapeless offers a Universal Scraping API designed to handle any website without getting blocked.

5.2. Cloud-Based Scraping Solutions

Cloud-based scraping platforms provide a complete environment for running your scrapers, often with built-in anti-blocking features. These platforms manage infrastructure, scaling, and IP rotation, reducing your operational burden. They are ideal for large-scale scraping projects that require high availability and performance. For instance, a company needing to scrape millions of data points daily for competitive intelligence would benefit from a cloud-based solution that can scale on demand.

5.3. Integrate with Browser Automation Frameworks

While headless browsers are powerful, integrating them with robust automation frameworks (e.g., Selenium, Playwright, Puppeteer) allows for more sophisticated interaction and anti-detection strategies. These frameworks provide fine-grained control over browser behavior, enabling you to simulate complex user flows and bypass advanced anti-bot challenges. For example, simulating a user logging into a social media platform and then navigating through their feed requires the precise control offered by these frameworks.

6. Technical Optimizations

Beyond behavioral and tool-based strategies, several technical optimizations can make your scraper more resilient to detection and blocking. These involve fine-tuning your requests and understanding underlying network protocols.

6.1. Use HTTP/2

Many modern websites use HTTP/2, which allows for multiplexing requests over a single connection, improving performance. If your scraper only uses HTTP/1.1, it might stand out. Ensure your scraping library or tool supports HTTP/2 to blend in with contemporary web traffic. This small technical detail can sometimes be enough to avoid detection by more advanced anti-bot systems.

6.2. Handle Retries and Errors Gracefully

Network errors, temporary blocks, or CAPTCHA challenges are inevitable. Implement robust error handling and retry mechanisms with exponential backoff. Instead of immediately retrying a failed request, wait for an increasing amount of time before the next attempt. This prevents your scraper from hammering the server and appearing aggressive. For example, if a request fails, wait 5 seconds, then 10, then 20, and so on, before giving up.
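
The 5, 10, 20 second schedule above can be generated and applied as follows. This is a sketch: `fetch` stands for whatever callable performs your request, and catching the broad `Exception` is a simplification you would narrow to your HTTP client's error types.

```python
import time

def backoff_delays(base=5.0, factor=2.0, max_retries=4):
    """Yield the wait before each retry: base, base*factor, base*factor**2, ..."""
    for attempt in range(max_retries):
        yield base * factor ** attempt

def fetch_with_retries(fetch, url, sleep=time.sleep, **backoff):
    """Call fetch(url); on failure, wait and retry until the delays run out."""
    last_error = None
    # The leading 0.0 is the first attempt, which happens without waiting.
    for delay in [0.0, *backoff_delays(**backoff)]:
        if delay:
            sleep(delay)
        try:
            return fetch(url)
        except Exception as error:  # narrow this to your client's error types
            last_error = error
    raise last_error
```

Adding a small random jitter to each delay is a common refinement when many workers retry at once.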

6.3. Cache Responses

For static content or pages that don't change frequently, cache the responses. This reduces the number of requests you send to the target website, minimizing your footprint and reducing the load on their servers. Caching also speeds up your scraping process, making it more efficient. For instance, if you're scraping product categories that rarely change, caching their HTML content can prevent unnecessary repeated requests.
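
A minimal in-memory cache with an expiry time might look like the sketch below. The class name, the one-hour default, and the `fetch` callable are assumptions for the example; a long-running scraper would more likely persist the cache to disk.

```python
import time

class ResponseCache:
    """Keep fetched bodies in memory and reuse them until they expire."""

    def __init__(self, fetch, ttl_seconds=3600, clock=time.monotonic):
        self.fetch = fetch            # callable that downloads a URL
        self.ttl_seconds = ttl_seconds
        self.clock = clock
        self._store = {}              # url -> (fetched_at, body)

    def get(self, url):
        entry = self._store.get(url)
        if entry is not None and self.clock() - entry[0] < self.ttl_seconds:
            return entry[1]           # still fresh: no request is sent
        body = self.fetch(url)
        self._store[url] = (self.clock(), body)
        return body
```

Wrapping your download function once, as in `cache = ResponseCache(download)`, lets the rest of the scraper call `cache.get(url)` without caring whether a request was actually made.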

7. Stay Updated and Adapt

The landscape of anti-bot technologies is constantly evolving. What works today might not work tomorrow. Continuous learning and adaptation are crucial for long-term web scraping success.

7.1. Monitor Website Changes

Regularly monitor the target website for changes in its structure, anti-bot measures, or robots.txt file. Websites frequently update their defenses, and your scraper needs to adapt accordingly. This proactive approach helps you identify and address potential blocking issues before they disrupt your data collection.

7.2. Read Anti-Bot Research

Stay informed about the latest research and developments in anti-bot technologies and bypass techniques. Blogs, academic papers, and forums dedicated to web scraping and cybersecurity can provide valuable insights into new detection methods and how to counter them. This knowledge empowers you to build more resilient scrapers.

7.3. Use Open-Source Tools and Communities

Leverage open-source web scraping libraries and frameworks, and participate in online communities. These resources often provide up-to-date solutions, shared experiences, and collaborative problem-solving for common blocking challenges. The collective knowledge of the community can be invaluable when facing a particularly stubborn anti-bot system.

8. Legal and Ethical Considerations

While this article focuses on technical methods to avoid blocking, it's crucial to acknowledge the legal and ethical implications of web scraping. Always ensure your activities comply with relevant laws and website terms of service.

8.1. Review Terms of Service

Before scraping any website, carefully review its terms of service. Some websites explicitly prohibit scraping, while others have specific guidelines. Adhering to these terms can prevent legal disputes and maintain a positive relationship with the website owner. Ignoring terms of service can lead to legal action or permanent IP bans.

8.2. Data Privacy and GDPR

When scraping personal data, ensure compliance with data privacy regulations like GDPR (General Data Protection Regulation) or CCPA (California Consumer Privacy Act). This involves understanding what constitutes personal data, how it can be collected, stored, and processed. Non-compliance can result in significant fines and legal repercussions.

8.3. Ethical Scraping Practices

Beyond legal requirements, adopt ethical scraping practices. This includes avoiding excessive load on servers, not scraping sensitive or private information without consent, and providing clear attribution if you publish scraped data. Ethical scraping builds trust and contributes to a healthier web ecosystem.

9. Advanced Proxy Techniques

Proxies are fundamental, but their effective use extends to more nuanced strategies that can further enhance your scraping success.

9.1. Backconnect Proxies

Backconnect proxies (often sold as rotating proxies) expose a single gateway endpoint and automatically rotate the outgoing IP address for you, often with every request or after a set time. This eliminates the need for manual proxy management and provides a fresh IP for each interaction, making it extremely difficult for websites to track your activity based on IP addresses. They are particularly useful for large-scale scraping operations where managing thousands of individual proxies would be impractical.

9.2. Proxy Chains

For extreme anonymity and to bypass highly sophisticated detection systems, you can chain multiple proxies together. This routes your request through several proxy servers before reaching the target website, obscuring your origin even further. While this adds latency, it provides an additional layer of security against advanced tracking. This method is typically reserved for very sensitive or challenging scraping tasks.

10. Headless Browser Enhancements

While headless browsers are powerful, specific enhancements can make them even more effective at mimicking human users and evading detection.

10.1. Randomize Viewport Size

Different users have different screen resolutions. Randomizing the viewport size of your headless browser can make your requests appear more diverse and less like a uniform bot. Instead of always using a standard desktop resolution, vary it to simulate different devices (e.g., mobile, tablet, various desktop sizes).

10.2. Manage Browser Extensions

Real browsers often have extensions installed. While not always necessary, simulating the presence of common browser extensions (e.g., ad blockers, dark mode extensions) can add another layer of realism to your headless browser's fingerprint. This is a more advanced technique but can be effective against highly sophisticated fingerprinting algorithms.

10.3. Simulate Browser Events

Beyond basic clicks and scrolls, simulate a wider range of browser events such as mouseover, keydown, focus, and blur (the events behind the onmouseover, onkeydown, onfocus, and onblur handlers). These subtle interactions are often tracked by anti-bot systems to build a behavioral profile of the user. Including them makes your scraper's behavior much harder to distinguish from a human's.

11. Network-Level Obfuscation

Some anti-bot measures operate at the network level, analyzing traffic patterns and TLS fingerprints. Obfuscating these can provide an additional layer of protection.

11.1. TLS Fingerprinting Evasion

TLS (Transport Layer Security) fingerprinting analyzes the unique characteristics of your TLS handshake to identify the client software. Different browsers and libraries have distinct TLS fingerprints. To evade this, use libraries or tools that can mimic the TLS fingerprint of a real browser, such as curl-impersonate or specialized scraping APIs. This ensures your network requests don't reveal your automated nature at a low level.

11.2. Randomize HTTP Request Order

While HTTP/2 allows multiplexing, the order in which resources are requested can still be a subtle indicator. Randomizing the order of resource requests (e.g., images, CSS, JavaScript files) can make your traffic less predictable and more human-like. This is a highly advanced technique, but it can be effective against very sophisticated behavioral analysis systems.

12. Content-Based Detection Avoidance

Anti-bot systems can also analyze the content of your requests and responses for bot-like patterns. Avoiding these can prevent detection.

12.1. Avoid Honeypot Traps

Honeypot traps are invisible links or fields designed to catch bots. If your scraper attempts to follow an invisible link or fill an invisible form field, it immediately identifies itself as a bot. Always inspect the HTML for display: none, visibility: hidden, or height: 0, and avoid interacting with such elements. This requires careful parsing of the HTML and CSS.
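
The inline-style check described above can be sketched with the standard library's HTML parser. This example is deliberately limited: it only looks at each link's own `style` and `hidden` attributes, so links hidden through stylesheets, CSS classes, or a hidden parent element would need a rendering browser to detect.

```python
from html.parser import HTMLParser

def is_hidden(style):
    """Return True if an inline style hides the element."""
    declarations = {}
    for part in style.split(";"):
        if ":" in part:
            name, value = part.split(":", 1)
            declarations[name.strip().lower()] = value.strip().lower()
    return (
        declarations.get("display") == "none"
        or declarations.get("visibility") == "hidden"
        or declarations.get("height") in ("0", "0px")
    )

class VisibleLinkParser(HTMLParser):
    """Collect hrefs from <a> tags, setting aside links that are hidden inline."""

    def __init__(self):
        super().__init__()
        self.visible_links = []
        self.skipped_links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attributes = dict(attrs)
        href = attributes.get("href")
        if not href:
            return
        hidden = "hidden" in attributes or is_hidden(attributes.get("style") or "")
        (self.skipped_links if hidden else self.visible_links).append(href)
```

Feed a page with `parser.feed(html)` and follow only the URLs in `parser.visible_links`.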

12.2. Handle Dynamic Content Correctly

Websites often load content dynamically using AJAX or other JavaScript techniques. If your scraper only processes the initial HTML, it will miss significant portions of the data. Ensure your scraper waits for dynamic content to load before attempting to extract data. This often involves using WebDriverWait in Selenium or similar mechanisms in other headless browser frameworks.

13. Infrastructure and Scaling

For large-scale scraping, your infrastructure plays a critical role in avoiding blocks and ensuring efficiency.

13.1. Distributed Scraping Architecture

Distribute your scraping tasks across multiple machines or cloud instances. This allows you to use a wider range of IP addresses and reduces the load on any single machine, making your operations more resilient and less prone to detection. A distributed architecture also provides redundancy and scalability.

13.2. Use Rotating Proxies at Scale

When operating at a large scale, manually managing proxies becomes impossible. Utilize proxy services that offer automatic rotation and a vast pool of IPs. This ensures that even with a high volume of requests, your IP addresses are constantly changing, maintaining a low detection risk. This is where the investment in a premium proxy provider truly pays off.

14. Data Storage and Management

Efficient data storage and management are crucial for any scraping project, especially when dealing with large volumes of data.

14.1. Incremental Scraping

Instead of re-scraping entire websites, implement incremental scraping. Only scrape new or updated content, reducing the number of requests and minimizing your footprint. This is particularly useful for news sites or e-commerce platforms where content changes frequently but not entirely.
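
One way to find new or updated pages without fetching them all is to read the `lastmod` dates in the site's sitemap, assuming the site publishes one and keeps it accurate. The sitemap below is invented for the example, and the function name is illustrative.

```python
import xml.etree.ElementTree as ET
from datetime import date

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Invented sample; a real sitemap would be downloaded from the target site.
SITEMAP = """\
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/a</loc><lastmod>2024-01-10</lastmod></url>
  <url><loc>https://example.com/b</loc><lastmod>2024-03-05T08:30:00+00:00</lastmod></url>
  <url><loc>https://example.com/c</loc></url>
</urlset>
"""

def urls_updated_since(sitemap_xml, last_run):
    """Return URLs whose lastmod is on or after last_run, or is missing."""
    root = ET.fromstring(sitemap_xml)
    fresh = []
    for entry in root.findall("sm:url", NS):
        loc = entry.findtext("sm:loc", namespaces=NS)
        lastmod = entry.findtext("sm:lastmod", namespaces=NS)
        # Without a lastmod we cannot tell, so re-scrape to be safe.
        if lastmod is None or date.fromisoformat(lastmod[:10]) >= last_run:
            fresh.append(loc)
    return fresh

to_scrape = urls_updated_since(SITEMAP, date(2024, 2, 1))
```

Where no sitemap is available, conditional requests using the `ETag` and `Last-Modified` response headers are another option.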

14.2. Database Integration

Store your scraped data in a structured database (e.g., SQL, NoSQL). This allows for efficient querying, analysis, and management of large datasets. Proper database design can also help in tracking changes, preventing duplicates, and ensuring data integrity.
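
As a small sketch of duplicate prevention, the example below uses SQLite with the page URL as the primary key, so re-scraping a page overwrites its row instead of adding a second one. The `products` table and its columns are hypothetical.

```python
import sqlite3

def open_store(path=":memory:"):
    """Open (or create) the database and make sure the table exists."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS products (
               url TEXT PRIMARY KEY,
               title TEXT NOT NULL,
               price REAL,
               scraped_at TEXT DEFAULT CURRENT_TIMESTAMP
           )"""
    )
    return conn

def save_product(conn, url, title, price):
    """Insert a product, replacing any earlier row for the same URL."""
    with conn:  # commits on success, rolls back on error
        conn.execute(
            "INSERT OR REPLACE INTO products (url, title, price) VALUES (?, ?, ?)",
            (url, title, price),
        )
```

To track price changes over time instead of overwriting them, the key could be extended to `(url, scraped_at)`.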

15. Monitoring and Alerting

Proactive monitoring of your scraping operations is key to identifying and resolving blocking issues quickly.

15.1. Implement Logging

Comprehensive logging of all requests, responses, and errors helps in debugging and identifying patterns of blocking. Log details such as HTTP status codes, response times, and any anti-bot challenges encountered. This data is invaluable for refining your scraping strategies.

15.2. Set Up Alerts

Configure alerts for critical events, such as a sudden increase in 403 (Forbidden) responses, CAPTCHA occurrences, or significant drops in data collection rates. Early alerts allow you to react quickly to blocking attempts and adjust your scraper before major disruptions occur.
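
A simple alert condition is the share of blocked responses among the most recent requests. In the sketch below, the window size, the 20% threshold, and treating 429 (Too Many Requests) as a block alongside 403 are all assumptions to tune per target.

```python
from collections import deque

class BlockRateMonitor:
    """Track recent responses and flag when too many of them look like blocks."""

    def __init__(self, window=100, threshold=0.2, block_statuses=(403, 429)):
        self.recent = deque(maxlen=window)   # True for each blocked response
        self.threshold = threshold
        self.block_statuses = block_statuses

    def record(self, status_code):
        """Record one response; return True when the block rate warrants an alert."""
        self.recent.append(status_code in self.block_statuses)
        window_full = len(self.recent) == self.recent.maxlen
        return window_full and sum(self.recent) / len(self.recent) > self.threshold
```

When `record` returns `True`, the scraper can pause, switch proxies, or notify an operator through whatever channel you use.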

16. User-Agent and Header Rotation

Beyond simply using realistic User-Agents, actively rotating them adds another layer of defense.

16.1. Rotate User-Agents

Just like IP addresses, rotate your User-Agent strings with each request or after a few requests, ideally switching at the same time as the IP address and cookies so that a single session never appears to come from two different browsers. Maintain a large list of diverse and up-to-date User-Agents to mimic a wide range of real users browsing from different devices and browsers. This makes it harder for anti-bot systems to build a consistent profile of your scraper.

16.2. Randomize Header Order

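While less common, some advanced anti-bot systems might analyze the order of HTTP headers. A fixed, library-default order repeated on every request can act as a signature, so varying it adds a subtle layer of obfuscation. Real browsers each send headers in their own characteristic order, however, so the variations work best when drawn from orders that real browsers actually use and that match the User-Agent you present. This is a micro-optimization but can contribute to overall stealth.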

17. Referer Header Management

The Referer header indicates the URL of the page that linked to the current request. Proper management of this header can significantly impact your scraper's stealth.

17.1. Set Realistic Referers

Always set a realistic Referer header that reflects a natural browsing path. For example, if you're scraping a product page, the Referer should ideally be the category page or search results page that led to it. An empty or incorrect Referer can be a red flag for anti-bot systems.

17.2. Rotate Referers

Similar to User-Agents, rotate your Referer headers to simulate diverse browsing patterns. This can involve maintaining a list of common entry points to the target website or dynamically generating referers based on your scraping path. This adds to the realism of your simulated browsing behavior.

18. JavaScript Execution Environment

For websites heavily reliant on JavaScript, ensuring your execution environment is robust and indistinguishable from a real browser is paramount.

18.1. Use Real Browser Kernels

Whenever possible, use headless browsers built on real browser engines (e.g., Chromium via Puppeteer, or Chromium, Firefox, or WebKit via Playwright). These provide the most accurate JavaScript execution environment and are less likely to be detected than custom JavaScript engines. This ensures that all client-side scripts run as expected, including those used for anti-bot detection.

18.2. Avoid Common Bot Signatures in JavaScript

Some anti-bot systems inject JavaScript code to detect common bot signatures (e.g., window.navigator.webdriver being true). Use stealth plugins or custom patches to hide these signatures from the website's JavaScript environment. This makes your headless browser appear as a regular, human-controlled browser.

19. IP Blacklist Monitoring

Proactively monitoring IP blacklists can help you identify and replace compromised proxies before they cause significant disruptions.

19.1. Check Proxy Health

Regularly check the health and status of your proxy pool. Remove any proxies that are slow, unresponsive, or have been blacklisted. Many proxy providers offer APIs for this purpose, allowing for automated health checks. A healthy proxy pool is essential for consistent and uninterrupted scraping.
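
A health check can be as simple as counting consecutive failures per proxy and dropping those that exceed a limit. The class below is a sketch with hypothetical names; it assumes your request code reports each outcome back to the pool.

```python
class ProxyPool:
    """Track consecutive failures per proxy and expose only the healthy ones."""

    def __init__(self, proxies, max_failures=3):
        self.failures = {proxy: 0 for proxy in proxies}
        self.max_failures = max_failures

    def report(self, proxy, ok):
        """Reset the counter on success; count consecutive failures otherwise."""
        if proxy in self.failures:
            self.failures[proxy] = 0 if ok else self.failures[proxy] + 1

    def healthy(self):
        """Return proxies that have not reached the failure limit."""
        return [p for p, n in self.failures.items() if n < self.max_failures]
```

Proxies that drop out of `healthy()` can be retested later and restored with a successful `report`, since blocks are often temporary.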

19.2. Diversify Proxy Providers

Avoid relying on a single proxy provider. Diversifying your proxy sources across multiple providers reduces the risk of a single point of failure. If one provider's IPs get widely blacklisted, you have alternatives to fall back on, ensuring continuity of your scraping operations.

20. Continuous Learning and Community Engagement

The fight against anti-bot measures is an ongoing battle. Staying connected and continuously learning from the community is vital.

20.1. Join Web Scraping Forums and Communities

Participate in online forums, subreddits (like r/webscraping), and communities dedicated to web scraping. These platforms are excellent for sharing knowledge, discussing new challenges, and finding solutions to complex blocking issues. The collective experience of the community can provide insights that are not readily available elsewhere.

20.2. Attend Webinars and Conferences

Stay updated on the latest trends and techniques by attending webinars, workshops, and conferences focused on web scraping, data extraction, and cybersecurity. These events often feature experts sharing their insights on advanced anti-bot bypass methods and best practices. Continuous learning is key to staying ahead in this dynamic field.

Why Choose Scrapeless for Unblocked Web Scraping?

Navigating the complexities of anti-bot systems can be a daunting task, even with the most advanced strategies. This is where a specialized service like Scrapeless becomes invaluable. Scrapeless is designed to simplify your web scraping efforts by handling the intricate challenges of bypassing anti-bot measures, allowing you to focus solely on data extraction.

Scrapeless offers a robust solution for scraping any website without getting blocked. It provides advanced capabilities to bypass common anti-bot technologies such as Cloudflare, DataDome, and many others. This means you no longer have to worry about managing proxies, rotating User-Agents, or solving CAPTCHAs manually. Scrapeless automates these processes, ensuring a seamless and efficient scraping experience.

Key Benefits of Scrapeless:

- Bypass Any Anti-Bot: Effortlessly navigate websites protected by Cloudflare, DataDome, PerimeterX, and other sophisticated anti-bot solutions.

- Global Proxy Network: Access a vast network of residential and datacenter proxies with automatic rotation, ensuring your requests always appear legitimate.

- Headless Browser Integration: Seamlessly handle JavaScript-rendered content and dynamic websites without complex configurations.

- Automated CAPTCHA Solving: Integrate with built-in CAPTCHA solving mechanisms to overcome challenges without manual intervention.

- Scalability and Reliability: Designed for large-scale operations, providing consistent performance and high success rates.

Free Trial Available: Experience the power of unblocked web scraping firsthand. Try Scrapeless for free today!

Conclusion

Web scraping without getting blocked is an ongoing challenge that requires a multi-faceted approach. By implementing the 20 strategies outlined in this article—from mastering proxy management and mimicking human behavior to leveraging advanced tools and staying updated on anti-bot trends—you can significantly enhance your scraper's resilience and success rate. The key lies in continuous adaptation and a proactive stance against evolving anti-bot technologies.

For those seeking a streamlined and highly effective solution, consider integrating Scrapeless into your workflow. Scrapeless takes the burden of anti-bot bypass off your shoulders, allowing you to focus on extracting valuable data with unparalleled efficiency. Its robust features and seamless integration make it an indispensable tool for any serious web scraping endeavor.

Frequently Asked Questions (FAQ)

Q1: Why do websites block web scrapers?

Websites block scrapers to protect their data, prevent server overload, maintain fair access to information, and sometimes to enforce their terms of service. They want to ensure that their content is consumed by human users in a controlled manner, not by automated bots that might misuse the data or disrupt their services.

Q2: What is the most effective way to avoid getting blocked?

The most effective approach is a combination of strategies. Using high-quality residential proxies with IP rotation, mimicking human browsing behavior (random delays, realistic User-Agents), and employing headless browsers for JavaScript-heavy sites are crucial. For complex sites, a specialized web scraping API like Scrapeless that handles anti-bot bypass automatically is often the most reliable solution.

Q3: Are web scraping APIs better than building my own scraper?

For many users, especially those dealing with sophisticated anti-bot measures, web scraping APIs offer significant advantages. They abstract away the complexities of proxy management, CAPTCHA solving, and browser fingerprinting, saving considerable development time and resources. While building your own scraper offers maximum control, APIs provide a more efficient and reliable solution for unblocked scraping at scale.

Q4: How often should I rotate my IP addresses?

The optimal frequency for IP rotation depends on the target website and its anti-bot mechanisms. For highly sensitive sites, rotating IPs with every request might be necessary. For less aggressive sites, rotating every few requests or after a certain time interval (e.g., every 30 seconds to 1 minute) can be sufficient. Experimentation and monitoring are key to finding the right balance.

Q5: Is web scraping legal?

The legality of web scraping is complex and varies by jurisdiction and the nature of the data being scraped. Scraping publicly available data is often considered legal, but scraping copyrighted content, personal data, or data behind a login wall without permission can be illegal. Always review a website's terms of service and consult legal counsel if unsure, especially when dealing with sensitive information or large-scale data collection.

References

[1] Research Nester. "Web Scraping Software Market Size & Share - Growth Trends 2037." Research Nester

[2] Scrapfly. "The Complete Guide To Using Proxies For Web Scraping." (22 Aug. 2024) Scrapfly Blog

[3] DataDome. "9 Bot Detection Tools for 2025: Selection Criteria & Key Features." (10 Mar. 2025) DataDome

[4] Cloudflare. "Cloudflare Bot Management & Protection." Cloudflare

At Scrapeless, we only access publicly available data while strictly complying with applicable laws, regulations, and website privacy policies. The content in this blog is for demonstration purposes only and does not involve any illegal or infringing activities. We make no guarantees and disclaim all liability for the use of information from this blog or third-party links. Before engaging in any scraping activities, consult your legal advisor and review the target website's terms of service or obtain the necessary permissions.