How to Fine-tune Llama 4: A Comprehensive Guide

Expert Network Defense Engineer

Introduction: The Importance of Llama 4 and Fine-tuning

In the rapidly evolving landscape of artificial intelligence, Large Language Models (LLMs) have emerged as a central force driving technological advancements. Among these, Meta AI's Llama series models have garnered significant attention in both research and application fields due to their open-source nature and high performance. Llama 4, as its latest generation, not only inherits the strengths of its predecessors but also achieves significant breakthroughs in multimodal processing, function calling, and tool integration, offering developers unprecedented flexibility and powerful capabilities. However, general-purpose models often fall short in specific tasks or domains. This is where fine-tuning becomes a crucial step in transforming a general model into a domain-specific expert. Through fine-tuning, we can adapt the Llama 4 model to specific datasets and application scenarios, thereby significantly enhancing its performance and accuracy in particular tasks.

This article aims to provide a comprehensive practical guide on how to fine-tune Llama 4. We will delve into the architecture and variants of Llama 4, compare different fine-tuning strategies, emphasize the importance of high-quality data, and provide detailed practical steps with code examples. Furthermore, we will discuss how to evaluate the effectiveness of fine-tuning and specifically recommend Scrapeless, a powerful data scraping tool, to help readers acquire high-quality training data. Whether you intend to improve Llama 4's performance in specific industry applications or explore its potential in innovative tasks, this guide will offer valuable insights and practical steps, enabling you to successfully fine-tune Llama 4 like a seasoned professional.

Llama 4 Architecture and Variants: Understanding Its Core

Successfully fine-tuning Llama 4 begins with a thorough understanding of its architecture and the characteristics of its various models. Llama 4 is Meta AI's fourth-generation open-source large language model family, designed for exceptional flexibility, scalability, and seamless integration. Compared to its predecessors, Llama 4 introduces significant enhancements, positioning it as one of the most advanced open-source LLMs available today.

Key features of Llama 4 include:

- Native Multimodal Capabilities: Llama 4 can process both text and image information natively. This means it not only understands and generates text but also interprets visual content, opening doors for building more intelligent and interactive AI applications.

- Function Calling and External Tool Integration: Llama 4 supports direct function calls and seamless integration with external tools, such as web search engines or code execution environments. This capability allows Llama 4 to perform more complex tasks, including retrieving enterprise data, calling custom APIs, and orchestrating multi-step workflows.

- Mixture of Experts (MoE) Architecture: All Llama 4 variants adopt the MoE design. For each token, a router activates only a small subset of 'expert' sub-networks, so compute per token scales with the active parameters rather than with the full model. For fine-tuning Llama 4, this lowers the compute per training step, but every expert must still be held in memory, so VRAM requirements follow the total parameter count.
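To make the routing idea concrete, here is a minimal sketch of generic top-k expert routing in plain Python. It is an illustration of the MoE pattern in general and is not Llama 4's actual router; the function names, the toy scalar "experts", and the router scores are all hypothetical.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(router_logits, top_k=1):
    """Pick the top_k experts for one token and renormalize their gate weights."""
    probs = softmax(router_logits)
    # Indices of the highest-probability experts, best first.
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    kept = sum(probs[i] for i in chosen)
    return {i: probs[i] / kept for i in chosen}


def moe_forward(x, experts, router_logits, top_k=1):
    """Weighted sum of the selected experts' outputs; unselected experts never run."""
    gates = route_token(router_logits, top_k)
    return sum(weight * experts[i](x) for i, weight in gates.items())


# Four toy "experts", each a scalar function standing in for a feed-forward block.
experts = [lambda x: x + 1.0, lambda x: 2.0 * x, lambda x: x * x, lambda x: -x]
router_logits = [0.1, 2.0, 0.3, -1.0]  # hypothetical router scores for one token

print(route_token(router_logits, top_k=1))       # only expert 1 is selected
print(moe_forward(3.0, experts, router_logits))  # expert 1 applied to 3.0
```

Only the selected experts are evaluated, which is where the compute savings come from. All four experts still have to exist in memory, which is why memory use tracks the total parameter count.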

The Llama 4 series currently includes several variants, each tailored to different application scenarios and computational resource constraints. The two most notable variants are:

- Llama 4 Scout (17B active parameters, 16 experts): The smaller of the two, with roughly 109B total parameters. It performs strongly among models of its size and supports a context window of up to 10M tokens. It is the more practical choice for fine-tuning Llama 4 when resources are constrained.

- Llama 4 Maverick (17B active parameters, 128 experts): With roughly 400B total parameters, this variant excels in reasoning and coding, even surpassing GPT-4o in some benchmarks. Its larger number of experts gives it more capacity for handling complex tasks.

It is important to note that Llama 4 checkpoints share the same tokenizer and the same overall design (rotary positional encodings and a Mixture of Experts router), although the number of experts differs between variants. This commonality means that fine-tuning strategies and code developed for one variant can often be adapted to others, simplifying development and deployment.

Understanding these architectural details and variant characteristics is the first step toward successfully fine-tuning Llama 4. It helps in selecting the appropriate model based on specific needs and designing a targeted fine-tuning approach to maximize Llama 4's potential.

Fine-tuning Strategies: Choosing the Right Method for You

Successfully fine-tuning Llama 4 depends not only on understanding the model's architecture but also on selecting the right fine-tuning strategy. Different strategies offer a trade-off between fidelity, computational resource requirements, and cost. Choosing the most suitable method based on your specific needs and available resources is crucial. Here are some of the most popular fine-tuning strategies and their characteristics:

Full Supervised Fine-tuning (SFT):

- Description: SFT is the most straightforward fine-tuning method, updating all parameters of the pre-trained model. This means all layers of the model are adjusted based on the new dataset.

- Advantages: It allows the model to adapt to the new data to the greatest extent, typically achieving the highest performance and fidelity.

- Disadvantages: It has huge computational resource requirements, needing a large amount of GPU memory and training time, making it the most expensive option. For a large model like Llama 4, full-parameter fine-tuning usually requires multiple high-end GPUs.

- Applicable Scenarios: SFT can be considered when you have ample computational resources and the highest demands for model performance. For most users, however, it is not the first choice for fine-tuning Llama 4.

LoRA (Low-Rank Adaptation):

- Description: LoRA is a parameter-efficient fine-tuning method. It freezes the weights of the pre-trained model and injects small, trainable low-rank adapter matrices into specific layers (such as the query, key, and value projection layers of the attention mechanism). These adapter matrices contain far fewer parameters than the original model, which greatly reduces the number of parameters that need to be trained.

- Advantages: Compared to SFT, LoRA can achieve performance close to full fine-tuning at a significantly lower computational cost (the rule of thumb used here is about 95% fidelity at roughly 25% of the computation, though results vary by task and rank). It also significantly reduces VRAM usage, because gradients and optimizer state are kept only for the adapters, which brings fine-tuning Llama 4 within reach of much smaller hardware budgets than SFT.

- Disadvantages: Although the performance is close to SFT, there may still be slight differences. The position and rank of the adapter injection need to be carefully selected.

- Applicable Scenarios: For users with limited resources who still pursue high performance, LoRA is an excellent choice for fine-tuning Llama 4.

QLoRA (Quantized Low-Rank Adaptation):

- Description: QLoRA is a further optimization of LoRA. It quantizes the weights of the pre-trained model to 4-bit NF4 (NormalFloat 4-bit) precision and keeps these quantized weights unchanged during training. Only the LoRA adapter matrices are trainable and are usually computed with higher precision (such as 16-bit).

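- Advantages: QLoRA greatly reduces VRAM requirements: storing the base weights in 4-bit takes roughly a quarter of the memory of 16-bit weights, which is what makes single-GPU fine-tuning realistic for many models. Whether a given Llama 4 variant fits on your GPU depends on its total parameter count, since all experts must be loaded.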

- Disadvantages: Due to quantization, the model's performance may be slightly reduced, but it is usually within an acceptable range.

- Applicable Scenarios: For users with limited VRAM who want to fine-tune Llama 4 on a single GPU, QLoRA is currently the most recommended method.

Prompt-tuning:

- Description: Prompt-tuning does not modify any of the model's parameters. Instead, it learns a "soft prompt" or prefix vector that is prepended to the model's input. Only this prompt is trained, and it steers the frozen model's behavior toward the specific task.

- Advantages: It has the lowest computational cost, minimal VRAM requirements, and fast training speed.

- Disadvantages: The fine-tuning scope is the narrowest, and the performance improvement is usually not as good as LoRA or SFT, with limited adaptability to tasks.

- Applicable Scenarios: Simple tasks with extremely limited resources and low performance requirements.
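The parameter savings described for LoRA above follow from simple arithmetic: for a linear layer with a `d_out x d_in` weight matrix, a rank-`r` adapter adds `r * (d_in + d_out)` trainable parameters. The sketch below works this out for a hypothetical square projection; the dimension 4096 is illustrative and is not taken from any Llama 4 configuration.

```python
def lora_params(d_in, d_out, rank):
    """Trainable parameters LoRA adds to one d_out x d_in linear layer.

    The adapter is two matrices: A with shape (rank, d_in) and
    B with shape (d_out, rank).
    """
    return rank * (d_in + d_out)


def full_params(d_in, d_out):
    """Parameters in the frozen dense weight matrix."""
    return d_in * d_out


# Hypothetical square projection; 4096 is illustrative, not a Llama 4 dimension.
d_in = d_out = 4096
for rank in (8, 16, 32):
    added = lora_params(d_in, d_out, rank)
    share = added / full_params(d_in, d_out)
    print(f"rank={rank:>2}: {added:,} trainable vs {full_params(d_in, d_out):,} frozen ({share:.2%})")
```

Doubling the rank doubles the adapter size, which is why rank is the main knob for trading capacity against memory.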

The following table summarizes the comparison of these fine-tuning strategies:

| Strategy Name | Description | Advantages | Disadvantages | Applicable Scenarios | Resource Requirements |
|---|---|---|---|---|---|
| SFT | Updates all parameters | Highest fidelity | Highest computational cost, large VRAM demand | Extremely high performance requirements, ample resources | High |
| LoRA | Freezes base model, injects adapter matrices | Low computational cost, near-SFT performance | Still requires some VRAM | Limited resources but high-performance pursuit | Medium |
| QLoRA | Quantized version of LoRA, 4-bit NF4 quantization | Very low VRAM demand, single-GPU feasible | Slightly lower performance than LoRA | Single-GPU environment, limited VRAM | Low |
| Prompt-tuning | Learns a prefix vector | Lowest cost | Narrowest scope, limited performance improvement | Extremely limited resources, low performance requirements | Very Low |

In practice, we generally recommend starting with LoRA when fine-tuning Llama 4, as it strikes a good balance between performance and resource consumption. If your GPU memory is very limited, QLoRA will be your best choice. Choosing the right strategy directly affects the efficiency and final outcome of the fine-tuning.
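When choosing between LoRA and QLoRA, a quick back-of-the-envelope estimate of the memory taken by the base weights is often enough to see what will fit. The sketch below covers weights only; activations, optimizer state, adapter weights, and quantization overhead are deliberately left out. The 17B figure is the per-token active count quoted for Scout, and the 109B figure is an assumed total across all experts, so treat both as illustrative inputs.

```python
def weight_memory_gb(num_params, bits_per_param):
    """Memory for the model weights alone, in GB (10^9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


# Illustrative parameter counts (assumptions, see the text above).
ACTIVE_PARAMS = 17e9   # parameters used per token
TOTAL_PARAMS = 109e9   # assumed total across all experts

for label, count in (("active only", ACTIVE_PARAMS), ("all experts", TOTAL_PARAMS)):
    for bits in (16, 4):
        print(f"{label:>11} @ {bits:>2}-bit: {weight_memory_gb(count, bits):6.1f} GB")
```

Because an MoE model keeps every expert in memory, the "all experts" rows are the ones that matter when sizing a GPU.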

Data Preparation: The Cornerstone of Successful Fine-tuning

When discussing how to fine-tune Llama 4, an undeniable truth is that data quality determines the upper limit of model performance. Even with the most advanced model architecture and the most sophisticated fine-tuning strategies, a model cannot reach its full potential if the training data is of low quality. A high-quality, representative dataset is the cornerstone of successfully fine-tuning Llama 4, ensuring that the model learns the correct patterns, domain knowledge, and desired behaviors.

A typical fine-tuning dataset consists of two parts:

- Base Corpus: This part of the data provides the model with general language understanding and generation capabilities. For example, the OpenAssistant Conversations dataset (approximately 161,000 messages, released under the Apache 2.0 license) offers a diverse range of intents and dialogue structures, making it a good choice for building general conversational abilities.

- Domain-Specific Data: This part of the data is tailored to a specific task or domain, such as your company's internal Q&A logs, product documentation, customer service conversation records, or professional articles and forum discussions from a specific industry. This data helps Llama 4 learn the terminology, facts, and reasoning patterns of a particular domain.

After obtaining the raw data, a rigorous data cleaning process is crucial:

- Length Filtering: Remove texts that are too short (e.g., fewer than 4 tokens) or too long (e.g., more than 3000 tokens). Short texts may lack meaningful information, while long texts can lead to inefficient training or be difficult for the model to process.

- Format Standardization and Deduplication: Standardize Unicode encoding, remove HTML tags, Markdown formatting, or other non-text content. Deduplicate by calculating the SHA256 hash of the content to ensure that there are no duplicate samples in the dataset, which helps prevent the model from overfitting.

- Content Filtering: Apply profanity filters or other content filters to remove inappropriate or harmful content. Then, perform manual spot checks to identify issues that automated tools might miss.

- License Tracking: If you are combining datasets from different sources, be sure to carefully track the source and license information for each example to ensure the compliance of the final model.
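The cleaning steps above can be sketched with the Python standard library alone. This is a minimal illustration, not a production pipeline: whitespace-separated words stand in for tokenizer tokens, the HTML stripping is a simple regular expression, and the function names and sample texts are hypothetical.

```python
import hashlib
import html
import re
import unicodedata

TAG_RE = re.compile(r"<[^>]+>")
WS_RE = re.compile(r"\s+")


def normalize(text):
    """Unicode-normalize, strip HTML tags and entities, collapse whitespace."""
    text = unicodedata.normalize("NFKC", text)
    # Remove tags first, then decode entities such as &amp;.
    text = html.unescape(TAG_RE.sub(" ", text))
    return WS_RE.sub(" ", text).strip()


def clean_corpus(texts, min_tokens=4, max_tokens=3000):
    """Length-filter and deduplicate a list of raw texts.

    Word counts approximate token counts here; swap in your tokenizer
    for real thresholds.
    """
    seen = set()
    kept = []
    for raw in texts:
        text = normalize(raw)
        n_tokens = len(text.split())
        if n_tokens < min_tokens or n_tokens > max_tokens:
            continue
        # SHA256 of the normalized text identifies exact duplicates.
        digest = hashlib.sha256(text.encode("utf-8")).hexdigest()
        if digest in seen:
            continue
        seen.add(digest)
        kept.append(text)
    return kept


samples = [
    "<p>How do I reset my   router password?</p>",
    "How do I reset my router password?",  # duplicate after normalization
    "Too short",                           # fewer than 4 words
    "Fine-tuning adapts a general model to a domain.",
]
print(clean_corpus(samples))
```

Hashing after normalization matters: the first two samples differ as raw strings but collapse to the same text, so only one survives.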

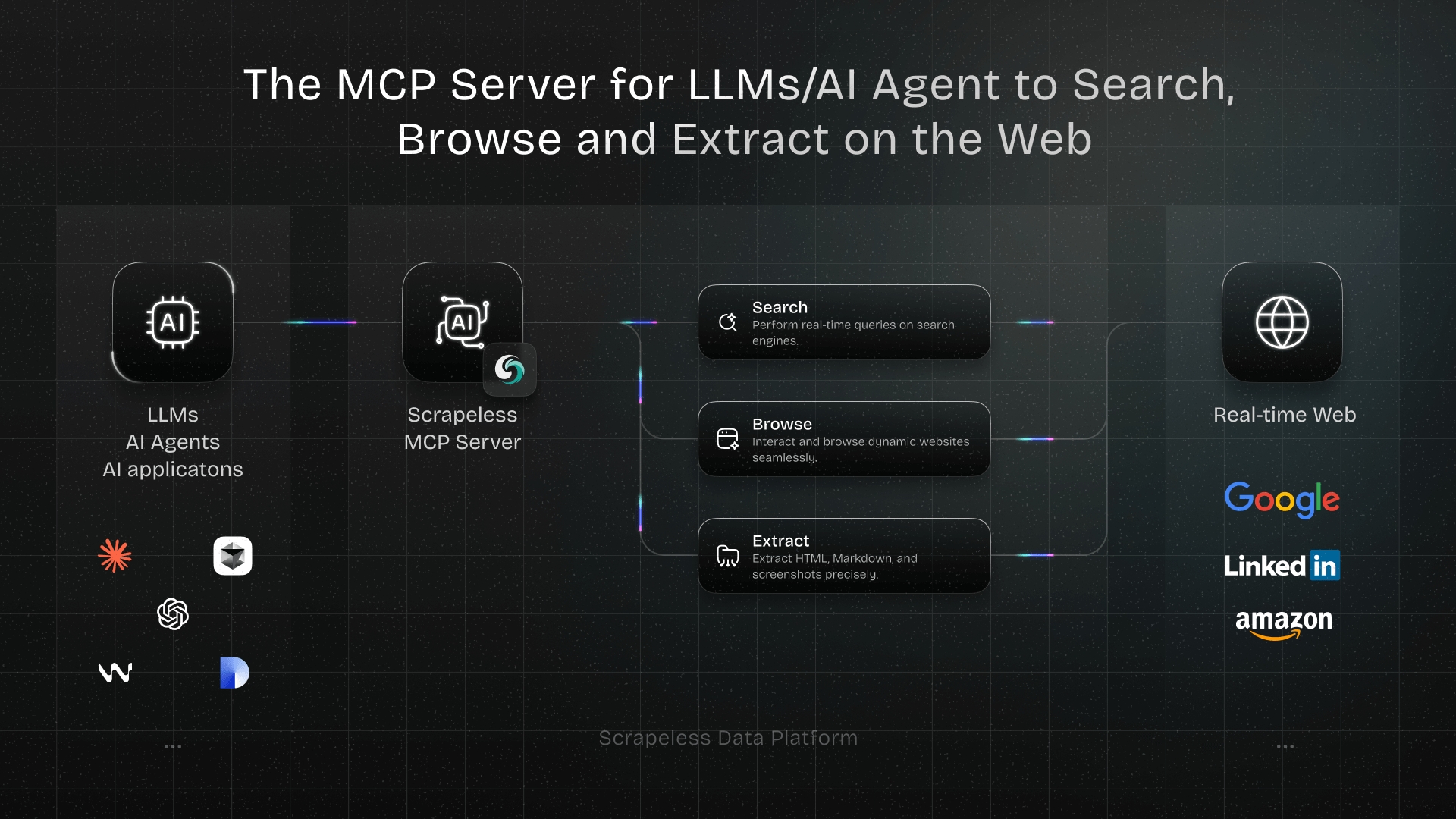

Scrapeless: A Powerful Tool for Acquiring High-Quality Data

In the process of fine-tuning Llama 4, one of the biggest challenges is often obtaining high-quality domain-specific data. Traditional web scraping methods can face issues such as anti-scraping mechanisms, complex data structures, and difficult data cleaning. This is where a powerful data scraping tool like Scrapeless becomes particularly important. Scrapeless can help users efficiently and accurately obtain high-quality web data, providing a solid data foundation for fine-tuning Llama 4.

Advantages of Scrapeless:

- High Efficiency: Scrapeless provides an automated data scraping process that can quickly extract the required information from a large number of web pages, significantly saving the time spent on manual data collection and organization.

- High Accuracy: It has the ability to intelligently parse the structure of web pages, accurately identifying and extracting target data, ensuring data integrity and accuracy, and reducing the workload of subsequent cleaning.

- Flexibility: Scrapeless supports scraping data from a variety of sources (such as news websites, blogs, forums, and e-commerce platforms) and can output data in multiple formats (such as JSON and CSV) to meet the specific needs of different Llama 4 fine-tuning projects.

- Ease of Use: Scrapeless typically provides a simple API interface or an intuitive user interface, making it easy for even non-professional data engineers to get started, greatly lowering the technical barrier to data acquisition.

- Anti-Scraping Handling: Scrapeless has built-in mechanisms for dealing with anti-scraping measures such as IP restrictions, CAPTCHAs, and dynamic content loading, which improves the stability and success rate of data scraping.

Application Scenarios:

With Scrapeless, you can easily scrape:

- Professional articles and research reports in a specific domain: to provide Llama 4 with the latest industry knowledge and professional terminology.

- Forum discussions and social media content: to capture users' real language habits, emotional expressions, and common questions, which helps the model learn a more natural conversational style.

- Product reviews and user feedback: to help Llama 4 understand users' opinions on products or services, improving its performance in customer service or sentiment analysis tasks.

- Q&A pairs from Q&A communities: to directly provide Llama 4 with high-quality question-and-answer data, enhancing its Q&A capabilities.
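Whatever tool produces the scraped data, it has to be converted into a training format before fine-tuning. The sketch below maps scraped Q&A records to Alpaca-style JSON Lines. The input keys (`question`, `answer`, `url`) and the sample records are hypothetical and do not represent the Scrapeless output schema; adapt them to whatever fields your export actually contains.

```python
import json


def to_alpaca(record):
    """Map one scraped Q&A record to an Alpaca-style example, or None if unusable.

    The 'question', 'answer', and 'url' keys are assumptions for illustration.
    """
    question = (record.get("question") or "").strip()
    answer = (record.get("answer") or "").strip()
    if not question or not answer:
        return None
    return {
        "instruction": question,
        "input": "",
        "output": answer,
        "source": record.get("url", ""),  # kept for license tracking
    }


def to_jsonl(records):
    """Serialize usable records as JSON Lines, one example per line."""
    examples = (to_alpaca(r) for r in records)
    return "\n".join(json.dumps(e, ensure_ascii=False) for e in examples if e)


scraped = [
    {"question": "What is LoRA?", "answer": "A low-rank adapter method.", "url": "https://example.com/q/1"},
    {"question": "Empty answer?", "answer": "  ", "url": "https://example.com/q/2"},
]
print(to_jsonl(scraped))
```

Keeping the source URL alongside each example makes the license tracking described earlier much easier when datasets are later merged.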

In summary, the data obtained through Scrapeless can give your Llama 4 fine-tuning project high-quality "fuel" from the very beginning, thereby improving the model's performance on your specific tasks. It is not just a scraping tool but a piece of data infrastructure for the fine-tuning project, capable of providing a continuous stream of high-quality training data that meets specific needs.

Practical Steps: A Detailed Guide on How to Fine-tune Llama 4

Now that we have covered the theoretical aspects and data preparation, let's dive into the practical steps of how to fine-tune Llama 4. This section will provide a detailed guide, focusing on a common and efficient approach using LoRA/QLoRA with popular tools like Unsloth and Hugging Face Transformers. We will use Google Colab as an example environment, which is accessible for many users.

1. Environment Setup

First, you need to set up your development environment. If you are using Google Colab, ensure you have access to a GPU runtime.

- Enable GPU: In Google Colab, go to Runtime -> Change runtime type and select GPU as the hardware accelerator.

- Install Dependencies: Install the necessary libraries. Unsloth is highly recommended for its efficiency in fine-tuning Llama 4 with LoRA/QLoRA, offering significant speedups and VRAM reductions.

bash

!pip install -qU unsloth[flash-attn] bitsandbytes==0.43.0

- unsloth: Provides optimized implementations for LoRA/QLoRA fine-tuning.

- flash-attn: A fast attention implementation that further speeds up training.

- bitsandbytes: Essential for 4-bit quantization (QLoRA).

2. Load the Base Llama 4 Model

After setting up the environment, the next step is to load the pre-trained Llama 4 model. You will need to have accepted Meta's license on Hugging Face to access the models.

python

from unsloth import FastLanguageModel

model_name = "meta-llama/Llama-4-Scout-17B-16E-Instruct"  # Or another Llama 4 variant

# from_pretrained returns the model together with its tokenizer.
model, tokenizer = FastLanguageModel.from_pretrained(
    model_name=model_name,
    max_seq_length=2048,  # Adjust based on your data and GPU memory
    dtype=None,           # Auto-detects based on GPU capabilities
    load_in_4bit=True,    # Enables QLoRA; 4-bit weights take roughly a quarter of the memory of 16-bit weights
)

- model_name: Specify the exact Llama 4 model you wish to fine-tune. Llama-4-Scout-17B-16E-Instruct is a good starting point.

- max_seq_length: Defines the maximum sequence length for your training data. Longer sequences require more VRAM. Adjust this based on your dataset characteristics and GPU memory.

- load_in_4bit=True: This crucial parameter enables 4-bit quantization, allowing you to fine-tune Llama 4 with significantly less VRAM. Actual usage depends on the variant's total parameter count, because every expert is loaded, so check the figure against your GPU before starting.

3. Attach LoRA Adapters

Once the base model is loaded, you need to attach the LoRA adapters. This tells Unsloth which parts of the model to make trainable.

python

model = FastLanguageModel.get_peft_model(
    model,
    r=16,             # LoRA rank. Higher rank means more parameters, potentially better performance, but more VRAM.
    lora_alpha=32,    # LoRA scaling factor (adapter updates are scaled by lora_alpha / r)
    target_modules=["q_proj", "k_proj", "v_proj", "o_proj",
                    "gate_proj", "up_proj", "down_proj"],  # Common target modules for Llama models
    random_state=42,  # For reproducibility
)

- r: The LoRA rank. A common value is 16 or 32. Experiment with this parameter to find the optimal balance between performance and resource usage when you fine-tune Llama 4.

- lora_alpha: A scaling factor for the LoRA updates.

- target_modules: Specifies which linear layers of the model will have LoRA adapters attached. For Llama models, q_proj, k_proj, v_proj, o_proj, gate_proj, up_proj, and down_proj are typical choices.

4. Data Loading and Training

With the model and adapters ready, you can now load your prepared dataset and begin the training process. The Hugging Face datasets library is commonly used for this.

python

from datasets import load_dataset
from trl import SFTTrainer  # SFTTrainer is provided by the TRL library, not by Unsloth
from transformers import TrainingArguments

# Load your dataset. Replace "tatsu-lab/alpaca" with your own dataset path or name.
# Ensure your dataset is in a format compatible with SFTTrainer (e.g., Alpaca format).
# For demonstration, we use a small slice of the Alpaca dataset.
# Pass token=True only when loading a private or gated dataset.
data = load_dataset("tatsu-lab/alpaca", split="train[:1%]")

# Define training arguments
training_args = TrainingArguments(
    output_dir="./lora_model",       # Directory to save checkpoints
    per_device_train_batch_size=1,   # Batch size per GPU
    gradient_accumulation_steps=16,  # Accumulate gradients over multiple steps
    warmup_steps=5,                  # Number of warmup steps for learning rate scheduler
    num_train_epochs=1,              # Number of training epochs
    learning_rate=2e-4,              # Learning rate
    fp16=True,                       # Mixed precision; on GPUs with bfloat16 support, bf16=True is the usual alternative
    logging_steps=1,                 # Log every N steps
    optim="adamw_8bit",              # Optimizer
    weight_decay=0.01,               # Weight decay
    lr_scheduler_type="cosine",      # Learning rate scheduler type
    seed=42,                         # Random seed for reproducibility
)

# Initialize the SFTTrainer.
# Note: recent TRL releases move dataset_text_field and max_seq_length into SFTConfig;
# this call matches the older signature, so pin or adapt to your installed version.
trainer = SFTTrainer(
    model=model,
    tokenizer=tokenizer,
    train_dataset=data,
    dataset_text_field="text",  # Name of the column containing text in your dataset
    max_seq_length=2048,        # Must match the max_seq_length used when loading the model
    args=training_args,
)

# Start training
trainer.train()  # This process can take a while depending on your data size and GPU.

# Save the fine-tuned model (LoRA adapters)
trainer.save_model("l4-scout-lora")

- Dataset Format: Ensure your dataset is formatted correctly. For instruction fine-tuning, the Alpaca format ({"instruction": "...", "input": "...", "output": "..."}) is common, which SFTTrainer can handle if you specify dataset_text_field correctly or use a formatting function.

- TrainingArguments: Configure various training parameters such as batch size, learning rate, number of epochs, and optimizer. gradient_accumulation_steps allows you to simulate larger batch sizes with limited VRAM. fp16=True enables mixed-precision training, which is crucial for fine-tuning Llama 4 efficiently.

- trainer.train(): This command initiates the fine-tuning process. Monitor your GPU usage and loss during training.
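If your dataset has separate instruction, input, and output columns but no ready-made text column, a formatting function can build one. The sketch below uses the prompt wording from the original Alpaca project; the function name `format_example` and the `eos_token` parameter are illustrative choices, and an instruct-tuned checkpoint may work better with its own chat template instead.

```python
PROMPT_WITH_INPUT = (
    "Below is an instruction that describes a task, paired with an input that "
    "provides further context. Write a response that appropriately completes the request.\n\n"
    "### Instruction:\n{instruction}\n\n### Input:\n{input}\n\n### Response:\n{output}"
)
PROMPT_NO_INPUT = (
    "Below is an instruction that describes a task. "
    "Write a response that appropriately completes the request.\n\n"
    "### Instruction:\n{instruction}\n\n### Response:\n{output}"
)


def format_example(example, eos_token=""):
    """Render one Alpaca-style record as a single training string.

    Records with an empty or missing 'input' use the shorter template.
    Appending the tokenizer's EOS token teaches the model where to stop.
    """
    template = PROMPT_WITH_INPUT if example.get("input") else PROMPT_NO_INPUT
    return template.format(**example) + eos_token


record = {"instruction": "Add the numbers.", "input": "1 2", "output": "3"}
print(format_example(record, eos_token="</s>"))
```

With the datasets library, something like `data.map(lambda ex: {"text": format_example(ex, tokenizer.eos_token)})` would then produce the text column that dataset_text_field points at.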

5. Merge and Test the Fine-tuned Model

After training, the LoRA adapters need to be merged back into the base model to create a single, deployable model. Then, you can test its performance.

python

from transformers import pipeline

# Merge LoRA adapters with the base model
merged_model = model.merge_and_unload()

# Alternatively, if you saved the adapters separately and want to load them later:
# from peft import PeftModel, PeftConfig
# peft_model_id = "./l4-scout-lora"
# config = PeftConfig.from_pretrained(peft_model_id)
# model, tokenizer = FastLanguageModel.from_pretrained(config.base_model_name_or_path, load_in_4bit=True)
# model = PeftModel.from_pretrained(model, peft_model_id)
# merged_model = model.merge_and_unload()

# Test the fine-tuned model.
# The result is named `generator` so it does not shadow the imported pipeline() function.
generator = pipeline("text-generation", model=merged_model, tokenizer=tokenizer)

# Example inference
input_text = "Explain backpropagation in two sentences."
result = generator(input_text, max_new_tokens=120, do_sample=True, temperature=0.7)
print(result[0]["generated_text"])

- merge_and_unload(): This PEFT method merges the LoRA adapters into the base model and removes the PEFT (Parameter-Efficient Fine-Tuning) wrapper, making the model a standard Hugging Face model that can be saved and deployed.

- Inference: Use the pipeline function from transformers to easily perform inference with your newly fine-tuned Llama 4 model. Experiment with max_new_tokens, do_sample, and temperature to control the generation output.
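To build some intuition for what the temperature setting does during sampling, the sketch below applies temperature scaling to a small set of made-up logits. It illustrates the general mechanism only and is not the transformers implementation; the logits and function names are hypothetical.

```python
import math
import random


def softmax_with_temperature(logits, temperature):
    """Convert logits to probabilities after dividing by the temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_token(logits, temperature, rng):
    """Draw one token index from the temperature-scaled distribution."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(range(len(logits)), weights=probs, k=1)[0]


logits = [2.0, 1.0, 0.1]  # hypothetical scores for three candidate tokens
for t in (0.2, 0.7, 1.5):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(p, 3) for p in probs])

print(sample_token(logits, 0.7, random.Random(0)))
```

Lower temperatures concentrate probability on the top-scoring token, giving more deterministic output; higher temperatures flatten the distribution and increase variety.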

These detailed steps provide a clear roadmap on how to fine-tune Llama 4 using efficient methods. Remember that successful fine-tuning often involves iterative experimentation with data, hyperparameters, and evaluation metrics.
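When experimenting with hyperparameters, it helps to see how the batch size, gradient accumulation, warmup, and cosine schedule settings interact. The sketch below reproduces the usual linear-warmup-then-cosine-decay shape in plain Python. It is an approximation for intuition rather than the exact scheduler code in transformers, and the dataset size of 520 examples is a hypothetical figure.

```python
import math


def lr_at_step(step, base_lr, warmup_steps, total_steps):
    """Linear warmup from 0 to base_lr, then cosine decay to 0."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return base_lr * 0.5 * (1.0 + math.cos(math.pi * min(1.0, progress)))


per_device_batch = 1
grad_accum = 16
# Examples consumed per optimizer step on a single GPU.
effective_batch = per_device_batch * grad_accum

num_examples = 520  # hypothetical dataset size
total_steps = math.ceil(num_examples / effective_batch)

for step in (0, 5, total_steps // 2, total_steps):
    print(step, f"{lr_at_step(step, 2e-4, 5, total_steps):.2e}")
```

With only a few dozen optimizer steps per epoch, five warmup steps are already a noticeable share of training, which is worth keeping in mind when changing the dataset size.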

Experiment Tracking and Evaluation: Ensuring Fine-tuning Success

Once you have a fine-tuned model, the fine-tuning process is not yet complete. A critical, and often overlooked, phase is rigorous evaluation and experiment tracking. This ensures that your fine-tuned model not only performs well on your specific tasks but also maintains its quality, safety, and reliability in a production environment. A multi-layered evaluation protocol is essential.

Evaluation Protocol

- Automatic Benchmarks: Run the lm-eval-harness suite on standard tasks to quantify the gains over the base model. Key benchmarks include:

  - MMLU (Massive Multitask Language Understanding): To assess knowledge recall.

  - GSM8K (Grade School Math 8K): To evaluate mathematical reasoning.

  - TruthfulQA: To measure the model's resistance to generating hallucinations.

  Track metrics like exact match for closed-form questions and BERTScore for free-form outputs.

- Human Review: Automated benchmarks are useful, but they don't always capture the nuances of human preferences. Draw a sample of approximately 200 prompts from your live production traffic and have two independent annotators rate each response on a 1-5 Likert scale for:

  - Helpfulness: Does the response effectively address the user's query?

  - Correctness: Is the information provided accurate?

  - Tone Consistency: Does the response align with your brand's voice?

  Use the overlap to compute inter-annotator agreement and identify edge-case failures.

- Canary Tokens: Insert unique canary strings into a small fraction (e.g., 0.1%) of your fine-tuning examples. Deploy the model to a staging environment and monitor logs for any unexpected reproduction of these strings. This can signal unsafe memorization or data leakage.

- Continuous Monitoring: After deployment, embed lightweight telemetry to log prompt inputs, token distributions, and latency percentiles. Set up alerts for any drift in quality metrics or usage spikes that may reveal new failure modes.
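For the human review step, inter-annotator agreement is commonly summarized with Cohen's kappa, which corrects the raw agreement rate for the agreement expected by chance. The sketch below implements the unweighted form in plain Python; the ratings are made-up examples, and for ordinal Likert scores a weighted variant may be a better fit.

```python
from collections import Counter


def cohens_kappa(ratings_a, ratings_b):
    """Unweighted Cohen's kappa for two annotators rating the same items."""
    if len(ratings_a) != len(ratings_b) or not ratings_a:
        raise ValueError("both annotators must rate the same non-empty set of items")
    n = len(ratings_a)
    # Observed agreement: share of items with identical ratings.
    observed = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n
    # Chance agreement: product of each annotator's label frequencies.
    counts_a = Counter(ratings_a)
    counts_b = Counter(ratings_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical 1-5 helpfulness ratings for eight responses.
annotator_1 = [5, 4, 4, 2, 1, 3, 5, 4]
annotator_2 = [5, 4, 3, 2, 1, 3, 4, 4]
print(round(cohens_kappa(annotator_1, annotator_2), 3))
```

Items where the two ratings disagree are also the natural starting point for the edge-case review mentioned above.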

Deployment Checklist

Once your model has passed a rigorous evaluation, the next step is to operationalize it with a structured deployment checklist that covers performance, safety, and maintainability.

- Quantization: Export your merged weights to a 4-bit integer format (int4) using a method like GPTQ. To avoid quality regressions, confirm that the downstream perplexity increases by less than 2% compared to the full-precision model.

- Safety: Wrap the inference endpoint with a safety filter, such as Meta's Llama Guard or an open-source safe-completion library. Include prompt sanitization and rejection policies for disallowed content.

- Monitoring: Instrument your service to log incoming prompts, the top-k token distributions, and key latency percentiles (e.g., P95). Set up dashboards and alerts for atypical throughput, error rates, or drift in response characteristics.

- Rollback: Keep the previous adapter and merged weights in object storage. Architect your serving layer (e.g., with vLLM or a custom FastAPI) so that swapping adapters is a two-line configuration change, enabling instant rollback if a deployment misbehaves.
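The perplexity check in the quantization item can be automated as a simple gate. The sketch below assumes you have already collected per-token negative log-likelihoods (in nats) from both models on the same evaluation text; the numbers and function names are hypothetical.

```python
import math


def perplexity(token_nlls):
    """Perplexity is exp of the mean negative log-likelihood per token."""
    return math.exp(sum(token_nlls) / len(token_nlls))


def relative_increase(baseline_ppl, quantized_ppl):
    """Fractional change in perplexity relative to the baseline."""
    return (quantized_ppl - baseline_ppl) / baseline_ppl


def passes_quantization_gate(baseline_nlls, quantized_nlls, max_increase=0.02):
    """True when the quantized model's perplexity rises by less than max_increase."""
    increase = relative_increase(perplexity(baseline_nlls), perplexity(quantized_nlls))
    return increase < max_increase


# Hypothetical per-token losses from the same evaluation text.
baseline = [2.10, 1.95, 2.30, 2.05]
quantized = [2.11, 1.97, 2.31, 2.06]

print(f"{relative_increase(perplexity(baseline), perplexity(quantized)):.2%}")
print(passes_quantization_gate(baseline, quantized))
```

Running this gate in CI before promoting a quantized build keeps the 2% threshold from being checked only by hand.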

Evaluation is a crucial step in verifying whether your approach to how to fine-tune Llama 4 has been successful. It provides the necessary feedback loop for iterative improvement and ensures that your model is ready for real-world applications.

Conclusion: Key Takeaways for Fine-tuning Llama 4 and the Value of Scrapeless

Fine-tuning Llama 4 is a powerful technique for transforming a general-purpose large language model into a domain-specific expert. By following a structured approach, you can create a model that speaks in your brand's voice, understands your specific domain, and performs tasks with high accuracy. The key to success lies in a combination of high-quality data, the right fine-tuning strategy (such as LoRA or QLoRA), and a rigorous evaluation and deployment process. Mastering how to fine-tune Llama 4 is a valuable skill for any AI developer or product manager looking to leverage the full potential of open-source LLMs.

Throughout this guide, we have emphasized the critical role of data quality in the success of any fine-tuning project. This is where a tool like Scrapeless becomes invaluable. Scrapeless helps you acquire high-quality, relevant data from the web, which is the fuel for your fine-tuning process. By providing a reliable and efficient way to gather data, Scrapeless ensures that your fine-tuning Llama 4 efforts are built on a solid foundation. Its ability to handle anti-scraping mechanisms, parse complex websites, and deliver clean, structured data makes it an essential tool in the modern AI development toolkit. Whether you are building a customer service chatbot, a code generation assistant, or a research tool, leveraging Scrapeless to gather your training data will give you a significant advantage.

By understanding how to fine-tune Llama 4 and utilizing powerful tools like Scrapeless, you can unlock new possibilities in AI and build truly intelligent applications that are tailored to your specific needs.

At Scrapeless, we only access publicly available data while strictly complying with applicable laws, regulations, and website privacy policies. The content in this blog is for demonstration purposes only and does not involve any illegal or infringing activities. We make no guarantees and disclaim all liability for the use of information from this blog or third-party links. Before engaging in any scraping activities, consult your legal advisor and review the target website's terms of service or obtain the necessary permissions.