What is the Requests Library Used for in Python?

Expert Network Defense Engineer

Key Takeaways: The Python Requests library simplifies HTTP communication, making web interactions intuitive and efficient for developers.

It is essential for tasks ranging from basic API integrations to complex web scraping, offering a user-friendly interface over Python's built-in modules. Requests streamlines sending various HTTP requests, handling responses, and managing advanced features like sessions and authentication, significantly accelerating development workflows.

Introduction

The Requests library is the de facto standard for making HTTP requests in Python, providing a human-friendly approach to interacting with web services. This article explores the diverse applications of the Requests library, demonstrating its critical role in modern web development and data acquisition. We will delve into its core functionalities, compare it with other HTTP clients, and illustrate its practical uses through real-world examples. Whether you are building web applications, automating tasks, or extracting data, understanding Requests is fundamental for efficient and reliable web interactions.

The Core Functionality of Requests: Simplifying HTTP

Requests simplifies complex HTTP operations into straightforward function calls, abstracting away the intricacies of network communication. It allows developers to send various types of HTTP requests, including GET, POST, PUT, and DELETE, with minimal code. The library handles URL encoding automatically and, through Session objects, reuses pooled connections and persists cookies, tasks that are often cumbersome with lower-level libraries. This ease of use makes Requests an indispensable tool for anyone working with web APIs or web content.

Sending Basic HTTP Requests

Sending a basic GET request to retrieve data from a web server is remarkably simple with Requests. The requests.get() method fetches content from a specified URL, returning a Response object that encapsulates the server's reply. This object provides convenient access to the response's status code, headers, and body content, enabling quick data processing. For instance, fetching data from a public API or a simple webpage requires just a few lines of code, demonstrating the library's efficiency.

```python
import requests

# Fetch the public GitHub events feed
response = requests.get('https://api.github.com/events')

# Numeric HTTP status code of the reply
print(response.status_code)

# Body parsed from JSON into Python objects
print(response.json())
```

Similarly, sending data to a server using a POST request is equally intuitive. The requests.post() method allows you to send form data, JSON payloads, or files, making it ideal for submitting forms or interacting with RESTful APIs that require data submission. This straightforward approach reduces boilerplate code and improves readability, allowing developers to focus on the logic rather than the mechanics of HTTP.
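As a minimal sketch of the difference between form data and a JSON payload, the example below prepares two POST requests without sending them, so the encoded bodies can be inspected. The httpbin.org URL and the field names are placeholder assumptions, not part of the original example.

```python
import requests

# Placeholder endpoint and payload, chosen only for illustration.
URL = "https://httpbin.org/post"
payload = {"name": "example", "lang": "python"}

# data= sends the dictionary as URL-encoded form fields.
form_request = requests.Request("POST", URL, data=payload).prepare()

# json= serializes the dictionary and sets the JSON content type.
json_request = requests.Request("POST", URL, json=payload).prepare()

print(form_request.headers["Content-Type"])
print(form_request.body)
print(json_request.headers["Content-Type"])
print(json_request.body)
```

In everyday code the same arguments go straight to requests.post(URL, data=payload) or requests.post(URL, json=payload), which build and send the request in one call.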

Handling Responses and Errors

Requests provides robust mechanisms for handling server responses and potential errors, ensuring applications can gracefully manage various outcomes. The Response object offers status_code to check for success or failure, text for the decoded body, and json() for parsing JSON responses. Requests does not raise an exception for an error status code on its own; calling response.raise_for_status() raises HTTPError for 4xx client errors and 5xx server errors, which simplifies error propagation and management within applications. Network-level problems such as connection failures and timeouts raise other subclasses of requests.exceptions.RequestException. This integrated error handling promotes more resilient and reliable code.
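The pattern described above might look like the following sketch. The helper names summarize and fetch are hypothetical, and the 10-second timeout is an arbitrary choice for illustration.

```python
import requests


def summarize(response):
    """Return a short description of a Response, treating 4xx/5xx as errors."""
    try:
        response.raise_for_status()  # raises HTTPError for 4xx and 5xx codes
    except requests.exceptions.HTTPError as exc:
        return f"HTTP error {exc.response.status_code}"
    return f"OK {response.status_code}"


def fetch(url):
    """Send a GET request and summarize the outcome (performs network I/O)."""
    try:
        response = requests.get(url, timeout=10)
    except requests.exceptions.RequestException as exc:
        # Connection failures, timeouts, invalid URLs, and similar problems.
        return f"Request failed: {exc}"
    return summarize(response)
```

Keeping the status check in its own function separates "the server answered with an error" from "the request never completed", which usually call for different handling.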

Requests vs. urllib: A Comparison Summary

Requests is widely preferred over Python's built-in urllib module due to its superior ease of use, modern design, and extensive feature set. While urllib provides fundamental HTTP capabilities, it often requires more verbose code and manual handling of many aspects that Requests automates. The table below highlights key differences, illustrating why Requests has become the go-to library for most Python developers interacting with the web.

| Feature | Requests | urllib |
|---|---|---|
| Ease of Use | Highly intuitive, human-friendly API | More complex, requires more boilerplate |
| HTTP Methods | Simple functions (.get(), .post()) | Requires urllib.request.urlopen() with a Request object |
| JSON Handling | Built-in .json() method and json= argument | Manual encoding and parsing with the json module |
| Error Handling | Opt-in raise_for_status() for HTTP errors | urlopen() raises urllib.error.HTTPError for 4xx/5xx responses |
| Sessions | requests.Session() for persistent connections | Manual cookie and header management |
| Redirects | Automatic, with response.history | Automatic, but customization requires handler classes |
| Authentication | Built-in auth parameter | Handler classes or manual header construction |
| Connection Pooling | Automatic within a Session | Not provided |
| SSL Verification | Automatic (configurable via verify) | Automatic by default (configurable via an ssl context) |

Requests' design philosophy prioritizes developer experience, making common tasks simple and complex tasks possible. For example, managing cookies and sessions is effortless with requests.Session(), which persists parameters across requests, crucial for maintaining state in web interactions. This contrasts sharply with urllib, where such features demand significant manual effort and a deeper understanding of HTTP protocol details.
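To make the contrast concrete, here is a rough sketch that builds the same JSON POST with both libraries without sending either one. The URL and payload are placeholder assumptions.

```python
import json
import urllib.request

import requests

# Placeholder endpoint and payload, chosen only for illustration.
URL = "https://httpbin.org/post"
PAYLOAD = {"query": "requests"}

# urllib: the body must be serialized and the header set by hand.
urllib_request = urllib.request.Request(
    URL,
    data=json.dumps(PAYLOAD).encode("utf-8"),
    headers={"Content-Type": "application/json"},
    method="POST",
)

# Requests: json= performs the serialization and sets the header.
requests_request = requests.Request("POST", URL, json=PAYLOAD).prepare()

print(urllib_request.get_method(), urllib_request.data)
print(requests_request.method, requests_request.body)
```

Sending would then be urllib.request.urlopen(urllib_request) on one side and requests.post(URL, json=PAYLOAD) on the other.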

Practical Applications and Case Studies

The versatility of the Requests library extends across numerous domains, from automating routine web tasks to building sophisticated data pipelines. Its robust features make it suitable for a wide array of applications, empowering developers to interact with web resources effectively.

Case Study 1: Interacting with Public APIs

Requests is the ideal tool for interacting with public APIs, such as those provided by social media platforms, weather services, or financial data providers. Developers can easily send authenticated requests, pass parameters, and parse JSON responses, integrating external services into their applications. For instance, fetching real-time stock data from a financial API or posting updates to a social media platform becomes a straightforward process. This capability is fundamental for building dynamic web applications and data-driven services.
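A typical API call combines query parameters, headers, and JSON parsing. The sketch below assumes a hypothetical endpoint (api.example.com), hypothetical parameter names, and a placeholder bearer token; a real API will define its own.

```python
import requests

# Hypothetical API endpoint, query parameters, and token.
API_URL = "https://api.example.com/v1/quotes"
params = {"symbol": "ACME", "interval": "1d"}
headers = {"Authorization": "Bearer YOUR_TOKEN", "Accept": "application/json"}


def build_quote_request():
    """Prepare the GET request so the final URL and headers can be inspected."""
    return requests.Request("GET", API_URL, params=params, headers=headers).prepare()


def fetch_quote():
    """Send the request and return the decoded JSON body (performs network I/O)."""
    response = requests.get(API_URL, params=params, headers=headers, timeout=10)
    response.raise_for_status()
    return response.json()


prepared = build_quote_request()
print(prepared.url)
```

Passing params as a dictionary lets Requests handle the URL encoding, so the query string never has to be assembled by hand.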

According to the Postman State of the API Report 2023, 92% of developers use APIs daily, highlighting the pervasive need for efficient HTTP clients like Requests [1]. Its simplicity in handling API requests contributes significantly to this widespread adoption.

Case Study 2: Web Scraping and Data Extraction

Web scraping, the automated extraction of data from websites, is another primary use case for Requests. By sending GET requests to web pages, developers can retrieve HTML content, which can then be parsed using libraries like Beautiful Soup or LXML to extract specific information. This is invaluable for market research, content aggregation, and competitive analysis. Requests handles the underlying HTTP communication, allowing scrapers to focus on data parsing logic.
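The fetch-then-parse workflow can be sketched as follows. To stay dependency-free, this example swaps in the standard library's html.parser in place of Beautiful Soup or LXML; the LinkCollector class and the sample HTML are illustrative assumptions.

```python
from html.parser import HTMLParser

import requests


class LinkCollector(HTMLParser):
    """Collect the href value of every <a> tag in a document."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def extract_links(html):
    """Return all link targets found in an HTML string."""
    collector = LinkCollector()
    collector.feed(html)
    return collector.links


def scrape_links(url):
    """Download a page and return its links (performs network I/O)."""
    response = requests.get(url, timeout=10)
    response.raise_for_status()
    return extract_links(response.text)


sample = '<p><a href="/docs">Docs</a> and <a href="https://example.com">Home</a></p>'
print(extract_links(sample))
```

Requests is responsible only for scrape_links retrieving the page; everything in extract_links is parsing logic that could be replaced by any HTML parser.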

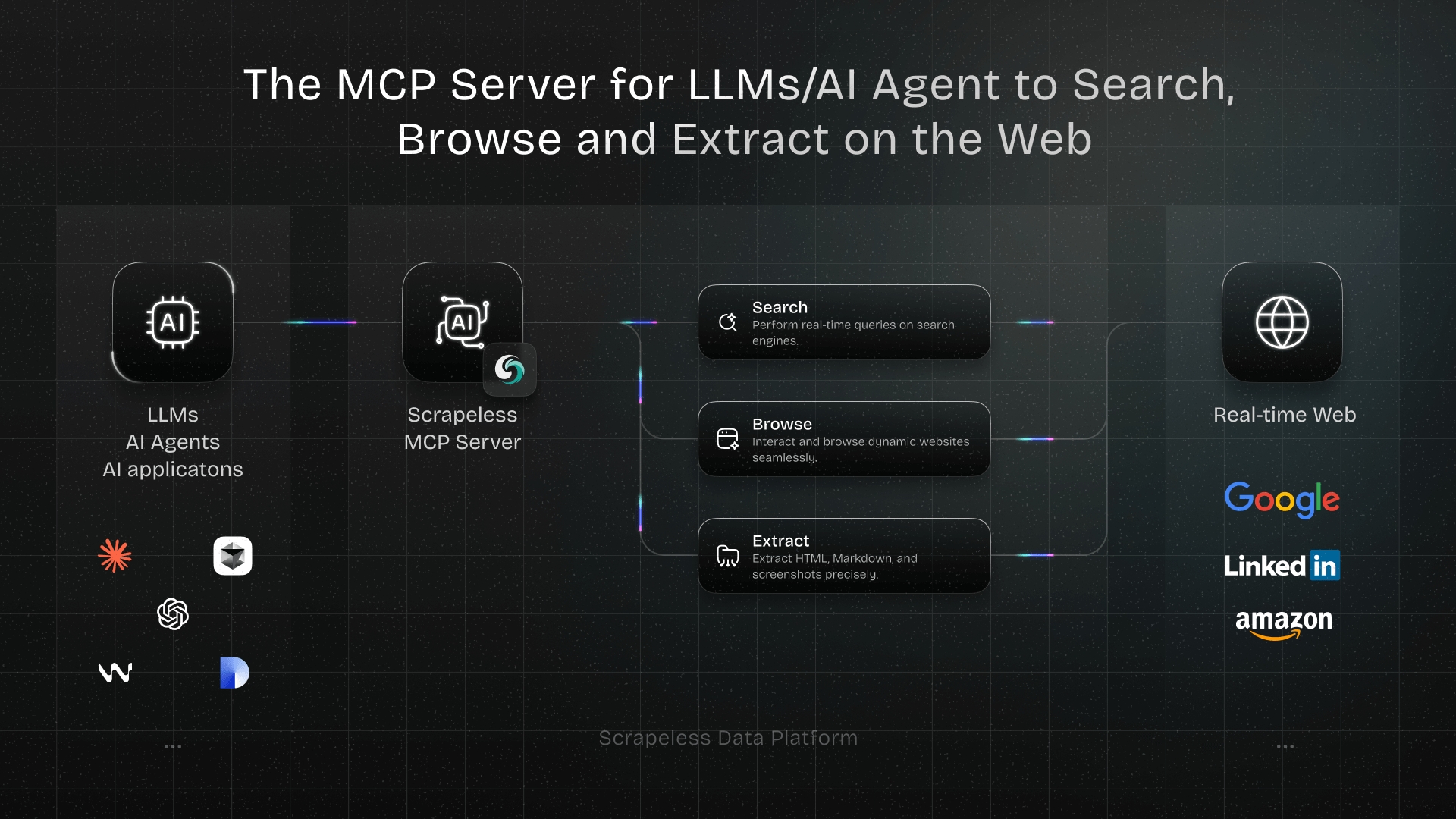

However, web scraping often encounters anti-bot measures like Cloudflare and DataDome. These systems detect and block automated requests, making data extraction challenging. This is where specialized tools become necessary. Scrapeless offers a solution to bypass these sophisticated anti-bot technologies, ensuring reliable data access.

Case Study 3: Automating Web Interactions and Testing

Requests is also extensively used for automating web interactions, such as logging into websites, submitting forms, or simulating user behavior for testing purposes. By managing sessions and cookies, Requests can maintain state across multiple requests, mimicking a browser session.

This is crucial for automated testing of web applications, where simulating user journeys and verifying server responses is essential for quality assurance. For example, a QA engineer might use Requests to automate the login process and then navigate through different pages to check for expected content or functionality.
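A login-then-browse check might be sketched like this. The /login and /dashboard paths and the form field names are hypothetical; a real application defines its own, and many also require a CSRF token that this sketch does not handle.

```python
import requests


def login_and_fetch(base_url, username, password):
    """Log in, then request a protected page using the same session."""
    with requests.Session() as session:
        # The session stores any cookies set by the login response.
        login = session.post(
            f"{base_url}/login",
            data={"username": username, "password": password},
            timeout=10,
        )
        login.raise_for_status()

        # Stored cookies are sent automatically with this follow-up request.
        page = session.get(f"{base_url}/dashboard", timeout=10)
        page.raise_for_status()
        return page.text
```

A test could then assert that the returned HTML contains the content a logged-in user is expected to see.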

Case Study 4: Downloading Files and Media

The library excels at downloading files, images, and other media from the web. Passing stream=True and iterating over response.iter_content() lets Requests write a large download to disk in chunks instead of holding the whole body in memory. This is particularly useful for applications that need to retrieve assets, process large datasets, or back up online content. For example, downloading a large dataset from a public repository or an image from a content delivery network can be done with ease.
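A streamed download can be sketched as below. The function names, the 8192-byte chunk size, and the 30-second timeout are assumptions chosen for illustration.

```python
import requests


def save_stream(response, path, chunk_size=8192):
    """Write a streamed response body to disk and return the bytes written."""
    written = 0
    with open(path, "wb") as handle:
        for chunk in response.iter_content(chunk_size=chunk_size):
            handle.write(chunk)
            written += len(chunk)
    return written


def download(url, path):
    """Download url to path without loading the whole body into memory."""
    # stream=True defers fetching the body until iter_content() is consumed.
    with requests.get(url, stream=True, timeout=30) as response:
        response.raise_for_status()
        return save_stream(response, path)
```

Using the response as a context manager releases the connection even if writing to disk fails partway through.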

Advanced Features for Robust HTTP Operations

Requests provides a suite of advanced features that enable developers to build more robust, secure, and efficient HTTP clients. These features address common challenges in web communication, offering fine-grained control over requests and responses.

Proxies and Sessions

Using proxies with Requests allows routing requests through intermediary servers, which is vital for privacy, bypassing geo-restrictions, or distributing request load in web scraping operations. Requests makes proxy configuration straightforward through the proxies argument, supporting HTTP and HTTPS proxies directly and SOCKS proxies through the optional requests[socks] extra.

Sessions, managed by requests.Session(), enable persistence of parameters like cookies and headers across multiple requests, simulating a continuous browsing experience. This is essential for maintaining login states or managing complex multi-step interactions with web services.
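The sketch below configures a session with a default header and a proxy, then prepares a request to show that the session-level settings are merged in. The proxy address, URL, and User-Agent string are hypothetical placeholders.

```python
import requests

session = requests.Session()

# Headers set on the session are merged into every request it prepares.
session.headers.update({"User-Agent": "example-client/1.0"})

# Hypothetical proxy address; replace with a proxy you are allowed to use.
session.proxies.update({
    "http": "http://proxy.example.com:8080",
    "https": "http://proxy.example.com:8080",
})

# Prepare a request through the session to inspect the merged headers.
prepared = session.prepare_request(
    requests.Request(
        "GET",
        "https://example.com/data",
        headers={"Accept": "application/json"},
    )
)
print(prepared.headers["User-Agent"])
print(prepared.headers["Accept"])
```

Calls such as session.get() go through the same merging step, so per-request headers only need to cover what differs from the session defaults.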

Authentication and SSL Verification

Requests simplifies authentication: Basic and Digest are built in through the auth parameter, and OAuth is available via the companion requests-oauthlib package, allowing secure interaction with protected resources. It also verifies SSL/TLS certificates by default, ensuring secure communication over HTTPS.

This built-in security measure helps prevent man-in-the-middle attacks and ensures data integrity. Developers can also configure custom SSL certificates or disable verification for specific use cases, though the latter is generally not recommended for production environments.
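As a small illustration, the sketch below prepares a request with Basic authentication to show the header Requests generates, and defines a helper that verifies the server against a custom CA bundle. The URL, credentials, and fetch_with_custom_ca name are hypothetical.

```python
import requests
from requests.auth import HTTPBasicAuth

# Hypothetical endpoint and credentials.
URL = "https://example.com/protected"

# Passing a (user, password) tuple is shorthand for HTTPBasicAuth.
prepared = requests.Request("GET", URL, auth=("user", "pass")).prepare()
print(prepared.headers["Authorization"])


def fetch_with_custom_ca(ca_bundle_path):
    """Verify the server certificate against a specific CA bundle (network I/O)."""
    return requests.get(
        URL,
        auth=HTTPBasicAuth("user", "pass"),
        verify=ca_bundle_path,  # path to a PEM file; the default is verify=True
        timeout=10,
    )
```

Basic authentication only encodes the credentials, it does not encrypt them, which is one reason certificate verification over HTTPS matters.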

Timeouts and Retries

Configuring timeouts prevents requests from hanging indefinitely, improving application responsiveness and resource management. Requests applies no timeout unless one is given, so pass the timeout argument explicitly, either as a single number or as a (connect, read) tuple that sets separate limits for connecting to the server and receiving data. For unreliable network conditions or transient server issues, implementing retry mechanisms is crucial. Requests does not retry failed requests by default, but retries can be enabled by mounting an HTTPAdapter configured with urllib3's Retry class on a Session, or implemented with custom retry logic, enhancing the resilience of HTTP operations.
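One common way to add retries is to mount an HTTPAdapter that carries a urllib3 Retry policy on a Session. The sketch below takes that approach; the retry count, backoff factor, status codes, and timeout values are illustrative assumptions to tune per service.

```python
import requests
from requests.adapters import HTTPAdapter
from urllib3.util.retry import Retry


def make_retrying_session(total=3, backoff_factor=0.5):
    """Return a Session that retries failed connections and selected statuses."""
    retry = Retry(
        total=total,
        backoff_factor=backoff_factor,  # delay grows between attempts
        status_forcelist=[429, 500, 502, 503, 504],  # responses worth retrying
    )
    adapter = HTTPAdapter(max_retries=retry)
    session = requests.Session()
    session.mount("https://", adapter)
    session.mount("http://", adapter)
    return session


def fetch(url):
    """GET url with separate connect and read timeouts (performs network I/O)."""
    with make_retrying_session() as session:
        # 3.05 s to establish the connection, 27 s to wait for data.
        response = session.get(url, timeout=(3.05, 27))
        response.raise_for_status()
        return response
```

The timeout applies to each attempt, so the worst-case total wait grows with the number of retries and the backoff delay.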

Conclusion

The Python Requests library is an indispensable tool for any developer working with web-based data or services. Its intuitive API, comprehensive features, and robust error handling capabilities make it the preferred choice for tasks ranging from simple API calls to complex web scraping and automation. By abstracting away the complexities of HTTP, Requests empowers developers to build efficient, reliable, and scalable applications that interact seamlessly with the web. Embracing Requests means embracing a more productive and less frustrating approach to HTTP communication in Python.


FAQ

Q1: Why should I use Requests instead of Python's built-in urllib?

Requests offers a much more user-friendly and intuitive API compared to urllib, simplifying common HTTP tasks. It handles many complexities automatically, such as connection pooling, cookie management, and JSON parsing, which require manual implementation with urllib. Requests is designed for humans, making your code cleaner and more efficient.

Q2: Can Requests handle authenticated API calls?

Yes. Basic and Digest authentication are built in, and OAuth is supported through the requests-oauthlib package. Credentials are passed through the auth parameter of the request methods, allowing seamless interaction with protected web resources.

Q3: Is Requests suitable for web scraping?

Requests is a fundamental component for web scraping, as it handles the HTTP requests to retrieve web page content. However, for advanced web scraping scenarios involving anti-bot measures like Cloudflare or DataDome, you might need additional tools like Scrapeless to ensure successful data extraction without being blocked.

Q4: How does Requests handle redirects?

Requests follows HTTP redirects automatically by default for every method except HEAD. When a server responds with a redirect status code (e.g., 301, 302), Requests will follow the redirect to the new URL. You can inspect the response.history attribute to see the chain of redirects that occurred, or pass allow_redirects=False to stop at the first response.
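A short sketch of inspecting a redirect chain follows; the helper names redirect_chain, trace, and first_hop_only are hypothetical.

```python
import requests


def redirect_chain(response):
    """Return (status_code, url) pairs for each hop, ending with the final response."""
    hops = [(r.status_code, r.url) for r in response.history]
    hops.append((response.status_code, response.url))
    return hops


def trace(url):
    """Follow redirects for url and report every hop (performs network I/O)."""
    response = requests.get(url, timeout=10)
    return redirect_chain(response)


def first_hop_only(url):
    """Return the first response without following redirects (network I/O)."""
    return requests.get(url, allow_redirects=False, timeout=10)
```

When redirects are not followed, the target of the redirect is available in the Location header of the returned response.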

Q5: What are sessions in Requests and why are they useful?

requests.Session() objects allow you to persist certain parameters across multiple requests, such as cookies, headers, and authentication credentials. This is particularly useful when interacting with websites that require maintaining a logged-in state or when you need to send multiple requests with the same set of headers, improving efficiency and simplifying code.

References

[1] Postman. (2023). State of the API Report 2023.

[2] Real Python. (2023). Python Requests Library (Guide).

[3] W3Schools. (n.d.). Python Requests Module.

[4] ScrapingBee. (n.d.). What is requests used for in Python?.

At Scrapeless, we only access publicly available data while strictly complying with applicable laws, regulations, and website privacy policies. The content in this blog is for demonstration purposes only and does not involve any illegal or infringing activities. We make no guarantees and disclaim all liability for the use of information from this blog or third-party links. Before engaging in any scraping activities, consult your legal advisor and review the target website's terms of service or obtain the necessary permissions.