How to Build an Amazon Scraper With Scrapeless Agent Browser: A Step-by-step Guide for 2026

Advanced Data Extraction Specialist

Key Takeaways:

- Dynamic rendering, not raw HTML. Amazon product detail pages, search results, and reviews are hydrated client-side; Scrapeless Agent Browser renders them in a real cloud browser. On Amazon specifically, `wait --load networkidle` does not settle (lazy-loaded ad/beacon streams keep firing); use `wait 1500` then `wait '<stable-selector>'` (e.g. `#productTitle` on PDPs, `[data-asin]:not([data-asin=""])` on `/s` pages).

- Per-session geo routing. `--proxy-country`, `--proxy-state`, `--proxy-city`, `--timezone`, and `--languages` keep every request locale-consistent across `.com`, `.co.uk`, `.de`, and `.co.jp`.

- Discover → Extract flow. Amazon rotates class names and attribute hooks across A/B variants. Read the DOM with `get html` before writing selectors, then extract with `eval`; never hardcode class names in shipped code.

- One fresh session per logical operation. In practice, Scrapeless sessions stay clean for 2–3 consecutive hydrated-page fetches. Beyond that they begin returning `chrome://new-tab-page` or terminating silently. Mint a new session per page or per small batch rather than reusing one long-lived session for 20+ requests.

Introduction

Amazon is the single largest product-data surface on the open web: price intelligence, review mining, Best Sellers Rank monitoring, MAP compliance, and brand-protection all flow from the same public listing pages. The official Product Advertising API has restricted approval cycles and does not expose review bodies, BSR time-series, or competitor-seller data — so most serious Amazon data pipelines still scrape.

A modern Amazon scraper has to deal with several realities simultaneously: pages are hydrated in JavaScript, class names rotate across A/B variants, cookies and locale state need to persist across paginated requests, and IP-reputation-based filtering treats datacenter egress harshly. Handling those four concerns in a raw HTTP client — or a locally-installed Playwright — adds weeks of maintenance nobody wants to own.

This guide walks through a terminal-first workflow on top of Scrapeless Agent Browser that handles the parts that normally eat weeks: anti-detection fingerprinting, residential proxies, dynamic rendering, and cross-marketplace locale consistency — all through a single scrapeless-scraping-browser CLI.

What You Can Do With It

- Dynamic competitive pricing. Track competitor ASIN prices in real time on `.com`, feed the signal into repricer rules, and monitor promotional windows without running local browsers.

- MAP compliance monitoring. Scan retailer listings and third-party seller offers against your manufacturer-set Minimum Advertised Price, flagging violations as they appear.

- On-PDP review signal. Cluster the top reviews surfaced on each PDP (5–10 reviews per ASIN via the Step 3 extraction), which is enough for trend detection and reputation monitoring on a rolling basis. Full-corpus review mining across `/product-reviews/*` requires authenticated sessions in 2026; see Step 5.

- Inventory and availability tracking. Monitor stockouts, Prime-eligibility changes, and shipping-lead-time shifts across ASINs to anticipate supply-chain pressure.

- BSR and rank intelligence. Pull Best Sellers Rank across categories and subcategories for market sizing, launch tracking, and category-share analysis.

- Brand protection. Detect counterfeit listings, unauthorized third-party sellers, and trademark violations across marketplace storefronts.

Why Scrapeless Agent Browser

Scrapeless Agent Browser is a customizable, anti-detection cloud browser designed for web crawlers and AI Agents. For Amazon specifically, it brings:

- Residential proxies in 195+ countries (`--proxy-country`, `--proxy-state`, `--proxy-city`) for locale-matched egress, the load-bearing primitive for staying off Amazon's anti-bot interstitial in the first place.

- Anti-detection fingerprinting on every session, so pages render identically to organic traffic.

- Session persistence via `--session-id` across paginated requests, so cookies, cart, and Prime context remain consistent page to page.

- JavaScript rendering in the cloud browser, ensuring hydrated DOM for price blocks, availability banners, lazy-loaded review bodies, and A+ content.

- Per-session locale alignment: `--timezone` and `--languages` automatically align with the selected proxy geography.

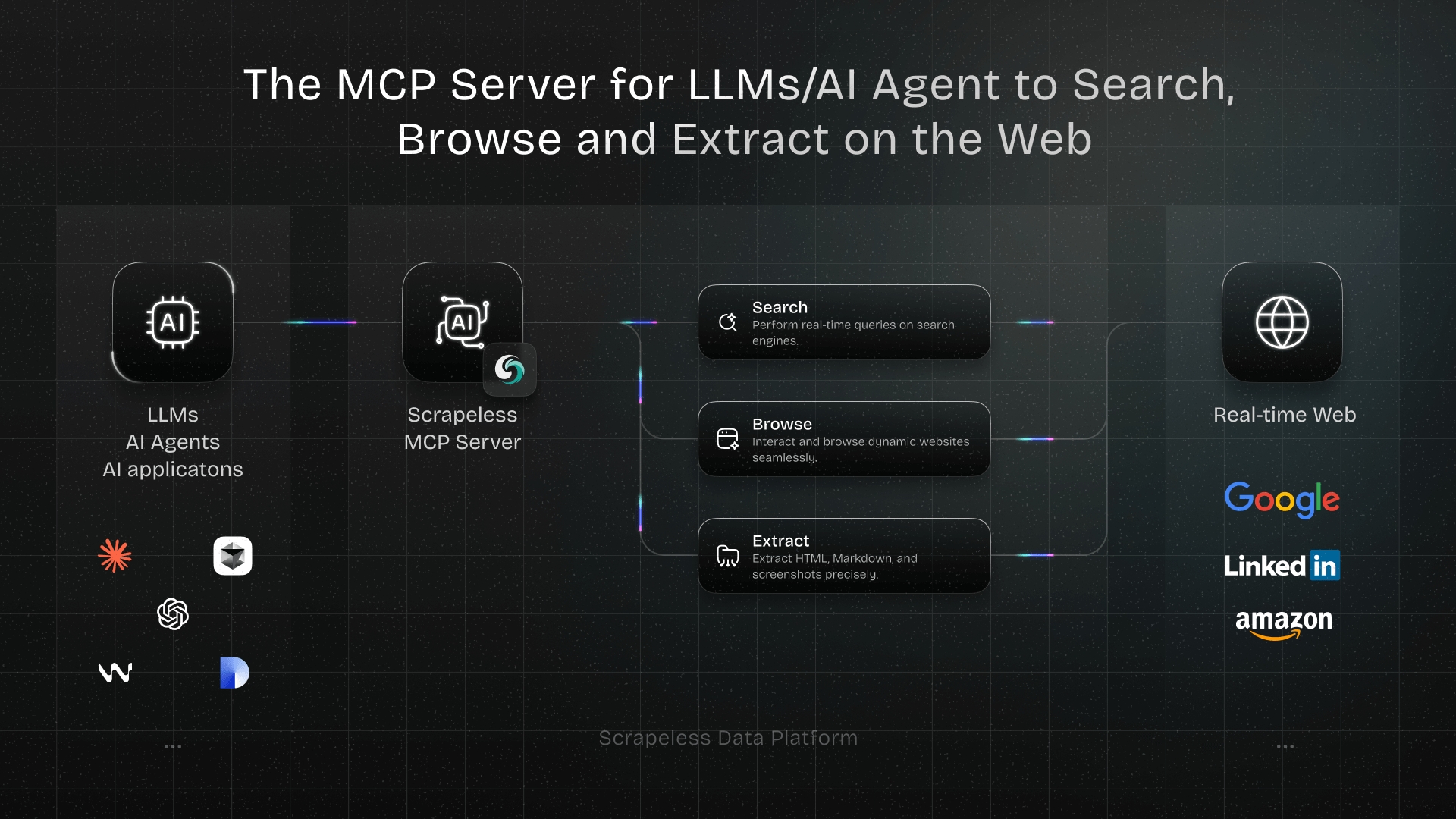

Get your API key on the free plan at scrapeless.com. Related Scrapeless products: Universal Scraping API, Proxy Solutions, and the Scrapeless MCP Server for Model Context Protocol integrations.

If an API-style surface fits your pipeline better than a browser, see the companion Amazon Scraper API and Scraping Amazon Product Data guides. For a full Amazon-focused solutions hub, see scrapeless.com/en/solutions/amazon.

Prerequisites

- Node.js 18 or newer.

- A Scrapeless account and API key — sign up at scrapeless.com.

- `jq` (optional, for JSON parsing in shell scripts; a portable `grep` fallback is shown below).

- Basic familiarity with the terminal.

Install

The recipes below run on the scrapeless-scraping-browser CLI. Setup is three steps plus a verification check: both CLI users and AI-agent users need #1 and #2; AI-agent users also do #3 and #4.

1. Install the CLI package

bash

npm install -g scrapeless-scraping-browser

This provides the scrapeless-scraping-browser binary that every step of this guide calls. The skill does not bring its own runtime: it loads command patterns into your AI agent, but the CLI itself must be installed first.

2. Configure your API key

Get your token from scrapeless.com, then store it where the CLI can read it:

bash

scrapeless-scraping-browser config set apiKey your_api_token_here

scrapeless-scraping-browser config get apiKey # verify

Using an AI agent? The skill's instructions explicitly tell your agent that authentication is required before any session call. If the API key isn't set when the agent first tries to use the CLI, the agent will prompt you and run the config set apiKey … command for you. You can either set it manually now (commands above) or paste your token when the agent asks.

The config file lives at ~/.scrapeless/config.json with access restricted to the current user, takes priority over the environment variable, and is portable across agents and CI runners. For CI pipelines, prefer:

bash

export SCRAPELESS_API_KEY=your_api_token_here

3. Install the Scrapeless skill in your AI agent

This is a separate step from step 1 above. Step 1 installed the CLI binary — the runtime your agent invokes. The skill is what teaches your agent how to invoke it correctly (selectors, waits, retry patterns, the discover→extract workflow). They're two different things, and you need both.

The skill is a folder containing SKILL.md + skill.json + references/. The canonical source is the scrapeless-ai/scrapeless-agent-browser → skills/scraping-browser-skill repo on GitHub.

To install it in Claude Code, Cursor, VS Code + GitHub Copilot, OpenAI Codex CLI, or Gemini CLI, follow the Scrapeless AI Agent install guide — it has the per-agent copy-paste commands (bash and Windows PowerShell). Reload your agent after install so the skill becomes active.

Without the skill installed, your agent doesn't know the discover→extract pattern, the per-engine waits, or the selectors that actually work in 2026, and you'd have to spoon-feed every detail in every prompt.

What the skill loads into your agent's operating context up-front:

- Authentication: check for `~/.scrapeless/config.json` or `SCRAPELESS_API_KEY` and prompt you to set it if missing (see step 2).

- Discover → Extract workflow: the anti-fragility pattern. The agent reads the live DOM with `get html "<region>"` first, identifies stable anchors (`data-*` attributes, `aria-label`, `role`, semantic ids), then writes `eval` selectors based on what's actually rendered, instead of guessing utility class names that rotate across A/B variants and break in weeks.

- Selector syntax: when to use CSS selectors (`#productTitle`, `[data-asin]`) vs accessibility refs (`@e1` from `snapshot -i`).

- Wait gotchas: `wait 1500` between `open` and `wait --load networkidle` to dodge the cold-session `chrome://new-tab-page` race; `wait <selector>` against a target-page element when networkidle won't settle.

- Parallel CLI workers: single-shell `&&` chaining, unique session names, ≤3 concurrent workers per host. `--session-id` alone is not sufficient under daemon contention.

- Common pitfalls: `eval` returns JSON-quoted values (`"49.99"` not `49.99`), `open` exits non-zero on successful navigation, sessions terminate when the connection closes.

- Full command reference: every flag for `new-session`, `open`, `wait`, `eval`, `get`, `click`, `fill`, `snapshot`, `auth`, `profile`, `recording`, `stop`, etc.
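The JSON-quoted `eval` pitfall listed above can be absorbed by a small unwrapping step on the consumer side. The following is a minimal Node.js sketch, not part of the Scrapeless skill: `unwrapEvalOutput` is a hypothetical helper name, and it assumes the CLI prints the `eval` result to stdout as a JSON-encoded value.

```javascript
// Hypothetical helper: unwrap the stdout of an `eval` call.
// Assumption: stdout is valid JSON. When the eval body itself called
// JSON.stringify, the payload arrives as a JSON string containing JSON.
function unwrapEvalOutput(stdout) {
  const outer = JSON.parse(stdout.trim()); // throws if stdout is not JSON
  if (typeof outer !== "string") return outer;
  // Only parse a second time when the string looks like an object or array,
  // so a scalar such as "49.99" stays a string instead of becoming a number.
  if (!/^\s*[\[{]/.test(outer)) return outer;
  try {
    return JSON.parse(outer);
  } catch {
    return outer; // looked structured but was not valid JSON
  }
}
```

Keeping scalars as strings is a deliberate choice here: converting `"49.99"` to a number is left to an explicit parsing step so that titles or ASINs made of digits are not coerced by accident.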

4. Verify the skill is wired up

Before the first real Amazon scrape, smoke-test the install with one safe prompt to your agent:

"Using the Scrapeless skill, open https://example.com and tell me the page title."

Your agent should mint a Scrapeless session, navigate, and reply with "Example Domain". If you see those two words, the skill is loaded, the API key is set, and the cloud browser is reachable — you're ready to scrape Amazon.

If it fails:

| Symptom | Likely cause | Fix |

|---|---|---|

| "I don't have a tool/skill to do that" | Skill not loaded in this agent session | Reinstall via the skill install guide and reload the agent |

| `Authentication failed` / `401` | API key not set | Re-run `scrapeless-scraping-browser config set apiKey <token>` (Install step 2) |

| `command not found` | CLI binary missing on PATH | Re-run Install step 1 (`npm install -g scrapeless-scraping-browser`) |

| Hangs / lands on `chrome://new-tab-page/` | Cold-session wait race | Ask the agent to retry; the skill knows to insert `wait 1500` between `open` and `wait --load networkidle` |

| `page.goto: Timeout 25000ms exceeded` on Amazon (but example.com works) | Scrapeless ↔ Amazon residential-proxy CAPTCHA pressure | Retry on a different proxy country (`--proxy-country GB`/`CA`/`DE`); for sustained use, switch to the Scrapeless Amazon Scraper API |
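For scripted runs, the symptom table above can be encoded as a small classifier that maps an error message to a next action. This is only a sketch: the function name, the action labels, and the exact matching patterns are assumptions derived from the symptom text in the table, not from documented CLI output.

```javascript
// Map an error message to the remediation suggested in the table above.
// Patterns and action labels are illustrative assumptions.
function classifyFailure(message) {
  const rules = [
    [/401|authentication failed/i, "set-api-key"],
    [/command not found/i, "install-cli"],
    [/chrome:\/\/new-tab-page/i, "retry-with-wait-1500"],
    [/page\.goto: Timeout/i, "retry-other-proxy-country"],
  ];
  for (const [pattern, action] of rules) {
    if (pattern.test(message)) return action;
  }
  return "unknown"; // surface to a human instead of guessing
}
```

A caller would typically retry only on the two `retry-*` actions and stop immediately on the configuration errors, since retrying a missing API key or binary cannot succeed.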

How you actually use this: prompt your agent

After install, you scrape Amazon by talking to your agent — not by copy-pasting bash. The skill loads selectors, waits, retry classifiers, and the discover→extract pattern into the agent's context, so a one-line natural-language prompt is enough to get structured JSON back.

Prompts you can paste

| You say to your agent | What you get back |

|---|---|

| "Scrape Amazon for AirPods — top 10 with title, price, rating, ASIN" | JSON list, 10 cards, fields {title, price, rating, asin, url, sponsored} |

| "Get the full product details for ASIN B09B8V1LZ3" | JSON object: {title, price, rating, reviewCount, availability, prime, asin, topReviews[]} |

| "Scrape Amazon search for 'wireless headphones' across pages 1–5, save as headphones.json" | One JSON file, 5 pages × ~22 organic cards each, deduped by ASIN |

| "Pull the top reviews from the Echo Dot product page" | JSON array — author, rating, title, date, body, helpful count — for the on-PDP review carousel |

| "Compare prices for ASIN B09B8V1LZ3 on amazon.com and amazon.co.uk" | Side-by-side prices; .es/.de blocked anonymously — agent will tell you and offer the Scraper API path |

| "Track price for ASIN B09B8V1LZ3 every hour for the next 6 hours" | Polling loop, fresh session per poll, results appended to a CSV/JSON |

| "Find Best Sellers in Electronics → top 20 with price + rating" | JSON, 20 rows from /gp/bestsellers/electronics |

| "Scrape this listing and tell me if it's Prime-eligible: https://amazon.com/dp/B09B8V1LZ3" | Single object with prime: true/false plus the supporting evidence text from the badge |

| "Get the rating histogram (5★/4★/3★/2★/1★ %) for B09B8V1LZ3" | Auth-gated in 2026 — agent will explain and offer the Scraper API path |

| "Search 'office chair under 200', filter ads, return top 10 organic" | Organic-only JSON; sponsored cards stripped via [data-component-type="sp-sponsored-result"] |

| "What's currently on sale in the Apple Store on amazon.com?" | Discover-pass on the storefront, then extract sale-tagged items |

| "For ASIN B09B8V1LZ3 list the bullet-point features and the A+ section text" | Two arrays: feature bullets from #feature-bullets and A+ paragraphs |

Worked example: get the full product card for ASIN B09B8V1LZ3

You type:

"Get the title, price, rating, review count, and top 5 reviews for Amazon ASIN B09B8V1LZ3. Return JSON."

The agent's plan (in plain English):

- Mint a US-egress session (Amazon localizes by IP).

- Open `https://www.amazon.com/dp/B09B8V1LZ3`, `wait 1500`, then `wait '#productTitle'` to dodge the cold-session race.

- Confirm anchors with `get html "#centerCol"` (handles Amazon's frequent layout swaps), then `eval` for `#productTitle`, `.a-price .a-offscreen`, `#acrPopover[title]`, `#acrCustomerReviewText`, and `[data-hook="review"]` × 5.

What you get back (schema is normative — the JSON shape; review text below is an illustrative sample):

json

{

"asin": "B09B8V1LZ3",

"title": "Echo Dot (5th Gen, 2022 release) | Big vibrant sound...",

"price": "$49.99",

"rating": "4.7 out of 5 stars",

"reviewCount": "191,146 ratings",

"availability": "In Stock",

"prime": true,

"topReviews": [

{

// illustrative — actual review fields are populated from [data-hook="review"] × 5

"author": "James M.",

"rating": "5.0 out of 5 stars",

"title": "Excellent voice quality, easy setup",

"date": "Reviewed in the United States on March 14, 2026",

"body": "Setup took two minutes. Voice recognition is noticeably sharper..."

}

// ... 4 more reviews with the same shape

]

}

That's the entire user-facing surface for this scrape. The bash, selectors, and wait choreography in Steps 1–6 below are what the skill makes the agent run; you don't have to type any of them.

Shaping prompts: how to control what comes back

Small phrasings change what the agent extracts and how it returns it.

| Phrasing | Effect |

|---|---|

| "…return JSON" / "…as CSV" | Output format |

| "…fields: title, price, rating only" | Restricts the fields the agent extracts |

| "…across pages 1–5" / "…top 50" | Pagination depth — fresh session per page |

| "…save to airpods.json" | Writes to file instead of just printing |

| "…organic only" / "…drop sponsored" | Filter — [data-component-type="sp-sponsored-result"] exclusion |

| "…then for each ASIN, also pull the bullet features" | Chains a second pass per result |

| "…use a UK IP" | Sets --proxy-country GB on the session |

| "…poll every 30 minutes for 4 hours" | Loop — fresh session per iteration |

That's the workflow. Steps 1–6 below are not a copy-paste recipe — they're the under-the-hood reference for understanding what the skill makes your agent do. Read them once to see how the discover → extract pattern composes; then trust your agent to apply it. Scripting outside an agent is possible (the bash works as shown) but is not the recommended path — the skill is the product.

Step 1 — Connect to Scrapeless Agent Browser

Create a US-egress session. The proxy geography is set before the browser opens any page — it cannot change mid-session.

bash

SESSION=$(scrapeless-scraping-browser new-session \

--name "amazon-us" \

--ttl 1800 \

--recording true \

--proxy-country US \

--proxy-state CA \

--proxy-city "Los Angeles" \

--json | jq -r '.data.taskId')

echo "Session: $SESSION"

Portable fallback without jq:

bash

SESSION=$(scrapeless-scraping-browser new-session \

--name "amazon-us" --ttl 1800 --recording true \

--proxy-country US --proxy-state CA --proxy-city "Los Angeles" --json \

| grep -oE '"taskId":"[^"]*"' | cut -d'"' -f4)

Residential-proxy allocations occasionally return a transient 503 on the first attempt; retry once. Allocation latency is usually a few seconds for US and may be longer for less-populated geos; treat the first session of a run as a probe and retry if it doesn't return a taskId.
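The retry-once advice can be sketched in Node.js as follows. The `.data.taskId` path mirrors the `jq` filter shown above; everything else is an assumption for illustration: `parseTaskId` and `mintSession` are hypothetical names, and `createSession` stands for any async function you supply that runs `new-session --json` and resolves to its raw output.

```javascript
// Extract the session id from `new-session --json` output.
// Returns null for anything that is not JSON with a non-empty data.taskId.
function parseTaskId(jsonText) {
  try {
    const id = JSON.parse(jsonText)?.data?.taskId;
    return typeof id === "string" && id.length > 0 ? id : null;
  } catch {
    return null; // e.g. a plain-text 503 message
  }
}

// Call `createSession` until a taskId comes back or attempts run out.
// Defaults give one retry, matching the "retry once" guidance.
async function mintSession(createSession, attempts = 2, delayMs = 1000) {
  for (let i = 1; i <= attempts; i++) {
    const raw = await createSession().catch(() => "");
    const id = parseTaskId(raw);
    if (id) return id;
    if (i < attempts) await new Promise((r) => setTimeout(r, delayMs));
  }
  throw new Error(`no taskId after ${attempts} attempts`);
}
```

Treating "no taskId" and "call rejected" the same way keeps the probe logic simple: either outcome means the first allocation did not produce a usable session.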

Step 2 — Discover the DOM before writing selectors

Amazon rotates class names, test IDs, and component attributes across A/B variants. The Scrapeless pattern is discover → extract: read the live DOM first, then build selectors from what the current variant actually serves.

bash

ASIN="B0XXXXXXXX"

scrapeless-scraping-browser --session-id $SESSION open "https://www.amazon.com/dp/$ASIN"

# Brief pause so the following wait does not resolve against the pre-warm

# chrome://new-tab-page/ before navigation commits.

scrapeless-scraping-browser --session-id $SESSION wait 1500

# networkidle does not settle on Amazon (lazy-loaded ad/beacon streams keep

# firing), so wait for a stable target-page element instead.

scrapeless-scraping-browser --session-id $SESSION wait '#productTitle'

# Peek at the product-information region

scrapeless-scraping-browser --session-id $SESSION get html "div[id*='productTitle'], div[id*='centerCol']"

From the returned HTML, identify the stable anchors: the ASIN is on a data-asin attribute at the card level; the price is next to a Price label; the availability text lives under a distinct region; Prime eligibility is a role-labelled badge. Use those patterns, not the utility class names, in the extraction step.
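One way to make the discover pass less manual is to list candidate anchors from the HTML that `get html` returns. The sketch below is a rough Node.js inventory, not a Scrapeless feature: `listStableAnchors` is a hypothetical name, and the regex only handles double-quoted attributes, which is an assumption about the returned markup.

```javascript
// List candidate stable anchors (ids, roles, aria-labels, data-* hooks)
// found in an HTML fragment. Regex-based on purpose: this is a quick
// inventory for a human or agent to review, not an HTML parser.
function listStableAnchors(html) {
  const anchors = new Set();
  const attr = /\s(id|role|aria-label|data-[\w-]+)\s*=\s*"([^"]*)"/g;
  for (const [, name, value] of html.matchAll(attr)) {
    if (value === "") continue; // empty hooks such as data-asin="" are noise
    anchors.add(name === "id" ? `#${value}` : `[${name}="${value}"]`);
  }
  return [...anchors];
}
```

Class attributes are ignored deliberately, since rotating utility class names are exactly what the discover → extract pattern is meant to avoid.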

Step 3 — Extract core PDP fields

With selectors discovered in Step 2, extract the canonical PDP schema via a single eval. Role-based and semantic anchors survive layout refactors better than utility class hooks.

bash

scrapeless-scraping-browser --session-id $SESSION eval '

(() => {

const q = (sel) => document.querySelector(sel);

const txt = (el) => el ? el.textContent.trim() : null;

const num = (s) => s ? Number(s.replace(/[^\d.]/g, "")) : null;

// Selectors below are examples — replace with anchors you discovered in Step 2

const title = txt(q("#productTitle"));

const priceText = txt(q("[data-a-color=\"price\"] .a-offscreen, .a-price .a-offscreen"));

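// Rating: the title attribute on #acrPopover reads like "4.7 out of 5 stars";

// parseFloat keeps the leading number (stripping non-digits would yield 4.75)

const ratingText = q("#acrPopover")?.getAttribute("title");

const rating = ratingText ? parseFloat(ratingText) : null;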

const reviews = num(txt(q("#acrCustomerReviewText")));

const availability = txt(q("#availability span"));

const prime = !!q("[aria-label*=\"Prime\"]");

const asinMatch = location.pathname.match(/\/(?:dp|gp\/product)\/([A-Z0-9]{10})/i);

return JSON.stringify({

asin: asinMatch ? asinMatch[1] : null,

title, price: priceText, availability, rating, reviewCount: reviews,

primeEligible: prime, url: location.href,

});

})()

'

For richer schemas (bullet features, brand, seller, ship-from, image gallery, variant matrix), repeat the discover → extract cycle for each region. The ASIN-level wiki guide and product-price wiki guide document additional fields.
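Display strings from a PDP need slightly different parsing per field. For example, stripping every non-digit character from "4.7 out of 5 stars" produces 4.75, because the trailing 5 is kept. The helpers below are a minimal sketch with hypothetical names, assuming US-formatted English text as in the sample output earlier in this guide.

```javascript
// "4.7 out of 5 stars" -> 4.7 (parseFloat stops at the first non-numeric char)
function parseRating(text) {
  const n = parseFloat(text ?? "");
  return Number.isFinite(n) ? n : null;
}

// "191,146 ratings" -> 191146 (assumes a full integer, not "1.2K" shorthand)
function parseCount(text) {
  const digits = (text ?? "").replace(/[^\d]/g, "");
  return digits ? Number(digits) : null;
}

// "$1,249.00" -> 1249 (US-style only: "," thousands, "." decimal)
function parseUsPrice(text) {
  const m = (text ?? "").replace(/,/g, "").match(/\d+(?:\.\d+)?/);
  return m ? Number(m[0]) : null;
}
```

These can run either inside the `eval` payload or in the consumer after the JSON comes back; running them in the consumer keeps the browser-side code shorter and leaves the raw strings available for debugging.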

Step 4 — Scrape a category search with pagination

Amazon paginates search results via &page=N. The accessible organic depth for a single query is approximately 20 pages (~400 items) — a structural index constraint. For broader coverage, split the query by facet (brand, price band, subcategory) rather than pushing past the cap.

bash

QUERY="wireless+headphones"

for page in $(seq 1 20); do

# Mint a fresh session per page — long-lived session reuse degrades after

# ~3 hydrated-page requests (silent returns of chrome://new-tab-page or

# outright termination). One-session-per-page is both more reliable and

# trivially parallelizable.

SID=$(scrapeless-scraping-browser new-session --name "search-p$page" --ttl 300 \

--json | jq -r '.data.taskId')

URL="https://www.amazon.com/s?k=$QUERY&page=$page"

scrapeless-scraping-browser --session-id $SID open "$URL"

# networkidle never settles on /s due to lazy-loaded streams; wait for cards instead

scrapeless-scraping-browser --session-id $SID wait '[data-asin]:not([data-asin=""])'

scrapeless-scraping-browser --session-id $SID eval "

(() => {

const cards = document.querySelectorAll('[data-asin]:not([data-asin=\"\"])');

// Card count varies (~16–60 depending on viewport, query, and A/B variant);

// emit a per-page index and let the consumer compute absolute rank from that

// plus the page number rather than baking a fixed multiplier in here.

return JSON.stringify({

query: '$QUERY', page: $page,

results: [...cards].map((c, i) => ({

pageIndex: i + 1,

asin: c.getAttribute('data-asin'),

title: c.querySelector('h2 span')?.textContent?.trim() || null,

price: c.querySelector('.a-price .a-offscreen')?.textContent || null,

// Rating: try multiple star-widget surfaces — Amazon rotates these

rating: parseFloat(

c.querySelector('[aria-label*=\"out of 5 stars\"]')?.getAttribute('aria-label')

|| c.querySelector('i.a-icon-star span.a-icon-alt, i.a-icon-star-small span.a-icon-alt')?.textContent

|| ''

) || null,

sponsored: c.querySelector('[data-component-type=\"sp-sponsored-result\"]') !== null || c.matches('[data-component-type=\"sp-sponsored-result\"]'),

prime: !!c.querySelector('[aria-label*=\"Prime\"]'),

url: c.querySelector('a.a-link-normal.s-underline-link-text')?.href || null,

})),

});

})()

" > "results-page-$page.json"

scrapeless-scraping-browser stop $SID

done

One fresh session per page, not one long session. In theory a reused --session-id preserves cookies and fingerprint; in practice Scrapeless sessions start returning blank chrome://new-tab-page or terminating after ~3 hydrated-page requests, so the reliable pattern is to mint a short-TTL session per page and stop it at the end. Parallelizing across pages is then straightforward: run the loop body in background workers, subject to the concurrency cap described below.
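Because each page emits only a per-page `pageIndex`, absolute rank has to be computed by the consumer. A minimal sketch, assuming the `results-page-N.json` files have already been parsed into objects of the shape the loop writes (`withAbsoluteRank` is a hypothetical name):

```javascript
// pages: array of { page, results: [{ pageIndex, asin, ... }] } objects.
// Adds `page` and an absolute `rank` to every row.
function withAbsoluteRank(pages) {
  const ordered = [...pages].sort((a, b) => a.page - b.page);
  let offset = 0;
  const ranked = [];
  for (const { page, results } of ordered) {
    for (const r of results) {
      ranked.push({ ...r, page, rank: offset + r.pageIndex });
    }
    offset += results.length; // actual card count, not a fixed multiplier
  }
  return ranked;
}
```

If a page is missing from the input (for example, a failed fetch), every later rank shifts down by that page's card count, so it is worth checking that the page numbers are contiguous before trusting the ranks.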

ASINs are NOT unique across pages 1–5 (sponsored ASINs legitimately repeat — 104 unique / 274 total observed); don't assume cross-page ASIN sets are disjoint when deduping.
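A deduplication pass that accounts for repeated sponsored ASINs might look like the sketch below. The policy (an organic row replaces a sponsored row for the same ASIN, otherwise the first occurrence wins) is an assumption chosen for illustration, and `dedupeByAsin` is a hypothetical name.

```javascript
// Keep one row per ASIN. An organic row replaces a sponsored one for the
// same ASIN; otherwise the first occurrence wins.
function dedupeByAsin(rows) {
  const byAsin = new Map();
  for (const row of rows) {
    const seen = byAsin.get(row.asin);
    if (!seen || (seen.sponsored && !row.sponsored)) {
      byAsin.set(row.asin, row);
    }
  }
  return [...byAsin.values()]; // Map keeps first-insertion order per ASIN
}
```

Preferring the organic row keeps the organic position and price for downstream ranking, while still counting the ASIN only once.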

Keep concurrency ≤ 3 per host. Running 4+ simultaneous CLI invocations against the same local daemon surfaces target-page-crash and "Target page, context or browser has been closed mid-eval" errors. For higher throughput, shard across multiple hosts (or multiple user accounts) rather than pushing concurrency up on a single box.

Running parallel workers? The default `~/.scrapeless-scraping-browser/` daemon is shared across processes on the same host: multiple workers can hijack each other's sessions even when each passes its own `--session-id`. What actually works: chain every CLI call for one job as a single-shell `&&` invocation (atomic from the daemon's POV, so other workers can't interleave between your steps), use unique session names (port is hashed from the name), and cap concurrency around 3 workers per host. `USERPROFILE`/`HOME` env-var isolation is documented in the upstream skill but does not isolate the Rust v0.1.1 binary on Windows in our testing; for higher fan-out, shard across hosts. See `skill-dev/SKILL.md` "Parallel CLI Agents" for the full pattern.
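The cap of roughly 3 workers per host can be enforced with a small promise pool. This is a generic Node.js sketch rather than anything shipped with the CLI: `runWithLimit` is a hypothetical name, and each job is assumed to be an async function that runs one page's full command chain.

```javascript
// Run async jobs with at most `limit` in flight; results keep input order.
async function runWithLimit(jobs, limit = 3) {
  const results = new Array(jobs.length);
  let next = 0;
  async function worker() {
    while (next < jobs.length) {
      const i = next++; // safe: no await between the check and the increment
      results[i] = await jobs[i]();
    }
  }
  const workers = Array.from({ length: Math.min(limit, jobs.length) }, worker);
  await Promise.all(workers);
  return results;
}
```

If any job rejects, the returned promise rejects with that error while already-started jobs run to completion, so a job that should not abort the batch needs its own error handling.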

For deeper coverage strategies, see How to Scrape Amazon Category Data.

Step 5 — A note on reviews and the star histogram

Throughout 2025, /product-reviews/<ASIN>/ pages were anonymously accessible and the Cartesian product of star-filter × page-number made full review-corpus scraping straightforward. In 2026, Amazon changed the gate: anonymous requests to review URLs now redirect to /ap/signin in the overwhelming majority of cases. The on-PDP review carousel ([data-hook="review"] inside the main PDP) remains anonymously accessible — that's the 5–10 top reviews surfaced in Step 3's extraction — but the per-star-filter deep traversal and the star-rating histogram on the reviews page require an authenticated session.

Two practical paths forward:

- Use the on-PDP top reviews (available in the Step 3 extraction; no extra navigation needed) when 5–10 reviews per ASIN is enough — usually true for reputation monitoring and trend detection.

- For the full review corpus, pair Scraping Browser with authenticated session state. `auth save <name>` stores credentials and `auth login <name>` replays them, but MFA prompts still appear on each fresh login, so this is practical only for Amazon accounts without MFA enforcement. For pipelines needing the full historical review corpus at scale, the Scrapeless Amazon Scraper API handles the server-side auth so the caller doesn't.

Either way, don't attempt anonymous /product-reviews/* scraping in 2026 — every request after the first couple hits /ap/signin and the session is effectively dead for review work.
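A pipeline can detect the sign-in gate cheaply by checking where navigation ended up, for instance from the value of `location.href` read via `eval`. A minimal sketch with a hypothetical helper name:

```javascript
// True when a final URL is the /ap/signin gate rather than content.
function isSignInRedirect(finalUrl) {
  try {
    return new URL(finalUrl).pathname.startsWith("/ap/signin");
  } catch {
    return false; // not a parseable absolute URL
  }
}
```

When this returns true, stopping the session and skipping further review URLs avoids spending requests on pages that will all hit the same gate.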

Step 6 — A note on cross-marketplace snapshots

The ASIN is globally stable, while pricing, availability, and seller pool differ across .com, .co.uk, .de, and .co.jp, so in principle a proxy-aligned session per marketplace is the natural pattern. In practice, US-routed sessions hitting non-US marketplaces frequently land on locale interstitials (e.g., amazon.es/dp/<ASIN> returns the Spanish "Seguir comprando" continue-shopping page, which is ~160 bytes with no #productTitle and no add-to-cart markers, instead of the product detail page). Non-US marketplace requests are currently best served by the Scrapeless Amazon Scraper API, which handles marketplace routing server-side.

For the US marketplace specifically (.com), every step of this guide works end-to-end without any geo flag. For multi-marketplace pipelines today, use the Scraper API for non-US marketplaces and the Scraping Browser for the US custom-flow work.
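The interstitial symptoms and the routing advice above can be expressed as two small checks. Both are sketches under stated assumptions: the function names and the `"scraping-browser"` / `"scraper-api"` labels are hypothetical, and the 2000-byte floor is an arbitrary threshold picked to sit well above the ~160 bytes reported for the interstitial.

```javascript
// Heuristic check for a locale interstitial instead of a real PDP.
// minBytes is an assumed threshold, not a documented value.
function looksLikeInterstitial(html, minBytes = 2000) {
  const tiny = Buffer.byteLength(html, "utf8") < minBytes;
  const hasTitle = /id\s*=\s*["']productTitle["']/.test(html);
  return tiny || !hasTitle;
}

// Route by marketplace host: amazon.com goes to the browser workflow,
// every other marketplace domain goes to the API path.
function pickTool(url) {
  const host = new URL(url).hostname;
  return /(^|\.)amazon\.com$/.test(host) ? "scraping-browser" : "scraper-api";
}
```

Anchoring the host pattern at the end keeps domains such as `amazon.com.au` or `amazon.com.mx` from being mistaken for the US marketplace.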

See Scraping Amazon With Residential Proxies and Proxy for Scraping Amazon for background on the residential-proxy model.

What You Get Back

The Scraping Browser returns a live DOM — the extraction schema is whatever the caller writes into the eval step. For a single PDP pass with the discover → extract template from Step 3, the fields that reliably come back today look like this:

json

// Schema is normative; field values below are illustrative samples

// from a recent capture, not a frozen snapshot of B09B8V1LZ3 today.

{

"asin": "B09B8V1LZ3",

"title": "Echo Dot (5th Gen, 2022 release) | Big vibrant sound...",

"brand": "Amazon",

"final_price": "49.99",

"availability": "In Stock",

"rating": 4.7,

"review_count": 191146,

"prime_eligible": true,

"amazon_choice": true,

"bought_past_month": "10K+ bought in past month",

"features": [

"PERFECT FOR ANY ROOM – Vibrant sound...",

"VOICE CONTROL YOUR MUSIC – Stream songs..."

],

"image_url": "https://m.media-amazon.com/images/I/...",

"images_count": 9,

"delivery": "FREE delivery on qualifying orders",

"url": "https://www.amazon.com/dp/B09B8V1LZ3",

"domain": "www.amazon.com"

}

A few honest observations about this output, worth knowing before running at scale:

- The detail-spec table is template-gated. Fields that live inside `#productDetails_detailBullets_sections1` / `#detailBulletsWrapper_feature_div` (`product_dimensions`, `item_weight`, `model_number`, `manufacturer`, `upc`, `date_first_available`, `department`) are frequently absent from the DOM Amazon serves. A different residential IP, or appending `?language=en_US&m=ATVPDKIKX0DER` to the URL, sometimes surfaces the full template. For fields that must be present every run, the Scrapeless Amazon Scraper API resolves this server-side.

- Best Sellers Rank (BSR) and its ancestor categories (`bs_rank`, `bs_category`, `root_bs_rank`, `root_bs_category`, `subcategory_rank`) are part of that same detail-spec block. Same caveat.

- `number_of_sellers` requires a secondary navigation to `/gp/offer-listing/<ASIN>/` and then extracting the seller-row count.

- `top_review` comes from the PDP review carousel (`[data-hook="review-body"]`). The fallback path to `/product-reviews/<ASIN>/` no longer works anonymously in 2026 (see Step 5); rely on the PDP carousel for 5–10 top reviews per ASIN.

- `parent_asin` requires scanning the variant-twister region (`#twister` / `#variation_*`); only present on products that ship variants.
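Appending the `language` and `m` parameters mentioned above is safer with the standard `URL` API than with string concatenation, since the PDP URL may already carry a query string. A minimal sketch with a hypothetical helper name:

```javascript
// Add the language and merchant parameters to a PDP URL without
// clobbering any query string that is already present.
function withFullTemplateParams(pdpUrl) {
  const url = new URL(pdpUrl);
  url.searchParams.set("language", "en_US");
  url.searchParams.set("m", "ATVPDKIKX0DER");
  return url.toString();
}
```

Using `set` rather than `append` means calling the helper twice on the same URL does not duplicate the parameters.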

A search-page snapshot returns a structured results array:

json

{

"query": "wireless headphones",

"page": 1,

"results": [

{

"rank": 1, "asin": "B0XXXXXXXX", "title": "...",

"price": "$79.99", "rating": 4.5, "review_count": 12034,

"sponsored": false, "prime": true,

"url": "https://www.amazon.com/dp/B0XXXXXXXX"

}

]

}

Need the full 55-field schema? Use the Amazon Scraper API

The companion product — the Scrapeless Amazon Scraper API — returns a pre-normalized ~55-field schema for any ASIN: parent_asin, buybox_prices{initial, final, unit, discount}, full BSR across ancestor categories (root_bs_rank, bs_rank, subcategory_rank), number_of_sellers, seller_id, product_details[{type, values}], top_review, answered_questions, variations, and the rest — without writing a single selector or extra navigation.

| | Scrapeless Scraping Browser (this guide) | Scrapeless Amazon Scraper API |

|---|---|---|

| Output | Live DOM; you write the `eval` | Pre-normalized JSON, ~55 fields |

| Selector maintenance | On the caller | On Scrapeless |

| Best for | Logged-in views, custom fields, multi-step flows, region-gated pages, add-to-cart automation, per-variant navigation | High-volume product intelligence, MAP monitoring, repricers, structured analytics |

| Normalization (`is_available`, `buybox_prices` split, BSR ancestor traversal) | Caller's responsibility | Handled server-side |

| Good first choice when… | You need exactly the field subset your pipeline cares about, or non-PDP flows (cart, saved-for-later, wishlist, Subscribe & Save) | You want the canonical Amazon product object with guaranteed schema |

Both products share the same Scrapeless account and the same residential-proxy pool. Pick the right tool per use case; they compose cleanly when a pipeline needs both.

For full schema reference and example payloads, see Scrape Amazon Product Data and the Amazon solutions hub.

A few more honest observations about search-card outputs, worth knowing before running at scale:

- The `currency` field is usually inferable from the marketplace domain but not always emitted inline by the page; derive it from the session's marketplace context rather than parsing the price string.

- `availability` text is localized; normalize to an enum (`InStock`, `OutOfStock`, `BackOrdered`, `LimitedStock`, `Unavailable`) before downstream use.

- The `rating` and `reviewCount` fields on search-result cards are occasionally missing on brand-new listings; treat them as nullable.
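The first two observations can be sketched as consumer-side helpers. The names are hypothetical, the currency table covers only the four marketplaces named in this guide, and the availability patterns are English-only assumptions; localized text would need its own rules per marketplace.

```javascript
// Marketplace host -> ISO 4217 currency code.
const CURRENCY_BY_HOST = {
  "amazon.com": "USD",
  "amazon.co.uk": "GBP",
  "amazon.de": "EUR",
  "amazon.co.jp": "JPY",
};

function currencyFor(url) {
  const host = new URL(url).hostname.replace(/^www\./, "");
  return CURRENCY_BY_HOST[host] ?? null; // null: marketplace not in the table
}

// Map English availability text to the enum listed above.
// Returns null when nothing matches, so unknown phrasing is not mislabeled.
function normalizeAvailability(text) {
  const t = (text ?? "").toLowerCase();
  if (/only \d+ left/.test(t)) return "LimitedStock";
  if (/out of stock/.test(t)) return "OutOfStock";
  if (/in stock/.test(t)) return "InStock";
  if (/back-?order/.test(t)) return "BackOrdered";
  if (/unavailable/.test(t)) return "Unavailable";
  return null;
}
```

The "only N left" check runs first because that phrasing usually also contains "in stock" and would otherwise be reported as plain `InStock`.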

Conclusion

Scraping Amazon at scale is no longer just about fetching HTML — it requires reliable rendering, locale-aware sessions, and a workflow that can survive frequent DOM changes and anti-bot pressure. Scrapeless Scraping Browser brings these pieces together in a production-ready way, making it easier to collect stable product data, reviews, and search results without maintaining fragile local browsers.

For teams that need simple, structured Amazon data at scale, Scrapeless Amazon Scraper API is the better fit. For workflows that require interaction, custom navigation, or browser-level control, Scrapeless Scraping Browser gives you the flexibility and resilience to build on top of real web pages. In practice, the two products work best as a combined stack: use the API for standardized extraction, and the browser for complex flows and edge cases.

Ready to Scrape and Earn?

Join our vibrant community to claim a free plan and connect with fellow innovators:

Scrapeless Official Discord Community

Scrapeless Official Telegram Community

FAQ

Q1: Why use Scrapeless Scraping Browser instead of a regular scraper or local Playwright setup?

Amazon pages are heavily dynamic and often protected by anti-bot systems. Scrapeless Scraping Browser gives you real browser rendering, residential proxies, fingerprinting protection, and session control in one place, which makes it much more reliable for production scraping.

Q2: Do I need a proxy to scrape Amazon?

Yes. Datacenter IP ranges are filtered aggressively on Amazon's edge, and request patterns from a single IP are throttled quickly. Residential proxies — wired into Scrapeless Scraping Browser via --proxy-country, --proxy-state, and --proxy-city — produce stable collection rates. See Proxy for Scraping Amazon and Scraping Amazon With Residential Proxies.

Q3: How is this different from the Amazon Scraper API?

The Amazon Scraper API returns structured JSON for well-known endpoints (ASIN lookup, category pages, search) without the caller rendering a browser — ideal for pipelines that want a fixed schema and no extraction logic. Scrapeless Scraping Browser is the right tool when the page needs JavaScript rendering, when navigation sequences matter (filters, dropdowns, expandable sections), or when per-session cookie/fingerprint continuity across paginated requests is required.

Q4: Which marketplaces are supported?

Every Amazon marketplace domain is a plain URL — .com, .co.uk, .de, .fr, .it, .es, .co.jp, .com.au, .in, .com.mx, .com.br, and so on. Align --proxy-country, --timezone, and --languages with the marketplace's origin region. In practice (see Step 6), the US marketplace works end-to-end on the Scraping Browser; non-US marketplaces are currently best served by the Scrapeless Amazon Scraper API, which handles marketplace routing server-side.

Q5: What happens when Amazon changes the DOM?

The discover → extract flow is the durable answer: get html the relevant region first, identify stable anchors (ASIN data attributes, ARIA labels, semantic role-based locators), and populate eval with selectors derived from the current DOM — not hardcoded class names. When the page refactors, the same discover step adapts automatically.

At Scrapeless, we only access publicly available data while strictly complying with applicable laws, regulations, and website privacy policies. The content in this blog is for demonstration purposes only and does not involve any illegal or infringing activities. We make no guarantees and disclaim all liability for the use of information from this blog or third-party links. Before engaging in any scraping activities, consult your legal advisor and review the target website's terms of service or obtain the necessary permissions.