Hermes Agent + Scrapeless: 1-Line CDP Integration for Anti-Detection Web Agents

Scraping and Proxy Management Expert

Key Takeaways:

- One config-line integration. Hermes Agent by Nous Research has a built-in browser tool that already speaks the Chrome DevTools Protocol. Pointing it at Scrapeless Scraping Browser is a single browser.cdp_url line in ~/.hermes/config.yaml. No SDK install, no CLI subprocess, no agent-side code change.

- Every Hermes browser action runs in the Scrapeless cloud browser. browser_navigate, browser_snapshot, browser_click, browser_type, browser_scroll, browser_press, browser_get_images, and browser_vision execute inside the Scrapeless cloud browser, behind residential proxies, with anti-detection fingerprinting on every session.

- Multi-channel reach. Hermes' gateway exposes the agent over Telegram, Discord, Slack, WhatsApp, Signal, email, and a CLI. With Scrapeless wired in, the cloud browser becomes the back-end of any chat-driven research, lead-generation, or monitoring workflow without exposing a separate scraping endpoint.

- Anti-detection cloud browser, residential proxies in 195 countries. Scrapeless Scraping Browser handles JavaScript rendering, residential-proxy egress, browser fingerprint customization (user agent, time zone, language, screen resolution), and session persistence at the platform level, so the agent can focus on the task itself rather than on access infrastructure.

- Direct CDP integration. Pointing Hermes' browser tool at the Scrapeless WSS endpoint is the entire wiring — no skill drop-in, no SDK, no subprocess.

Introduction: from local Chromium to a hardened cloud browser

Hermes Agent is an open-source autonomous agent with persistent memory, autonomous skill creation, and a multi-channel gateway. Out of the box it ships a browser tool that uses an accessibility-tree model — pages render to text snapshots with interactive elements labeled @e1, @e2, @e3, and the LLM drives navigation and form-fill against those refs. This works well for documentation lookups and basic navigation tasks.

The commercial web is a different surface. Cloudflare Turnstile, reCAPTCHA, Akamai Bot Manager, IP-reputation lists, and JavaScript-only SPAs sit between automated clients and many retailers, marketplaces, and SERPs. A local Chromium running unaided is often identified as automated traffic by these layers. Workflows the agent could otherwise complete — pulling pricing from a category page, monitoring a public listings page, filling an authenticated form, extracting a typed dataset for downstream RAG — pause at the first interstitial.

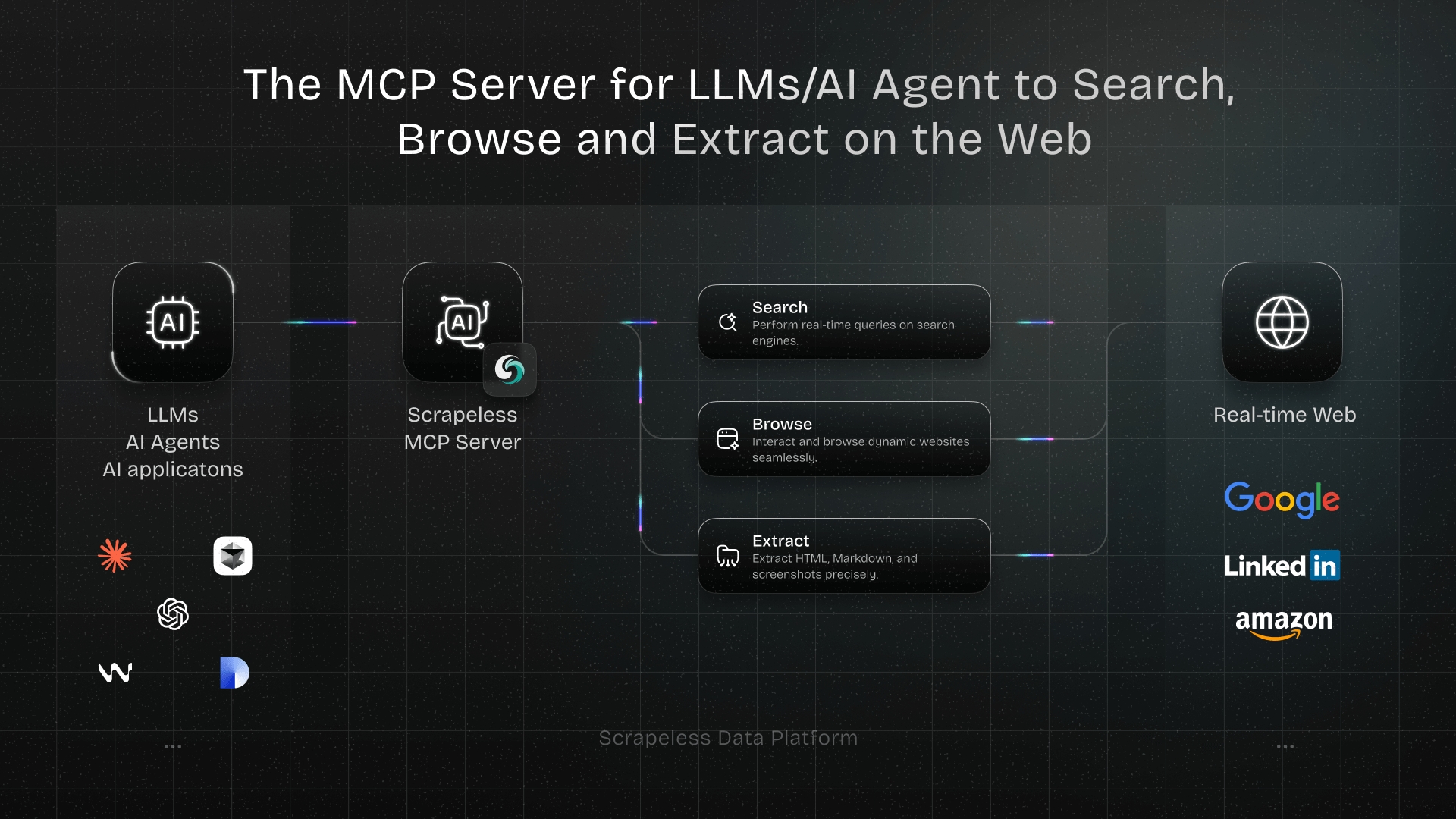

Scrapeless Scraping Browser is an anti-detection cloud browser exposed over the Chrome DevTools Protocol. It ships a residential-proxy network spanning 195 countries (per Scrapeless's documentation) and randomizes browser fingerprints per session. Hermes' browser tool already speaks CDP. The integration is one config line. This post walks through the wiring, the prompts the agent will accept, and the discover → extract pattern that scales the combination across sites.

Why Scrapeless Scraping Browser

Scrapeless Scraping Browser is a customizable, anti-detection cloud browser designed for web crawlers and AI agents. For Hermes Agent specifically, it brings:

- Chrome DevTools Protocol surface: Hermes' browser tool already speaks CDP. The cloud browser drops in behind the same tool calls without recompilation, configuration sprawl, or new code paths.

- Residential proxies in 195 countries: geo-bound queries return the listings a local user would see, with rotation per session and no per-request setup.

- Cloud-side JavaScript rendering: pages are fully hydrated before extraction, so SPAs, infinite-scroll feeds, and lazy-loaded panels are first-class targets for browser_snapshot and browser_vision.

- Browser fingerprint customization: core parameters (user agent, time zone, language, screen resolution) are configurable per session, per Scrapeless's documentation; consistent identities are available via Scrapeless's custom fingerprint feature when continuity matters.

- Session persistence through the sessionTTL (60–900 seconds) and sessionName query parameters on the WSS endpoint, so multi-step Hermes flows reuse the same warm browser, cookies, and scroll position across tool calls.

- Single management surface: one API key, one cloud account, and dashboard-side recording for replay.

Sign up on Scrapeless to get an API key on the free plan, and join our official community:

Scrapeless Official Discord Community

Scrapeless Official Telegram Community

Prerequisites

- Hermes Agent installed. The official installer covers Linux, macOS, WSL2, and Termux on Android, and the setup wizard runs on first launch:

  curl -fsSL https://raw.githubusercontent.com/NousResearch/hermes-agent/main/scripts/install.sh | bash

- A Scrapeless account and API key: sign up at Scrapeless and copy the key from Settings → API Key Management.

- Python 3.11 or newer: Hermes' runtime requirement.

- A chat-model API key: Hermes is provider-agnostic (Nous Portal, OpenRouter, NVIDIA NIM, Xiaomi MiMo, and any custom OpenAI-compatible endpoint). Configure whichever provider Hermes is already wired to.

- Basic familiarity with editing ~/.hermes/config.yaml or running Hermes' CLI subcommands.

Install

The full setup is four sub-steps. Each one is independently verifiable, so you can pause and confirm before moving on.

1. Get the Scrapeless API key

Sign up at Scrapeless, open the dashboard, and from Settings → API Key Management create a key. Copy the value — it goes into the Hermes config in step 2.

2. Point Hermes' browser tool at the Scrapeless WSS endpoint

Open ~/.hermes/config.yaml (create the file if it does not exist) and add the browser.cdp_url line. The Scrapeless CDP endpoint accepts the API key, the proxy country, and the session TTL as query parameters:

yaml

# ~/.hermes/config.yaml
browser:
  cdp_url: "wss://browser.scrapeless.com/api/v2/browser?token=YOUR_SCRAPELESS_API_KEY&proxyCountry=US&sessionTTL=600"

That single line routes every Hermes browser tool call (browser_navigate, browser_snapshot, browser_click, browser_type, browser_scroll, browser_press, browser_get_images, browser_vision) through the Scrapeless cloud browser. The accessibility-tree representation Hermes uses to label @e1, @e2, @e3 is produced from the cloud browser's render, so existing prompts and skills keep working.

If editing the YAML by hand is inconvenient, the CLI form /browser connect "wss://browser.scrapeless.com/api/v2/browser?token=YOUR_SCRAPELESS_API_KEY&proxyCountry=US" does the same thing for the current session without persisting it.

3. Keep the API key out of the config file (recommended)

For shared repos or multi-user shells, keep the secret out of the YAML. Hermes config supports ${VAR} substitution; export the key once and reference it from the URL:

macOS / Linux (bash or zsh): add the export line to ~/.zshrc or ~/.bashrc, then reload the file in the current shell:

bash

# add this line to ~/.zshrc or ~/.bashrc
export SCRAPELESS_API_KEY="your_api_token_here"
# then reload the file in the current shell
source ~/.zshrc # or ~/.bashrc

Windows (PowerShell), persistent and user-scoped:

powershell

[Environment]::SetEnvironmentVariable("SCRAPELESS_API_KEY", "your_api_token_here", "User")

Then update the config to interpolate the variable:

yaml

browser:
  cdp_url: "wss://browser.scrapeless.com/api/v2/browser?token=${SCRAPELESS_API_KEY}&proxyCountry=US&sessionTTL=600"

4. Verify the connection

Restart Hermes so it picks up the new config, then ask the agent:

"Open https://example.com and tell me the H1 heading text."

A successful run returns "Example Domain". If the agent reports an ERR_TUNNEL_CONNECTION_FAILED, a 401, or a hang on browser_navigate, the most common causes are the API key, the proxy region, or the WSS URL pasted with a stray space.
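Before restarting Hermes, the common causes listed above can be checked offline. The following is a minimal sketch, not part of Hermes or Scrapeless: the function name check_cdp_url is hypothetical, and the ${VAR} expansion is approximated with Python's string.Template rather than Hermes' own substitution logic.

```python
import re
from string import Template
from urllib.parse import parse_qs, urlsplit


def check_cdp_url(raw_url: str, env: dict[str, str]) -> list[str]:
    """Return human-readable problems found in a cdp_url value (hypothetical helper)."""
    problems = []
    # Approximate ${VAR} expansion; names missing from env are left untouched.
    url = Template(raw_url).safe_substitute(env)
    if "${" in url:
        problems.append("unresolved ${VAR} placeholder (is the variable exported?)")
    if re.search(r"\s", url):
        problems.append("URL contains whitespace")
    parts = urlsplit(url.strip())
    if parts.scheme != "wss":
        problems.append("scheme should be wss")
    query = parse_qs(parts.query)
    if not query.get("token", [""])[0]:
        problems.append("token query parameter is missing or empty")
    country = query.get("proxyCountry", [None])[0]
    if country is not None and not re.fullmatch(r"[A-Z]{2}", country):
        problems.append("proxyCountry should be a two-letter uppercase code")
    return problems
```

An empty list means none of these checks failed; it does not prove the key itself is valid, which only a live connection can confirm.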

How you actually use this: prompt your agent

After the config-line change, you drive Scrapeless from Hermes by talking to the agent — not by writing CDP glue. The agent owns the discover → extract loop and picks the browser tools turn by turn. Hermes' multi-channel gateway means the same prompts work from Telegram, Discord, Slack, WhatsApp, Signal, email, or the local CLI.

Prompts you can paste

| You type | What the agent does |
|---|---|
| "Open https://news.ycombinator.com and return the top five stories with title, URL, author, score, and comment count as JSON." | browser_navigate → browser_snapshot → typed extraction. |
| "Compare the pricing pages of these three SaaS competitors and summarize the differences." | Multi-tab navigation, browser_get_images for plan tiers, LLM summary. |
| "Pull the homepage and pricing page from https://example.com as if I were in Tokyo." | Restart the session with proxyCountry=JP (see "Pin a region" below), then render. |
| "Watch this Greenhouse careers page and tell me which roles match staff engineer or infra." | Navigate, snapshot the listing block, filter rows by keyword, return structured rows. |
| "Take a full-page screenshot of https://example.com and analyze what's on it." | browser_navigate → browser_vision (captures the page and sends it to the multimodal model). |
| "Fill the contact form at <URL> with my name, email, and a short message, but stop before submit so I can review." | Snapshot the form, map prompt fields to @e1/@e2/…, browser_type, screenshot, halt at the submit ref. |
| "The extraction came back empty yesterday. Rerun with session recording enabled so I can replay it." | Reissue the same flow with sessionRecording=true on the WSS URL; replay link surfaces in the Scrapeless dashboard. |
| "Open the Amazon product page at <URL> from a US egress and return title, price, rating, review count." | Pinned-region session, snapshot, structured extract. |

Shaping prompts

| Phrasing | Effect |
|---|---|
| "Use a German egress." | Restart the cloud-browser session with proxyCountry=DE on the WSS URL. |
| "Keep the session warm for the next ten minutes." | Bump sessionTTL=600 so multi-step flows reuse the same browser. |
| "Enable session recording." | Append sessionRecording=true; the dashboard exposes a replayable video for the run. |
| "Return markdown, not raw HTML." | The agent feeds the snapshot through its extractor and returns the markdown view. |
| "Stop before the final submit." | Hermes' built-in pattern: drive the form, screenshot, halt at the submit ref. |

Steps 1–5 below are the under-the-hood reference. Read them once to see how the discover → extract pattern composes; then trust the agent to apply it to whatever request the operator hands over from chat.

Step 1 — Connect to Scrapeless Scraping Browser

The connection is the WSS URL from the install step. The Hermes browser tool dials it on first use and reuses the same socket for the lifetime of the session.

yaml

# ~/.hermes/config.yaml
browser:
  cdp_url: "wss://browser.scrapeless.com/api/v2/browser?token=${SCRAPELESS_API_KEY}&proxyCountry=US&sessionTTL=600"

Three query parameters do most of the work:

- token: the Scrapeless API key. Required.

- proxyCountry: the residential proxy country (ISO 3166-1 alpha-2, e.g. US, DE, JP, GB). Defaults to a global pool; pin it for geo-bound listings.

- sessionTTL: how long the cloud browser stays alive after the last command, in seconds. Range 60–900. Higher TTLs are right for multi-step flows; the default of 60 is right for one-shot extractions.
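For scripts that generate the config, the three parameters above can be assembled and range-checked in code. This is a minimal sketch: build_cdp_url is a hypothetical helper name, and the only facts it relies on are the endpoint, the parameter names, and the 60–900 second range stated above.

```python
from typing import Optional
from urllib.parse import urlencode

SCRAPELESS_WSS = "wss://browser.scrapeless.com/api/v2/browser"


def build_cdp_url(
    token: str,
    proxy_country: Optional[str] = None,
    session_ttl: Optional[int] = None,
) -> str:
    """Assemble the WSS URL from its query parameters (hypothetical helper)."""
    if not token:
        raise ValueError("token is required")
    params = {"token": token}
    if proxy_country is not None:
        # Country codes are two-letter and upper-case, e.g. US, DE, JP.
        params["proxyCountry"] = proxy_country.upper()
    if session_ttl is not None:
        if not 60 <= session_ttl <= 900:
            raise ValueError("sessionTTL must be between 60 and 900 seconds")
        params["sessionTTL"] = str(session_ttl)
    return f"{SCRAPELESS_WSS}?{urlencode(params)}"
```

Leaving proxy_country or session_ttl unset omits the parameter entirely, so the platform-side default applies.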

A transient os error 10054, ERR_TUNNEL_CONNECTION_FAILED, or 503 on the first dial can occur when anti-bot infrastructure resets new sessions before the browser fully spins up. Reissue the failing prompt to retry; for production workflows, the FAQ entry below covers an explicit retry pattern.

Step 2 — Discover with browser_navigate + browser_snapshot

Open the page and read it as an accessibility tree before extracting. The snapshot returns text labels for every interactive ref (@e1, @e2, @e3, …) and the surrounding text content — enough for the agent to pick the right element without guessing CSS selectors.

text

You: Open https://example.com/products and snapshot the page.
Agent: browser_navigate "https://example.com/products"
       browser_snapshot
       [returns accessibility tree with @e1 = search input, @e2 = sort dropdown,
        @e3..@e22 = product cards with title + price + rating refs]

browser_snapshot is the load-bearing call. It is what turns a CDP-style live page into something an LLM can reason about turn by turn. Skip it and the agent has to fall back to slicing raw HTML, which is more brittle and uses more tokens. The snapshot is the discover step in the discover → extract pattern; every extraction below assumes it ran first.

Step 3 — Extract with structured prompts

With the snapshot in hand, the extraction is a regular LLM tool-call: the agent reads the refs and surrounding text, picks the fields it needs, and returns a typed record. No CSS selectors, no JS evals — the snapshot already contains the data the model needs.

text

You: From the snapshot, return the top 10 products as JSON with title, price, rating, and product URL.
Agent: [returns JSON array with 10 rows; missing fields are null]

For non-trivial pages (multi-tab catalogs, infinite-scroll feeds, A/B-rendered variants), supplement the snapshot with browser_scroll to hydrate lazy-loaded panels, then re-snapshot. The cloud browser handles the JS rendering; Hermes handles the loop.
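If the JSON the agent returns feeds a downstream pipeline, it helps to coerce every row to the same shape so that missing fields are explicit nulls. A minimal sketch follows; the field names in FIELDS and the helper normalize_rows are assumptions chosen to mirror the prompt above, not a Hermes API.

```python
import json

# Assumed field names, mirroring the prompt: title, price, rating, product URL.
FIELDS = ("title", "price", "rating", "url")


def normalize_rows(raw_json: str, limit: int = 10) -> list[dict]:
    """Parse the agent's JSON reply and give every row the same keys."""
    rows = json.loads(raw_json)
    if not isinstance(rows, list):
        raise ValueError("expected a JSON array of product objects")
    cleaned = []
    for row in rows[:limit]:
        if not isinstance(row, dict):
            continue  # skip stray non-object entries
        # Missing fields become None; unexpected extra keys are dropped.
        cleaned.append({field: row.get(field) for field in FIELDS})
    return cleaned
```

Rows with a None in a required field are good candidates for the scroll-and-re-snapshot pass described above.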

Step 4 — Drive a multi-step interaction

The same browser tools handle form-fill, navigation chains, and human-in-the-loop reviews. The pattern: snapshot → identify ref → act → snapshot → next ref.

text

You: Open https://app.example.com/contact, fill name, email, and message,
     screenshot the form, and stop before submit so I can review.
Agent: browser_navigate "https://app.example.com/contact"
       browser_snapshot
       # @e1 [input] "Full name", @e2 [input] "Email",
       # @e3 [textarea] "Message", @e4 [button] "Submit"
       browser_type @e1 "Jane Doe"
       browser_type @e2 "jane@example.com"
       browser_type @e3 "Hello, I'd like to talk about ..."
       browser_vision # capture full-page render for review
       # halt: @e4 is not pressed until the human approves the captured page

Real input events fire on the cloud browser, so client-side validation runs exactly as it would for a human visitor. The stop-before-submit pattern keeps a human in the loop at the last step, which is the recommended default for any action with real-world consequences (job applications, vendor onboarding, payment forms).
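The snapshot → identify ref → act loop with a held-back submit can be sketched as a small planner. This is illustrative only: it assumes snapshot lines shaped like the transcript above (@e1 [input] "Full name"), which may not match the exact text Hermes emits, and plan_form_fill is a hypothetical name.

```python
import re

# Matches entries like: @e1 [input] "Full name"  (format assumed from the transcript)
REF_PATTERN = re.compile(r'(@e\d+)\s+\[(\w+)\]\s+"([^"]*)"')


def plan_form_fill(snapshot: str, values: dict[str, str]):
    """Return (browser_type steps, refs held back for human approval)."""
    steps, held = [], []
    for ref, role, label in REF_PATTERN.findall(snapshot):
        if role == "button":
            held.append(ref)  # never pressed automatically
        elif role in ("input", "textarea") and label in values:
            steps.append(("browser_type", ref, values[label]))
    return steps, held
```

Holding back every button, not only the one labeled "Submit", is a deliberately conservative choice for this sketch; a real flow might need to press intermediate buttons such as "Next".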

Step 5 — Pin a region and persist a session across turns

For any target where listings vary by egress region (Google SERPs, Amazon by-marketplace, hotel/flight booking, local-business directories), pin proxyCountry to the user's intended region. For multi-step flows that need warm cookies and scroll position across multiple agent turns (paginated SERPs, authenticated dashboards, multi-page forms), set sessionTTL higher and reuse the same sessionName.

yaml

# Tokyo egress, 15-minute warm session, replayable video
browser:
  cdp_url: "wss://browser.scrapeless.com/api/v2/browser?token=${SCRAPELESS_API_KEY}&proxyCountry=JP&sessionTTL=900&sessionName=tokyo-research&sessionRecording=true"

Switching regions mid-conversation is a /browser connect away: Hermes drops the current socket, dials the new URL, and the next browser_navigate runs through the new exit. Recording is the highest-leverage flag for an unattended pipeline: every run shows up in the Scrapeless dashboard as a replayable video, so when the agent reports an empty extraction the operator can see what the cloud browser actually rendered.
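Since /browser connect takes a full URL, switching region or turning on recording amounts to rewriting query parameters on the existing one. A minimal sketch, assuming a hypothetical helper with_params and a URL whose ${VAR} placeholders are already expanded (urlencode would percent-encode a literal ${...}):

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def with_params(cdp_url: str, **overrides: str) -> str:
    """Return cdp_url with the given query parameters set or replaced."""
    parts = urlsplit(cdp_url)
    params = dict(parse_qsl(parts.query, keep_blank_values=True))
    params.update(overrides)  # existing keys keep their position, new keys append
    return urlunsplit(parts._replace(query=urlencode(params)))
```

For example, with_params(url, proxyCountry="JP", sessionName="tokyo-research") yields a URL that can be passed to /browser connect for the Tokyo session shown above.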

What to expect when this runs against the live web

- Hydration timing varies by site. SPAs that hydrate content through a secondary XHR may need a browser_scroll or a brief wait before the snapshot reflects the final DOM. Re-snapshot once if a field is consistently null.

- Selector-free extraction is more resilient than CSS selectors but not immune to layout drift. The accessibility tree changes when sites add a new column or rename a button; re-prompt the agent to re-discover refs rather than encoding them in a saved skill.

- Anti-bot interstitials show up as a redirect in the snapshot. When a site front-loads a Cloudflare or Akamai challenge that the cloud browser cannot transparently complete, the snapshot reports the challenge page rather than the target. Widen the fingerprint or pin a different proxy region.

- browser_vision complements browser_snapshot. For visually complex pages (price tables embedded as images, charts, infographics), the vision tool is the right escape hatch: it sends a screenshot to the multimodal model rather than the accessibility text.

- Session recording is high-leverage. sessionRecording=true on the WSS URL turns "the agent did something weird" into a clickable video in the Scrapeless dashboard. Check the pricing page for whether your plan includes recording.
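The "re-snapshot once" advice from the first item can be expressed as a small control loop. This is a sketch under stated assumptions: snapshot, scroll, and extract are hypothetical callables standing in for browser_snapshot, browser_scroll, and the agent's extraction step, and extract_with_one_retry is not a Hermes function.

```python
from typing import Callable


def extract_with_one_retry(
    snapshot: Callable[[], str],
    scroll: Callable[[], None],
    extract: Callable[[str], dict],
    required: tuple[str, ...],
) -> dict:
    """Extract once; if a required field is None, scroll and try exactly once more."""
    record = extract(snapshot())
    if all(record.get(name) is not None for name in required):
        return record
    scroll()  # nudge lazy-loaded panels to hydrate
    return extract(snapshot())
```

Capping the loop at one retry keeps a permanently missing field from turning into an unbounded scroll loop; the second result is returned as-is, nulls included.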

FAQ

Do I need a residential proxy?

Yes, for any site with meaningful anti-bot protection, which covers most retailers, marketplaces, and SERP endpoints. The Scrapeless WSS endpoint routes through the residential pool by default; the proxyCountry query parameter pins the egress country.

The first connection returned ERR_TUNNEL_CONNECTION_FAILED or os error 10054. What now?

Both are transient session-spin-up errors and typically resolve on retry. Reissue the failing prompt; for production workflows that must not bounce on the first failure, wrap the failing prompt in a small retry loop with exponential backoff (2s, 5s, 15s).
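A retry loop with the delays named above might look like the following. It is a minimal sketch: retry_with_backoff and the action callable are hypothetical, and which exception types count as transient depends on how the failing prompt is invoked, so the default tuple here is an assumption.

```python
import time
from typing import Callable, Sequence, TypeVar

T = TypeVar("T")


def retry_with_backoff(
    action: Callable[[], T],
    delays: Sequence[float] = (2, 5, 15),
    retry_on: tuple[type[BaseException], ...] = (ConnectionError, TimeoutError),
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run action, sleeping through the delay schedule after each transient failure."""
    for delay in delays:
        try:
            return action()
        except retry_on:
            sleep(delay)
    return action()  # final attempt; any error now propagates to the caller
```

In Python, a connection reset (the condition behind os error 10054 on Windows) surfaces as ConnectionResetError, a subclass of ConnectionError, so the default retry_on covers it. The sleep parameter is injectable so the schedule can be tested without waiting.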

A site returns Access Denied. What now?

First, retry — anti-bot layers often clear after a session restart. If the page persistently blocks, change proxyCountry to a different region, reissue /browser connect to spin a fresh fingerprint, or contact Scrapeless support to confirm the block is at the platform level rather than at the account level.

Selectors keep breaking. How do I survive DOM rotation?

Use browser_snapshot rather than slicing raw HTML with CSS selectors. The accessibility-tree representation is more stable across layout drift, and the agent re-discovers refs each turn instead of relying on hard-coded paths.

How many concurrent workers per host?

Scrapeless does not publish a fixed per-host concurrency cap; rate-limiting is handled platform-side. For multi-host pipelines, run independent agent loops per host rather than concentrating on a single domain — and confirm your account tier's concurrency in the Scrapeless Dashboard before scaling out.
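Splitting work into independent per-host loops starts with bucketing the target URLs by hostname. A minimal sketch, with group_by_host as a hypothetical helper; how each bucket is then handed to an agent loop is left open.

```python
from collections import defaultdict
from urllib.parse import urlsplit


def group_by_host(urls: list[str]) -> dict[str, list[str]]:
    """Bucket URLs by hostname so each host gets its own work queue."""
    queues: dict[str, list[str]] = defaultdict(list)
    for url in urls:
        host = urlsplit(url).hostname
        if host:  # skip strings without a parseable host
            queues[host].append(url)
    return dict(queues)
```

Each resulting list can be processed sequentially by one agent loop, which keeps the load on any single domain at one request at a time.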

Can I use this without an AI agent?

Yes. The Scrapeless WSS endpoint is plain CDP — any Puppeteer or Playwright script connects with puppeteer.connect({ browserWSEndpoint: ... }) or chromium.connectOverCDP(...) and gets the same cloud browser. Hermes is the recommended path when chat-driven research or multi-channel reach matters; the CDP endpoint is the lower-level fallback.

Can I swap Hermes for another agent?

Yes. Any agent that supports a custom CDP endpoint (Browserbase-style integrations, Browser Use, custom Playwright/Puppeteer pipelines) connects to the same WSS endpoint. The integration surface is the protocol, not the client.

How do I keep cookies and login state across multiple agent turns?

Set sessionTTL to a longer value (300–900 seconds), give the session a stable sessionName, and avoid restarting the connection between calls. The cloud browser keeps the same browser profile, cookies, and scroll position warm across the lifetime of the session.

Where do I see what the cloud browser actually rendered?

Append sessionRecording=true to the WSS URL. Every run surfaces in the Scrapeless dashboard as a replayable video, so an empty extraction or a surprise interstitial is visible end to end without instrumenting the agent.

At Scrapeless, we only access publicly available data while strictly complying with applicable laws, regulations, and website privacy policies. The content in this blog is for demonstration purposes only and does not involve any illegal or infringing activities. We make no guarantees and disclaim all liability for the use of information from this blog or third-party links. Before engaging in any scraping activities, consult your legal advisor and review the target website's terms of service or obtain the necessary permissions.