LangChain Agents That See the Live Web: Building AI Data Pipelines with Scrapeless

Web Data Collection Specialist

Key Takeaways:

- First-party LangChain integration. The `langchain-scrapeless` package on PyPI ships five ready-to-use tools (`ScrapelessDeepSerpGoogleSearchTool`, `ScrapelessDeepSerpGoogleTrendsTool`, `ScrapelessUniversalScrapingTool`, `ScrapelessCrawlerCrawlTool`, and `ScrapelessCrawlerScrapeTool`) that drop straight into a LangChain or LangGraph agent. No subprocess plumbing, no custom CDP wiring.

- The pipeline is four moves: Discover → Render → Extract → Store. Search Google with the deep-SERP tool, render any URL with the universal scraping tool (Scraping Browser under the hood), extract typed records with `PydanticOutputParser`, and optionally embed into a vector store for downstream RAG.

- The agent decides when to scrape. LangChain's `create_agent` (LangGraph runtime under the hood) lets the LLM choose between answering from prior context and calling the Scrapeless tool. The cloud browser only spins up when fresh data is genuinely needed, which keeps cost and latency bounded on routine turns.

- Typed records, not raw HTML. Pairing `ScrapelessUniversalScrapingTool` (markdown response) with a Pydantic schema turns scraped pages into validated `Product` / `Article` / `JobListing` records that downstream code can rely on without extra parsing glue.

- Anti-detection cloud browser, residential proxies in 195+ countries. Scrapeless Scraping Browser handles JavaScript rendering, residential-proxy egress, and fingerprint randomization (UA, timezone, WebGL, canvas) on every session, so the agent stays focused on reasoning rather than evasion plumbing.

Introduction: AI data pipelines that see the live web

A bare LLM answers from training data. For most agentic workflows that actually ship — competitive price intelligence, lead research, market monitoring, structured news ingestion, RAG over the live web — the model needs to see the page right now. Training cutoffs, paywalls, lazy-loaded SPAs, and personalized rendering all push the answer into territory the LLM never saw, and the response either cites a stale number or politely declines.

LangChain plus a cloud browser is the standard answer. The model reasons; the browser fetches; the agent stitches the two together. The friction point most teams hit sits below the agent: residential proxies, JavaScript rendering, anti-detection fingerprinting, and session lifecycle each need solving before the agent can do anything useful. Direct Playwright over a residential VPN works for a single laptop run; it does not survive a production schedule.

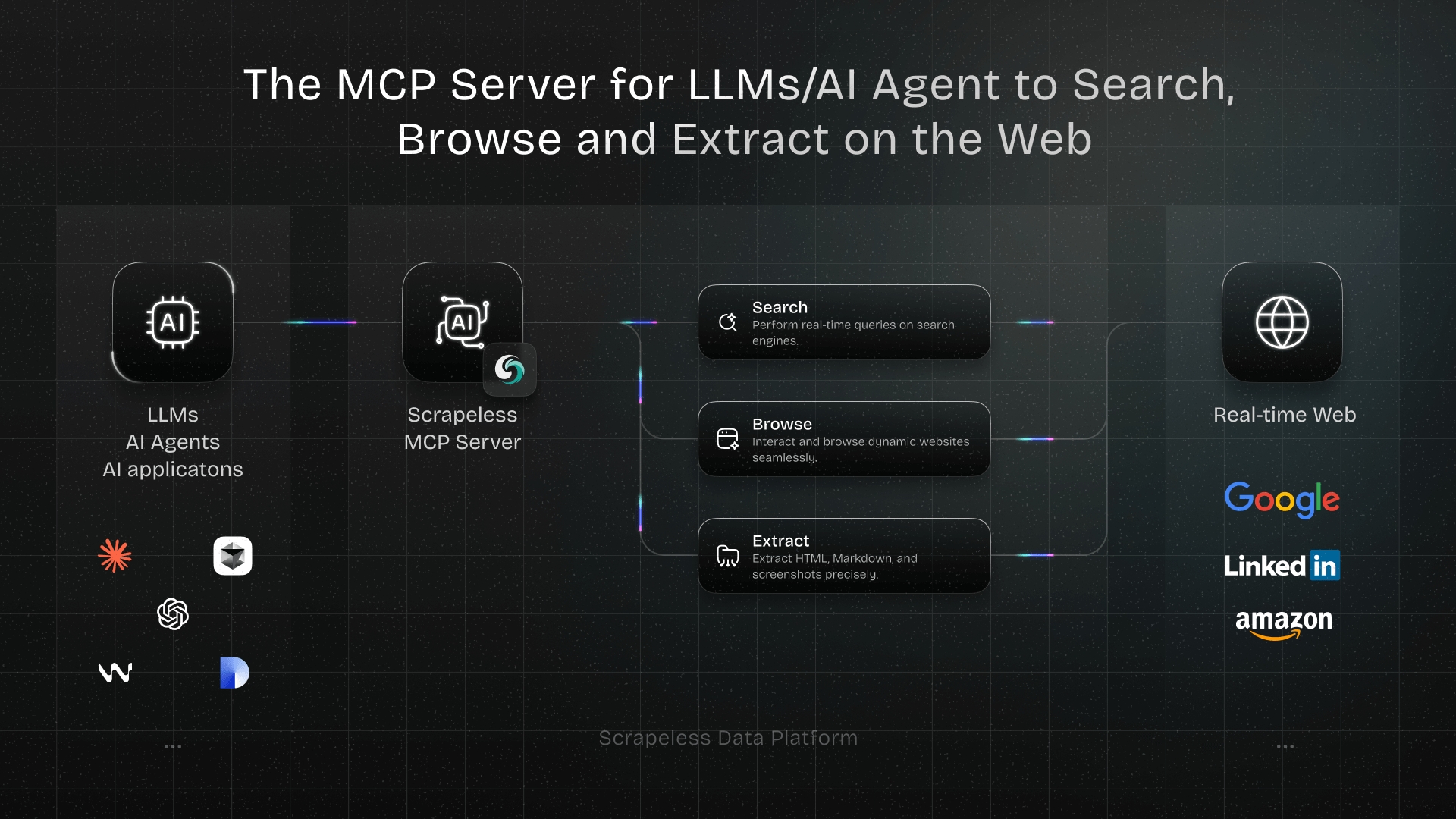

Scrapeless Scraping Browser handles those four concerns at the platform level, and the langchain-scrapeless PyPI package exposes them as native LangChain tools. This post walks through composing those tools into a four-step Discover → Render → Extract → Store AI data pipeline, with a competitive-research worked example, typed Pydantic output, bounded concurrency, and observability hooks. For the same primitive over a different protocol, see the MCP integration post.

What you can build

The five tools shipped by langchain-scrapeless cover the most common AI-data-pipeline patterns:

- Competitive price intelligence. Search a category, render the top retailer pages, extract a typed `Product` record with price, rating, and review count.

- SERP monitoring. Track keyword ranking and snippet drift across regions with `ScrapelessDeepSerpGoogleSearchTool` parameterized by `gl` and `hl`.

- Market-trend tracking. Pull `interest_over_time` and related queries with `ScrapelessDeepSerpGoogleTrendsTool` for category sizing (Trends endpoint access depends on your Scrapeless plan tier).

- Product-detail extraction at scale. Feed a list of URLs into `ScrapelessCrawlerScrapeTool` and get back markdown ready for an LLM extractor.

- Lead generation from directories. Crawl business listing sites with `ScrapelessCrawlerCrawlTool`, parse contact rows into typed records, dedupe by domain.

- Structured news ingestion for RAG. Render publisher pages to clean markdown, extract `Article` records, embed into a LangChain vector store, and query with retrieval-augmented chains.

All six pipelines compose the same primitives — search, render, extract, store — and the worked example below covers the full chain end to end.
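As a small illustration of the "dedupe by domain" step in the lead-generation pattern, the sketch below keeps the first record seen for each host. The record shape (a dict with a `website` key) and the helper name `dedupe_by_domain` are assumptions made for this example, not part of `langchain-scrapeless`, and the host normalization is deliberately naive (it only lowercases and strips a leading `www.`).

```python
from urllib.parse import urlparse


def dedupe_by_domain(rows: list[dict]) -> list[dict]:
    """Keep the first row per host; `website` is a hypothetical field name."""
    seen: set[str] = set()
    unique: list[dict] = []
    for row in rows:
        host = urlparse(row["website"]).netloc.lower()
        if host.startswith("www."):
            host = host[4:]
        # Skip rows with no parseable host and rows whose host was already seen.
        if host and host not in seen:
            seen.add(host)
            unique.append(row)
    return unique


rows = [
    {"name": "Acme", "website": "https://www.acme.example/contact"},
    {"name": "Acme Sales", "website": "https://acme.example/sales"},
    {"name": "Globex", "website": "https://globex.example/"},
]
print([r["name"] for r in dedupe_by_domain(rows)])
```

A production version would likely compare registrable domains rather than raw hosts, so that subdomains of the same company collapse together.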

Why Scrapeless Scraping Browser

Scrapeless Scraping Browser is a customizable, anti-detection cloud browser designed for web crawlers and AI agents. For LangChain agents specifically, it brings:

- Residential proxies in 195+ countries — geo-bound queries return the listings a local user would see, and rotation is automatic on every session.

- Cloud-side JavaScript rendering — full Chromium with the page hydrated before extraction, so SPAs, infinite-scroll feeds, and lazy-loaded panels are first-class targets.

- Anti-detection fingerprinting on every session — UA, timezone, language, screen resolution, WebGL, and canvas are randomized per session, with a custom-fingerprint API for fixed identities when consistency matters.

- Session persistence at the cloud-browser layer via `sessionTTL` (60–900s) and `sessionName`. These are available when driving the WSS endpoint directly; the `langchain-scrapeless` tool calls allocate a fresh session per `invoke`, which is the right default for a research pipeline.

- First-party LangChain integration: `pip install langchain-scrapeless` exposes the cloud browser as native LangChain tools; no subprocess wrappers, no CDP plumbing, no custom serializers.

Get your API key on the free plan at Scrapeless. The full integration is documented at github.com/scrapeless-ai/langchain-scrapeless.

Claim your free plan and start scraping:

Join the Scrapeless community to claim a $5-10 free plan and connect with fellow innovators:

Scrapeless Official Discord Community

Scrapeless Official Telegram Community

Prerequisites

- Python 3.10 or newer.

- A Scrapeless account and API key — sign up at Scrapeless and copy the key from Settings → API Key Management.

- A chat-model API key. The examples below use OpenAI (`OPENAI_API_KEY`); the same agent code works with `langchain-anthropic`, `langchain-google-genai`, `langchain-ollama`, or any LangChain chat model by swapping the `ChatOpenAI` line.

- Basic familiarity with `pip` and `venv`.

Install

The full setup is four sub-steps. Each one is independently verifiable, so you can pause and confirm before moving on.

1. Create a venv and install the packages

```bash
python -m venv .venv
source .venv/bin/activate   # Windows: .venv\Scripts\activate
pip install langchain langchain-scrapeless langchain-openai langgraph pydantic
```

`langchain-scrapeless` pulls in `langchain-core` and the Scrapeless Python SDK as transitive dependencies. The `langchain` meta-package provides `langchain.agents.create_agent`, the modern (non-deprecated) ReAct agent runtime; `langgraph` provides the underlying `CompiledStateGraph` runtime; `langchain-openai` is the chat-model provider used in the examples. Swap to `langchain-anthropic` or another provider if you prefer.

2. Configure your API keys

Export both keys for the current shell session:

```bash
export SCRAPELESS_API_KEY="your_api_token_here"
export OPENAI_API_KEY="your_openai_token_here"
```

For a permanent install, add the same lines to `~/.bashrc` / `~/.zshrc`, or use a `.env` loader (`python-dotenv`) and load the file at process start. The Scrapeless tools read `SCRAPELESS_API_KEY` from the environment automatically; you do not pass it as a constructor argument.
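Because the tools read the key from the environment rather than from a constructor argument, a missing variable only shows up at the first tool call. A minimal preflight sketch, assuming the two variable names used in this post (the helper `missing_keys` is hypothetical and uses only the standard library):

```python
import os

REQUIRED_KEYS = ("SCRAPELESS_API_KEY", "OPENAI_API_KEY")


def missing_keys(env=os.environ, required=REQUIRED_KEYS) -> list[str]:
    """Return the required variable names that are unset or blank in `env`."""
    return [key for key in required if not env.get(key, "").strip()]


missing = missing_keys()
print("missing:", ", ".join(missing) if missing else "none")
```

Running this at process start, before constructing any tool, turns a confusing mid-run failure into an immediate, readable message.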

3. Verify the install

A short smoke test that exercises the search tool and prints a one-line result. The Scrapeless API occasionally returns a transient 400 on the first call after a cold start; the test loops up to three times so a single retry rescues those cases:

```python
# verify.py
import time

from langchain_scrapeless import ScrapelessDeepSerpGoogleSearchTool

tool = ScrapelessDeepSerpGoogleSearchTool()

for attempt in range(3):
    try:
        result = tool.invoke({"q": "scrapeless scraping browser",
                              "hl": "en", "gl": "us"})
        print(str(result)[:300])
        break
    except ValueError as e:
        # The tool surfaces API errors (including transient 400s) as ValueError.
        print(f"transient (attempt {attempt + 1}): {e}")
        time.sleep(3)
```

Run it with `python verify.py`. A successful run prints a string of search results within a few seconds. If all three attempts raise `ValueError` with a message containing `failed with status 401`, the API key is missing or wrong; re-check `echo $SCRAPELESS_API_KEY` in the same shell. Persistent 400s after three attempts indicate the account may not have access to the requested endpoint; see the FAQ on transient errors.
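The distinction drawn above (a 401 means a bad key, a 400 or 503 may be transient) can be made explicit so that a script stops immediately on an auth failure instead of burning retries. The sketch below is a standard-library illustration only: it assumes the error message contains the text `failed with status <code>`, as in the 401 example above, and the helper name `classify_error` is hypothetical. Adjust the pattern if the real message differs.

```python
import re

# Assumption: the ValueError message includes "failed with status <code>".
_STATUS_RE = re.compile(r"failed with status (\d{3})")

RETRYABLE = {400, 503}  # described in this post as possibly transient
FATAL = {401}           # missing or wrong API key; retrying will not help


def classify_error(message: str) -> str:
    """Return 'fatal', 'retryable', or 'unknown' for a tool error message."""
    match = _STATUS_RE.search(message)
    if not match:
        return "unknown"
    status = int(match.group(1))
    if status in FATAL:
        return "fatal"
    if status in RETRYABLE:
        return "retryable"
    return "unknown"


print(classify_error("request failed with status 401"))
print(classify_error("request failed with status 503"))
```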

4. (Optional) Pin the dependency versions

For reproducible builds, pin the versions you tested against. The combination resolved by pip install langchain langchain-scrapeless langchain-openai langgraph pydantic on a clean Python 3.12 environment is:

```
langchain==1.2.17
langchain-core==1.3.2
langchain-openai==1.2.1
langchain-scrapeless==0.1.3
langgraph==1.1.10
pydantic==2.13.3
scrapeless==1.1.1
```

Note: `langchain-scrapeless` 0.1.3's package metadata declares `langchain-core <0.4.0`, but pip resolves to `langchain-core` 1.3.2 because `langchain-openai` requires it; the runtime import still works. To avoid the resolver warning entirely, install `langchain-scrapeless` and only the chat-model provider you need (e.g. drop `langchain-openai` and pass a `ChatAnthropic` instance instead). LangGraph 1.0 went GA in October 2025; the 1.1.x series is the current stable.
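To confirm that an environment still matches the pins, one option is to compare them against the installed distributions with `importlib.metadata` from the standard library. This is a sketch; the helper names `installed_version` and `pin_drift` are hypothetical, and the `PINS` mapping simply repeats the versions listed above.

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Optional

PINS = {
    "langchain": "1.2.17",
    "langchain-core": "1.3.2",
    "langchain-openai": "1.2.1",
    "langchain-scrapeless": "0.1.3",
    "langgraph": "1.1.10",
    "pydantic": "2.13.3",
    "scrapeless": "1.1.1",
}


def installed_version(package: str) -> Optional[str]:
    """Return the installed version of `package`, or None if it is absent."""
    try:
        return version(package)
    except PackageNotFoundError:
        return None


def pin_drift(pins: dict, lookup=installed_version) -> dict:
    """Map each package that differs from its pin to (pinned, installed)."""
    drift = {}
    for package, pinned in pins.items():
        found = lookup(package)
        if found != pinned:
            drift[package] = (pinned, found)
    return drift


for package, (pinned, found) in pin_drift(PINS).items():
    print(f"{package}: pinned {pinned}, installed {found or 'not installed'}")
```

The script prints nothing when every pin matches, which makes it easy to use as a CI check.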

How you actually use this: prompt your agent

After install, you build pipelines by talking to the agent — not by re-deriving CSS selectors every time the target site rotates its DOM. The agent owns the discover → render → extract loop and the LLM picks the right tool for each turn.

Prompts you can paste

| You type | What the agent does |
|---|---|
| "Find the top 5 portable espresso makers and return name, price, rating as JSON." | Searches Google, renders the top results, extracts a typed `Product[]`. |
| "Give me the current Google Trends interest over the last 12 months for `vector database` in the US." | Calls `ScrapelessDeepSerpGoogleTrendsTool` with `data_type="interest_over_time"` (requires plan-tier access to the Trends endpoint). |
| "Crawl `https://example.com/docs` to depth 2 and return markdown for every page." | Calls `ScrapelessCrawlerCrawlTool` with `limit=...`. |
| "Render `https://www.amazon.com/dp/B08N5WRWNW` as markdown." | Calls `ScrapelessUniversalScrapingTool` with `response_type="markdown"`. |
| "Search for `series A startups in fintech 2026`, list the companies and their funding round size." | Search → render → typed extract chained automatically. |
| "Pull the homepage and pricing page from these three SaaS competitors and summarize differences." | Multi-URL `ScrapelessCrawlerScrapeTool` → LLM summary. |
| "Watch this Greenhouse careers page and tell me which roles match `staff engineer` or `infra`." | Render → keyword filter → JSON rows. |
| "What are the top 10 organic results for `langchain scrapeless tutorial` from a UK egress?" | `ScrapelessDeepSerpGoogleSearchTool` with `gl="uk"`, `hl="en"`. |

Worked example

You type:

Find the top 3 portable espresso makers for travel under $150. For each one, return name, price, average rating, review count, and three key features. Pin the search to a US egress.

The agent's plan (in plain English):

- Call `ScrapelessDeepSerpGoogleSearchTool` with `q="best portable espresso makers under 150"`, `gl="us"`, `hl="en"`, `num=5` to get organic result URLs.

- For the top 3 result URLs, call `ScrapelessUniversalScrapingTool` with `response_type="markdown"` to render each page as clean markdown.

- Pass each rendered page to the LLM with a `PydanticOutputParser` bound to a `Product` schema; reject any page where the parser fails to extract `name` and `price`.

- Aggregate the three `Product` records into a JSON array and return.

What you get back:

```json
[
  {
    "name": "Wacaco Nanopresso",
    "price": 79.95,
    "rating": 4.7,
    "review_count": 12483,
    "key_features": [
      "Manual hand-pump operation",
      "Up to 18 bars of extraction pressure",
      "Compatible with ground coffee or NS capsules via adapter"
    ],
    "url": "https://example.com/p/wacaco-nanopresso"
  },
  {
    "name": "Flair NEO Flex",
    "price": 119.00,
    "rating": 4.5,
    "review_count": 2104,
    "key_features": [
      "Lever-driven, no electricity required",
      "Espresso-grade pressure with bottomless portafilter",
      "Detachable for travel"
    ],
    "url": "https://example.com/p/flair-neo-flex"
  },
  {
    "name": "Outin Nano",
    "price": 129.99,
    "rating": 4.6,
    "review_count": 5871,
    "key_features": [
      "Built-in heating element",
      "Self-cleaning cycle",
      "USB-C charging, ~3-min heat-up"
    ],
    "url": "https://example.com/p/outin-nano"
  }
]
```

The schema reflects exactly what the Step 4 parser emits. Field values are illustrative.

Shaping prompts

| Phrasing | Effect |
|---|---|
| "Use a German egress (`gl=de`)." | Pins the search-tool proxy region; results return what a Berlin user would see. |
| "Fetch as markdown." | `response_type="markdown"` on the universal scraping tool: cheaper LLM context, more selector-stable than HTML. |
| "Limit the crawl to 25 pages." | `limit=25` on `ScrapelessCrawlerCrawlTool`. |
| "Skip pages that don't have a price." | Parser returns `None` for missing fields; the agent filters. |
| "Run three URLs in parallel." | Hand-off to the bounded-concurrency pattern in Step 6 below. |

Steps 1–6 below are the under-the-hood reference. Read them once to see how the discover → render → extract → store pattern composes; then trust the agent to apply it to whatever query the operator hands over.

Architecture

```
┌──────────────────────────────────────────────────────────────────────┐
│  LangChain create_agent (LangGraph runtime)                          │
│                                                                      │
│  ┌───────────────────────┐     ┌────────────────────────────────┐    │
│  │ Chat model            │ ──► │ Tools (langchain-scrapeless)   │    │
│  │ (OpenAI / Anthropic   │     │  • DeepSerpGoogleSearch        │    │
│  │  / Gemini / Ollama)   │ ◄── │  • UniversalScraping           │    │
│  └───────────────────────┘     │  • CrawlerCrawl                │    │
│              ▲                 │  • CrawlerScrape               │    │
│              │                 │  • DeepSerpGoogleTrends        │    │
│              │                 └──────────────┬─────────────────┘    │
│              │                                │                      │
│              │  PydanticOutputParser          │                      │
│              │  (typed records)               ▼                      │
│              │               ┌──────────────────────────────────┐    │
│              │               │ Scrapeless cloud browser         │    │
│              │               │  • residential proxies (195+)    │    │
│              │               │  • anti-detection fingerprint    │    │
│              │               │  • Chromium JS rendering         │    │
│              │               │  • session TTL 60–900s           │    │
│              │               └──────────────────────────────────┘    │
│              │                                │                      │
│              └────────────────────────────────┘                      │
│                typed records flow back to the agent                  │
└──────────────────────────────────────────────────────────────────────┘
                                    │
                                    ▼ (optional)
                      ┌───────────────────────────┐
                      │ Vector store (Chroma /    │
                      │ PGVector / pgvector)      │
                      └───────────────────────────┘
```

Three layers, clean separation: the LLM reasons over the conversation, the `langchain-scrapeless` tools wrap the cloud browser through native LangChain interfaces, and the cloud browser handles every concern that is not reasoning. Each layer can be swapped (chat model, prompt, even the underlying tool) without rewriting the others.
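One way to picture that separation is as a pipeline whose stages are plain callables, so any stage can be replaced without touching the rest. The sketch below is an illustration in standard-library Python, not the agent runtime itself: `run_pipeline` and the stage type aliases are hypothetical names, and the lambdas are stubs standing in for the Scrapeless tools, the LLM extractor, and a vector store.

```python
from typing import Callable, Optional

# Each stage is just a callable, so any one can be swapped independently.
Discover = Callable[[str], list]            # query -> list of URLs
Render = Callable[[str], str]               # URL -> markdown
Extract = Callable[[str, str], Optional[dict]]  # (URL, markdown) -> record or None
Store = Callable[[list], None]              # records -> side effect


def run_pipeline(query: str, discover: Discover, render: Render,
                 extract: Extract, store: Optional[Store] = None) -> list:
    """Discover -> Render -> Extract -> (optional) Store, in that order."""
    records = []
    for url in discover(query):
        record = extract(url, render(url))
        if record is not None:
            records.append(record)
    if store is not None:
        store(records)
    return records


# Stub stages; real code would call the tools and the extract chain here.
saved: list = []
result = run_pipeline(
    "portable espresso makers",
    discover=lambda q: ["https://example.com/p/a", "https://example.com/p/b"],
    render=lambda url: f"# Page for {url}",
    extract=lambda url, md: {"url": url, "title": md.lstrip("# ")},
    store=saved.extend,
)
print(result)
```

Passing `store=None` gives the three-stage variant, which matches the point that the vector store is optional.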

Step 1 — Define the typed output schema

Pydantic is the load-bearing primitive that turns scraped markdown into something downstream code can rely on. Define the target record once and bind it to the LLM extractor in Step 4.

```python
# schema.py
from typing import Optional

from pydantic import BaseModel, Field, HttpUrl


class Product(BaseModel):
    # Required: the description gives the extractor a fallback so it never returns null.
    name: str = Field(
        ...,
        description="Product name. Always required; use the page title or H1 if no clear product name.")
    # Optional fields default to None so sparse pages still validate.
    price: Optional[float] = Field(None, description="Numeric price in USD; null if absent")
    rating: Optional[float] = Field(None, description="Average rating, 0–5; null if absent")
    review_count: Optional[int] = Field(None, description="Number of reviews; null if absent")
    key_features: list[str] = Field(default_factory=list, description="3–5 short feature bullets")
    url: HttpUrl = Field(..., description="Canonical product URL")
```

Mark every field that may be absent as `Optional` and default lists to empty. Anti-bot interstitials, regional layout differences, and lazy-hydrated DOM nodes mean pages routinely omit one or two fields, and a non-optional schema rejects rows that would otherwise be useful. Keep `name` required and give it a fallback in its description (page title, H1) so the extractor never returns null for the required field; that single hint lets the schema absorb noisy pages without raising.

Step 2 — Discover with ScrapelessDeepSerpGoogleSearchTool

The deep-SERP tool returns organic Google results for a query, parameterized by language (hl), country (gl), and result count (num). It is the discovery primitive — search broadens the universe of URLs before you commit any per-page render budget.

```python
# discover.py
from langchain_scrapeless import ScrapelessDeepSerpGoogleSearchTool

search = ScrapelessDeepSerpGoogleSearchTool()

results = search.invoke({
    "q": "best portable espresso makers 2026 under 150",
    "hl": "en",   # result language
    "gl": "us",   # egress country
    "num": 5,     # number of results
})
print(results)
```

`hl` controls the result language and `gl` controls the egress country; they are the regional levers. For SERP monitoring across regions, run the same query with different `gl` values (`us`, `de`, `jp`, `br`) and diff the result lists. Transient `ValueError` (HTTP 400/503 wrapped by the tool) or `TimeoutError` responses are normal at the high end of query volume; wrap the call with the retry decorator from Step 6 before scaling out.
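The "diff the result lists" step can be sketched as follows. This post does not show the exact shape of the search tool's response, so the example assumes each region's response has already been reduced to an ordered list of URLs; the helper name `diff_serp` and the sample URLs are hypothetical.

```python
def diff_serp(by_region: dict, baseline: str) -> dict:
    """Compare each region's ranked URL list against the baseline region."""
    base = by_region[baseline]
    report = {}
    for region, urls in by_region.items():
        if region == baseline:
            continue
        report[region] = {
            # URLs that rank in this region but not in the baseline.
            "only_in_region": [u for u in urls if u not in base],
            # URLs that rank in the baseline but not in this region.
            "missing_from_region": [u for u in base if u not in urls],
            # URLs present in both lists at a different position.
            "rank_changed": [u for u in urls
                             if u in base and base.index(u) != urls.index(u)],
        }
    return report


serp = {
    "us": ["https://a.example", "https://b.example", "https://c.example"],
    "de": ["https://b.example", "https://a.example", "https://d.example"],
}
print(diff_serp(serp, baseline="us"))
```

Storing one such report per day gives a simple record of ranking drift per region.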

Step 3 — Render with ScrapelessUniversalScrapingTool

The universal scraping tool fronts Scrapeless Scraping Browser. It accepts a URL and returns the rendered page as markdown (or HTML, or screenshot). Markdown is the cheapest format to feed into an LLM extractor — it strips ads, navigation chrome, and inline styles, leaving the content the page is actually about.

```python
# render.py
from langchain_scrapeless import ScrapelessUniversalScrapingTool

scrape = ScrapelessUniversalScrapingTool()

markdown = scrape.invoke({
    "url": "https://example.com/p/wacaco-nanopresso",
    "response_type": "markdown",
})
print(markdown[:600])
```

Each `invoke` allocates a fresh cloud-browser session, which is the right default for a research pipeline: fresh sessions per URL are simpler and more resilient to per-session anti-bot state. (Cross-call session reuse via `sessionName` is a CDP-level feature; if your workflow needs warm cookies and login state across pages, drive the cloud browser directly via the WSS endpoint rather than through this LangChain tool.) The tool also accepts `response_type="html"` when you need to run your own selectors, `response_type="plaintext"` for the cheapest LLM context, or `response_type="png"` / `"jpeg"` for visual-regression pipelines.

Step 4 — Extract with PydanticOutputParser

The schema bound at Step 1 plugs straight into a LangChain Expression Language (LCEL) chain that takes rendered markdown and returns a typed Product. The parser injects the JSON schema into the prompt and validates the LLM's response against it.

```python
# extract.py
from langchain_core.output_parsers import PydanticOutputParser
from langchain_core.prompts import ChatPromptTemplate
from langchain_openai import ChatOpenAI

from schema import Product

parser = PydanticOutputParser(pydantic_object=Product)

prompt = ChatPromptTemplate.from_messages([
    ("system",
     "You extract product records from rendered web pages.\n"
     "Output strictly matches this schema:\n{format_instructions}"),
    ("human",
     "Source URL: {url}\n\nRendered markdown:\n{markdown}\n\n"
     "Return one Product record. The `name` field is required; use the page "
     "title or H1 if no clear product name. Set ONLY the optional fields "
     "(price, rating, review_count) to null when absent."),
]).partial(format_instructions=parser.get_format_instructions())

llm = ChatOpenAI(model="gpt-4o-mini", temperature=0)

# LCEL chain: prompt -> model -> validated Product
extract_chain = prompt | llm | parser

product = extract_chain.invoke({"url": "https://example.com/p/wacaco-nanopresso",
                                "markdown": "<rendered markdown from Step 3>"})
print(product.model_dump())
```

Parser failures are rare when three things hold: (1) every uncertain field is marked `Optional[...]`, (2) the prompt explicitly tells the model that only the optional fields may be null, and (3) every required field has a fallback in its description (e.g. `name` uses the page title or H1). With those three in place, the schema's nullable contract handles missing optional fields, and the prompt instruction keeps the LLM from nulling required fields on noisy pages, so the chain runs cleanly without any retry wrapper.
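Because missing optional fields come back as `None` instead of raising, rules such as "skip pages that don't have a price" are applied downstream of the parser. A minimal sketch, assuming records have been converted to plain dicts (for example with `model_dump()`); the helper name `keep_priced` and the sample rows are illustrative only.

```python
def keep_priced(records: list) -> list:
    """Drop records whose `price` is missing or None; a price of 0.0 is kept."""
    return [r for r in records if r.get("price") is not None]


records = [
    {"name": "Wacaco Nanopresso", "price": 79.95},
    {"name": "Landing page with no price", "price": None},
    {"name": "Outin Nano", "price": 129.99},
]
print([r["name"] for r in keep_priced(records)])
```

Testing against `None` rather than truthiness matters here: a legitimately free item with `price` equal to `0.0` should survive the filter.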

Step 5 — Compose into a create_agent

The agent ties the three tools together and lets the LLM decide which one to call for any given user message. langchain.agents.create_agent is the canonical runtime as of langchain 1.2 (it replaces the deprecated langgraph.prebuilt.create_react_agent and uses the same LangGraph state graph under the hood).

```python
# agent.py
from langchain.agents import create_agent
from langchain_core.tools import tool
from langchain_openai import ChatOpenAI
from langchain_scrapeless import (
    ScrapelessDeepSerpGoogleSearchTool,
    ScrapelessUniversalScrapingTool,
    ScrapelessCrawlerCrawlTool,
)
from tenacity import (retry, stop_after_attempt,
                      wait_exponential, retry_if_exception_type)

# Underlying first-party tools
_search = ScrapelessDeepSerpGoogleSearchTool()
_scrape = ScrapelessUniversalScrapingTool()
_crawl = ScrapelessCrawlerCrawlTool()

# The retry decorator the agent will see on every tool call.
# Scrapeless tools surface transient API errors as ValueError, so that's the filter.
_retry = retry(
    stop=stop_after_attempt(3),
    wait=wait_exponential(multiplier=1, min=2, max=15),
    retry=retry_if_exception_type(ValueError),
)


def _check(payload: str) -> str:
    # The cloud browser sometimes returns HTTP 200 with an embedded ERR_ JSON.
    # Surface it as ValueError so the @_retry decorator above kicks in.
    if isinstance(payload, str) and payload.startswith('{"statusCode"') and "ERR_" in payload:
        raise ValueError(f"Cloud-browser error: {payload[:200]}")
    return payload


@tool
@_retry
def google_search(q: str, hl: str = "en", gl: str = "us", num: int = 5) -> str:
    """Search Google and return the top organic results as JSON."""
    return _check(str(_search.invoke({"q": q, "hl": hl, "gl": gl, "num": num})))


@tool
@_retry
def render_page(url: str) -> str:
    """Render a URL with the Scrapeless cloud browser and return clean markdown."""
    return _check(_scrape.invoke({"url": url, "response_type": "markdown"}))


@tool
@_retry
def crawl_site(url: str, limit: int = 10) -> str:
    """Crawl a site to a bounded page count, returning markdown for each page."""
    return _check(str(_crawl.invoke({"url": url, "limit": limit})))


llm = ChatOpenAI(model="gpt-4o-mini", temperature=0)

system_prompt = (
    "You are a research agent that builds typed product datasets. "
    "Given a category query, you: "
    "(1) call google_search for the top organic URLs, "
    "(2) call render_page with each promising URL, "
    "(3) extract a Product record per page using the schema, "
    "(4) return a JSON array of records. "
    "Pin gl='us' and hl='en' unless the user asks otherwise. "
    "If a page lacks a price, omit the row from the final array."
)

agent = create_agent(llm,
                     [google_search, render_page, crawl_site],
                     system_prompt=system_prompt)

# Stream the full message state after every step.
for chunk in agent.stream(
    {"messages": [("human",
                   "Find the top 3 portable espresso makers under $150 "
                   "and return name, price, rating, review_count, key_features, url.")]},
    stream_mode="values",
):
    chunk["messages"][-1].pretty_print()
```

The `@tool` + `@retry` decorator stack is the load-bearing piece for production. The `langchain-scrapeless` tools wrap underlying `ScrapelessError`s as `ValueError` before raising, so transient 400/503 responses inside the agent's tool-call loop bubble up as `ValueError`. Without retry, a single transient failure crashes the whole agent run; with the decorator stack, each tool call is attempted up to three times with exponential backoff before giving up. (The langchain-core `Runnable.with_retry()` returns a `RunnableRetry`, which `create_agent` does not accept; the `@tool`-decorated function above is the path that produces a real `StructuredTool` inside the retry shell.)

agent.stream(..., stream_mode="values") emits the full message state on every step, so the operator can watch the agent's tool calls and intermediate reasoning in real time. For a single final answer, swap to agent.invoke(...). To pipe the typed Product[] from Step 4 through the agent, expose the extract chain as another @tool function and add it to the list — the agent will call it after each render. The langchain-scrapeless README still demonstrates from langgraph.prebuilt import create_react_agent; that path works but emits a LangGraphDeprecatedSinceV10 warning, so the modern import above is the recommended path.

Step 6 — Production hardening

A research script that works on three URLs in a notebook does not survive thirty thousand. Four hardening patterns turn the pipeline above into something a scheduler can run unattended.

Bounded concurrency

```python
# concurrent_render.py
import asyncio

from langchain_scrapeless import ScrapelessUniversalScrapingTool

scrape = ScrapelessUniversalScrapingTool()

# One shared semaphore: at most 3 renders in flight across the whole batch.
SEM = asyncio.Semaphore(3)


async def render(url: str) -> str:
    async with SEM:
        return await scrape.ainvoke({"url": url, "response_type": "markdown"})


async def render_all(urls: list[str]) -> list[str]:
    return await asyncio.gather(*(render(u) for u in urls))
```

Three concurrent renders per host is the sweet spot: high enough to amortize per-session warm-up cost, low enough to stay below most sites' per-IP rate limits. Cap at the host level, not globally; ten different hosts at three workers each is fine, ten workers all hitting the same retailer is not. The single semaphore above is a global cap, which equals the per-host cap only when every URL in the batch targets the same host; for mixed batches, keep one semaphore per host.
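A per-host cap can be sketched by keying semaphores on the URL's host. The example below uses only the standard library and a stand-in coroutine (`fake_render`) in place of `scrape.ainvoke(...)`, so it runs without network access; `render_all_per_host` and `PER_HOST_LIMIT` are hypothetical names, not part of `langchain-scrapeless`.

```python
import asyncio
from collections import defaultdict
from urllib.parse import urlparse

PER_HOST_LIMIT = 3


async def render_all_per_host(urls, render_one, limit=PER_HOST_LIMIT):
    """Run `render_one(url)` for every URL, capping in-flight calls per host."""
    # One semaphore per host, created lazily inside the running event loop.
    semaphores = defaultdict(lambda: asyncio.Semaphore(limit))

    async def guarded(url):
        async with semaphores[urlparse(url).netloc]:
            return await render_one(url)

    return await asyncio.gather(*(guarded(u) for u in urls))


# Stand-in for the real render call; records peak concurrency per host.
in_flight = defaultdict(int)
peak = defaultdict(int)


async def fake_render(url):
    host = urlparse(url).netloc
    in_flight[host] += 1
    peak[host] = max(peak[host], in_flight[host])
    await asyncio.sleep(0.01)
    in_flight[host] -= 1
    return f"markdown for {url}"


urls = ([f"https://shop-a.example/p/{i}" for i in range(8)]
        + [f"https://shop-b.example/p/{i}" for i in range(2)])
pages = asyncio.run(render_all_per_host(urls, fake_render))
print(dict(peak))
```

In real use, `render_one` would be a small coroutine wrapping `scrape.ainvoke({"url": url, "response_type": "markdown"})`; results come back in the same order as the input URLs because `asyncio.gather` preserves order.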

Retry on transient errors

```python
# retry.py
from tenacity import retry, stop_after_attempt, wait_exponential, retry_if_exception_type

from langchain_scrapeless import ScrapelessUniversalScrapingTool

scrape = ScrapelessUniversalScrapingTool()


def _raise_on_embedded_error(payload: str) -> str:
    # Scrapeless sometimes returns HTTP 200 with a JSON body describing an
    # internal browser-side error (tunnel reset, ERR_CONNECTION_RESET, …).
    # The tool does not raise on that; surface it as ValueError so retry kicks in.
    if payload.startswith('{"statusCode"') and "ERR_" in payload:
        raise ValueError(f"Cloud-browser error: {payload[:200]}")
    return payload


@retry(
    stop=stop_after_attempt(4),
    wait=wait_exponential(multiplier=1, min=2, max=20),
    retry=retry_if_exception_type((ValueError, TimeoutError)),
)
def render_with_retry(url: str) -> str:
    return _raise_on_embedded_error(
        scrape.invoke({"url": url, "response_type": "markdown"}))
```

The Scraping Browser session occasionally fails in two distinct shapes: an HTTP-layer 400/503 (the `langchain-scrapeless` wrapper raises this as `ValueError`) and an HTTP 200 with a JSON body describing a browser-side error such as `ERR_TUNNEL_CONNECTION_FAILED` (the wrapper does not raise; the error JSON comes back as a string). The `_raise_on_embedded_error` guard above catches the second shape and converts it into a `ValueError` so the same retry policy applies. With both shapes covered, exponential backoff with four attempts catches the high-90s percentile without burying genuine failures (anti-bot blocks, 404s) under retries. For agent-driven calls, use the `@tool` + `@retry` decorator stack from Step 5; the wrapper functions there should apply the same `_raise_on_embedded_error` check before returning.

Observability with LangSmith

```bash
export LANGCHAIN_TRACING_V2=true
export LANGCHAIN_API_KEY="your_langsmith_key"
export LANGCHAIN_PROJECT="scrapeless-research-agent"
```

With those three env vars set, every tool call, every LLM call, and every parser failure shows up in LangSmith with timing, cost, and the exact prompt the model saw. For a production run, this is the single highest-leverage change: it turns "the agent did something weird" into a clickable trace.

Persist into a vector store

```python
# embed.py
# Needs the Chroma integration in addition to the packages from the Install step:
#   pip install langchain-chroma
from langchain_chroma import Chroma
from langchain_core.documents import Document
from langchain_openai import OpenAIEmbeddings

# `products` is the list of validated Product records produced by the Step 4 chain.
docs = [Document(page_content=p.model_dump_json(), metadata={"url": str(p.url)})
        for p in products]

vs = Chroma.from_documents(docs, OpenAIEmbeddings(), persist_directory=".chroma")
```

The vector store is the optional fourth stage of the pipeline. It pays off when the agent is a long-running RAG service that answers downstream questions over the dataset; it is overkill for a one-shot research script. Pick the store based on operations preference: `langchain_chroma` for local files, `langchain_postgres` for a managed Postgres + pgvector, `langchain_pinecone` for a hosted vector DB.

What you get back

```json
[
  {
    "name": "Wacaco Nanopresso",
    "price": 79.95,
    "rating": 4.7,
    "review_count": 12483,
    "key_features": [
      "Manual hand-pump operation",
      "Up to 18 bars of extraction pressure",
      "Compatible with ground coffee or NS capsules via adapter"
    ],
    "url": "https://example.com/p/wacaco-nanopresso"
  },
  {
    "name": "Flair NEO Flex",
    "price": 119.00,
    "rating": 4.5,
    "review_count": 2104,
    "key_features": [
      "Lever-driven, no electricity required",
      "Espresso-grade pressure with bottomless portafilter",
      "Detachable for travel"
    ],
    "url": "https://example.com/p/flair-neo-flex"
  },
  {
    "name": "Outin Nano",
    "price": 129.99,
    "rating": 4.6,
    "review_count": 5871,
    "key_features": [
      "Built-in heating element",
      "Self-cleaning cycle",
      "USB-C charging, ~3-min heat-up"
    ],
    "url": "https://example.com/p/outin-nano"
  }
]
```

The schema reflects exactly what the Step 4 parser emits. Field values are illustrative samples.

A few honest observations on what to expect when this runs against the live web:

- Hydration timing varies by site. The universal scraping tool waits for `domcontentloaded` by default; for SPAs that hydrate prices through a second XHR, the markdown may arrive before the price renders. Re-render once with a brief delay or fall back to `response_type="html"` and a custom selector if the field is consistently null.

- Optional fields stay optional. Some product pages omit explicit ratings or review counts, especially on direct-to-consumer sites. Treat `null` values as informative rather than as a failure mode and filter downstream.

- LLM extraction dominates latency. End-to-end the pipeline is roughly 1–3 seconds for the search call, 2–4 seconds per page render, and 3–5 seconds per LLM extraction. Concurrency at the render and extract stages is the biggest lever.

- Anti-bot interstitials surface as `ValueError`. When a site front-loads a Cloudflare or Akamai challenge that the cloud browser cannot transparently complete, the `langchain-scrapeless` wrapper raises `ValueError` rather than silently returning a placeholder page. The retry decorator catches the transient cases; the persistent cases are best handled by widening the fingerprint or pinning a different proxy region.

- The vector-store stage is optional. For a research pipeline that returns typed records to a downstream consumer, skip it entirely. Add it when the same dataset will answer multiple downstream questions over time.
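The latency figures above can be turned into a rough budget. The sketch below is back-of-the-envelope arithmetic, not a benchmark: it assumes render and extract run back to back for each page, that pages are processed in waves of `concurrency`, and that there is no queueing or retry overhead. The function name `estimate_seconds` is hypothetical.

```python
import math

# Per-stage latency ranges quoted above, in seconds: (low, high).
SEARCH = (1.0, 3.0)
RENDER = (2.0, 4.0)
EXTRACT = (3.0, 5.0)


def estimate_seconds(pages: int, concurrency: int = 1) -> tuple:
    """Rough (low, high) wall-clock estimate for one search plus `pages` pages."""
    waves = math.ceil(pages / concurrency)
    low = SEARCH[0] + waves * (RENDER[0] + EXTRACT[0])
    high = SEARCH[1] + waves * (RENDER[1] + EXTRACT[1])
    return low, high


print(estimate_seconds(30, concurrency=1))  # sequential
print(estimate_seconds(30, concurrency=3))  # three pages at a time
```

Under those assumptions, 30 pages take roughly 151 to 273 seconds sequentially and roughly 51 to 93 seconds at three pages in flight, which is why concurrency at the render and extract stages is the biggest lever.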

FAQ

Do I need a residential proxy?

Yes for any site with meaningful anti-bot protection, which is most retailers, marketplaces, and SERP endpoints. ScrapelessUniversalScrapingTool and the deep-SERP tools route through Scrapeless's residential proxy pool by default; the gl parameter on the search tool pins the egress country.

What about transient errors like 400 or 503?

The `langchain-scrapeless` tools surface transient API errors as `ValueError` (the underlying `ScrapelessError` is wrapped before re-raising). For direct calls, use the tenacity decorator from Step 6 with `retry=retry_if_exception_type((ValueError, TimeoutError))`. For agent-driven calls inside `create_agent`, wrap each tool with the `@tool` + `@retry` decorator stack from Step 5; that produces a real `StructuredTool` the agent accepts and applies the retry policy on every tool call. Without one of these, a single transient 400 crashes the whole agent run.

A site returns Access Denied. What now?

First retry with the Step 6 decorator. If the page persistently blocks, widen the session by changing gl to a different country, or add a brief await asyncio.sleep(...) between attempts to let session state cool. For sites with consistent IP-layer blocks, contact Scrapeless support to confirm the block is at the platform level rather than at the account level.

Selectors keep breaking. How do I survive DOM rotation?

Use response_type="markdown" on ScrapelessUniversalScrapingTool rather than parsing HTML with CSS selectors. Markdown collapses navigation chrome and most layout drift, so the LLM extractor at Step 4 sees a stable representation of the content even when the underlying DOM shifts.

How many concurrent workers per host?

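Three per host is the ceiling recommended in Step 6 for stable runs. Cap at the host level; workers across different hosts can run independently. Note that the single `asyncio.Semaphore(3)` shown in Step 6 is shared by every URL in the batch, so for mixed-host batches keep one semaphore per host.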

Can I use this without LangGraph?

Yes. ScrapelessUniversalScrapingTool().invoke({...}) is a plain callable — call it from any Python script, FastAPI route, or Celery task. LangGraph adds the agentic loop on top, but the tools themselves are framework-agnostic.

Can I swap OpenAI for Claude, Gemini, or a local model?

Yes. Replace ChatOpenAI(model="gpt-4o-mini") with ChatAnthropic(model="claude-sonnet-4-6"), ChatGoogleGenerativeAI(model="gemini-2.5-pro"), ChatOllama(model="llama3.1"), or any other LangChain chat model. The tools list, the prompt, and the parser are unchanged.

How do I add multi-turn memory?

Pass a MemorySaver checkpointer to create_agent(llm, tools, checkpointer=MemorySaver()) and supply a thread_id on each invoke. LangGraph persists the conversation state across turns, so the agent can refer back to earlier searches without re-running them.

Where do I see request traces?

Set LANGCHAIN_TRACING_V2=true, LANGCHAIN_API_KEY, and LANGCHAIN_PROJECT (Step 6). Every tool call, LLM call, and parser run shows up in LangSmith with timing, cost, and the exact prompt — the single highest-leverage observability change for a production deployment.

At Scrapeless, we only access publicly available data while strictly complying with applicable laws, regulations, and website privacy policies. The content in this blog is for demonstration purposes only and does not involve any illegal or infringing activities. We make no guarantees and disclaim all liability for the use of information from this blog or third-party links. Before engaging in any scraping activities, consult your legal advisor and review the target website's terms of service or obtain the necessary permissions.