Is Web Scraping Slow? (Causes, Fixes & Speed Optimization Tips)

Advanced Data Extraction Specialist

Introduction

Web scraping is powerful, but it often raises a key question: is web scraping slow? The answer is nuanced: it can be, but it can usually be optimized. This article explores the factors that slow scrapers down and offers strategies to boost performance. Understanding them is vital for efficient data collection, whether you're a data analyst, a developer, or a business owner. We'll cover common bottlenecks, optimization techniques, and tools that improve scraping speed so you get timely access to data.

Why Your Web Scraper Might Be Slow: Common Bottlenecks

Understanding why a web scraper might be slow is the first step toward optimizing its performance. Several factors can contribute to sluggish data extraction, ranging from network limitations to inefficient code. Identifying these bottlenecks is crucial for implementing effective solutions.

Server Response Times and Network Latency

One of the primary culprits behind slow web scraping is the target server's response time [4]. If the server is overloaded or has limited resources, your requests will take longer. Network latency between your scraper and the server adds to every round trip as well. Sending too many requests too quickly can also overwhelm a server, leading to slower responses or IP blocking.

Inefficient Code and Resource Management

The way your scraping script is written significantly impacts its speed. Inefficient code, such as poorly optimized parsing logic or excessive logging, can consume valuable CPU time [4]. HTML parsing, especially for large or complex pages, can be resource-intensive. If your script parses every page one after another on a single core, the CPU can become the bottleneck.

I/O Operations and Sequential Processing

Input/Output (I/O) operations can easily become the bottleneck of your scraping operation [4]. If your script waits for a response from one external resource before moving to the next, it operates sequentially. This can lead to considerable delays, especially when scraping a large number of pages.
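Before optimizing, it helps to measure where the time actually goes. The following is a minimal sketch, not a definitive profiler: `fake_fetch` and `fake_parse` are hypothetical stand-ins for a real request and a real parser, and the 20 ms latency is an assumed value. It accumulates wall-clock time per stage so you can see whether waiting on I/O or processing dominates.

```python
import time
from collections import defaultdict
from contextlib import contextmanager

# Accumulated wall-clock seconds per stage name.
totals = defaultdict(float)

@contextmanager
def timed(stage):
    """Add the elapsed time of the enclosed block to totals[stage]."""
    start = time.perf_counter()
    try:
        yield
    finally:
        totals[stage] += time.perf_counter() - start

def fake_fetch(url):
    """Hypothetical stand-in for a network request (assumed 20 ms latency)."""
    time.sleep(0.02)
    return f"<html><title>{url}</title></html>"

def fake_parse(html):
    """Hypothetical stand-in for parsing: return the text inside <title>."""
    return html.split("<title>")[1].split("</title>")[0]

def crawl(urls):
    """Fetch and parse each URL one after another, timing both stages."""
    titles = []
    for url in urls:
        with timed("fetch"):
            html = fake_fetch(url)
        with timed("parse"):
            titles.append(fake_parse(html))
    return titles

titles = crawl([f"https://example.com/page/{i}" for i in range(5)])
for stage, seconds in totals.items():
    print(f"{stage}: {seconds:.3f}s")
```

In this simulated run the fetch stage dominates because each request blocks the loop, which is the typical signature of an I/O-bound scraper.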

Other Factors Contributing to Slow Scraping

Beyond the core issues, several other elements can hinder your web scraping speed:

- Rate Limiting and IP Blocking: Websites often implement rate limits. Exceeding these can result in temporary or permanent IP bans, forcing your scraper to slow down or stop [4].

- CAPTCHAs and Anti-bot Measures: Sophisticated anti-scraping techniques like CAPTCHAs require human interaction or advanced bypass techniques, significantly slowing down the process [5].

- Dynamic Content Loading: Many modern websites load content with JavaScript. Scrapers that fetch only the raw HTML can miss that data, so you may need a headless browser, which is inherently slower [5].

- Website Structure Changes: Website updates can break scrapers, requiring constant maintenance [5].

- Internet Speed: A slow internet connection directly impacts scraping speed [Quora].

Understanding these challenges is the first step towards building more robust and efficient web scrapers. The next section will delve into practical techniques to overcome these hurdles and significantly speed up your web scraping operations.

Techniques to Speed Up Web Scraping

Optimizing web scraping performance involves employing various techniques that address the bottlenecks identified earlier. By strategically implementing these methods, you can significantly reduce the time it takes to extract data and improve the overall efficiency of your scraping operations. If your scraper is slow, these techniques offer practical fixes.

Concurrency: Multithreading, Multiprocessing, and Asynchronous Programming

One of the most effective ways to speed up web scraping is to introduce concurrency. Instead of processing requests sequentially, concurrency allows your scraper to handle multiple tasks simultaneously. This can be achieved through:

- Multithreading: Running multiple threads within a single process. Useful for I/O-bound tasks, as one thread can do other work while another waits on the network. Python's Global Interpreter Lock (GIL) can limit true parallelism for CPU-bound tasks [6].

- Multiprocessing: Running multiple processes, each with its own interpreter and memory space. This bypasses the GIL, allowing for true parallel execution of CPU-bound tasks [6].

- Asynchronous Programming (Asyncio): Allows a single thread to manage multiple I/O operations concurrently without blocking. Highly efficient for web scraping as it enables your scraper to send multiple requests and process responses as they arrive [6].

Here's a comparison summary of these concurrency models:

| Feature | Multithreading | Multiprocessing | Asynchronous Programming (Asyncio) |
|---|---|---|---|
| Execution Model | Multiple threads within a single process | Multiple independent processes | Single thread managing concurrent I/O operations |
| Parallelism | Pseudo-parallelism (due to GIL in Python) | True parallelism (bypasses GIL) | Concurrency, not true parallelism |
| Resource Usage | Lower memory overhead (shared memory) | Higher memory overhead (separate memory for each process) | Lower memory overhead (event-driven) |
| Best For | I/O-bound tasks (e.g., network requests) | CPU-bound tasks (e.g., heavy data processing) | I/O-bound tasks, highly efficient for web scraping |
| Complexity | Moderate | Moderate to High | High (requires async/await syntax) |
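To make the difference concrete, here is a minimal sketch comparing sequential, threaded, and asyncio execution for I/O-bound work. It makes no real network calls: `fetch_blocking` and `fetch_async` are hypothetical stand-ins that sleep for an assumed 50 ms to simulate latency, and the URLs are placeholders. In a real scraper you would swap in an HTTP client of your choice.

```python
import asyncio
import time
from concurrent.futures import ThreadPoolExecutor

URLS = [f"https://example.com/page/{i}" for i in range(10)]
LATENCY = 0.05  # assumed seconds of network wait per request

def fetch_blocking(url):
    """Hypothetical blocking fetch; time.sleep stands in for the network wait."""
    time.sleep(LATENCY)
    return url, 200

async def fetch_async(url):
    """Hypothetical non-blocking fetch; asyncio.sleep stands in for the wait."""
    await asyncio.sleep(LATENCY)
    return url, 200

def run_sequential(urls):
    # Each request waits for the previous one to finish.
    return [fetch_blocking(u) for u in urls]

def run_threaded(urls, workers=10):
    # Threads overlap the waiting time; map() preserves input order.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(fetch_blocking, urls))

async def run_async(urls, limit=10):
    # A semaphore caps in-flight requests so the target is not flooded.
    sem = asyncio.Semaphore(limit)

    async def bounded(url):
        async with sem:
            return await fetch_async(url)

    return await asyncio.gather(*(bounded(u) for u in urls))

def timed_run(label, fn):
    """Run fn(), print how long it took, and return (result, seconds)."""
    start = time.perf_counter()
    result = fn()
    elapsed = time.perf_counter() - start
    print(f"{label}: {elapsed:.2f}s for {len(result)} pages")
    return result, elapsed

seq_result, seq_time = timed_run("sequential", lambda: run_sequential(URLS))
thr_result, thr_time = timed_run("threaded", lambda: run_threaded(URLS))
async_result, async_time = timed_run("asyncio", lambda: asyncio.run(run_async(URLS)))
```

With simulated latency, the sequential run takes roughly the sum of all waits, while the threaded and asyncio runs take roughly one wait. Real-world gains depend on the target server and on any rate limits it enforces.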

Proxy Rotation and Management

To circumvent rate limiting and IP blocking, implementing proxy rotation is essential. Proxies act as intermediaries between your scraper and the target website, masking your IP address. By rotating through a pool of proxies, you can distribute your requests across multiple IP addresses, making it harder for websites to detect and block your scraper. This is a critical strategy when anti-bot measures are what slows your scraper down [4].
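A round-robin rotation over a proxy pool can be sketched as follows. This is an illustrative sketch using only the standard library: the proxy addresses are hypothetical placeholders, and `ProxyRotator` and `opener_for` are names invented for this example rather than part of any library.

```python
import itertools
import urllib.request

# Hypothetical proxy endpoints; replace with proxies you are allowed to use.
PROXIES = [
    "http://proxy-a.example.com:8080",
    "http://proxy-b.example.com:8080",
    "http://proxy-c.example.com:8080",
]

class ProxyRotator:
    """Hand out proxies round-robin, skipping any marked as failed."""

    def __init__(self, proxies):
        self._proxies = list(proxies)
        self._failed = set()
        self._cycle = itertools.cycle(self._proxies)

    def next_proxy(self):
        # Look at each proxy at most once per call before giving up.
        for _ in range(len(self._proxies)):
            proxy = next(self._cycle)
            if proxy not in self._failed:
                return proxy
        raise RuntimeError("no healthy proxies left")

    def mark_failed(self, proxy):
        """Exclude a proxy (for example, after a block or timeout)."""
        self._failed.add(proxy)

def opener_for(proxy):
    """Return a urllib opener that routes HTTP and HTTPS traffic via proxy."""
    handler = urllib.request.ProxyHandler({"http": proxy, "https": proxy})
    return urllib.request.build_opener(handler)

rotator = ProxyRotator(PROXIES)
picked = [rotator.next_proxy() for _ in range(4)]
print(picked)  # the fourth pick wraps back to the first proxy

rotator.mark_failed(PROXIES[1])
after_failure = [rotator.next_proxy() for _ in range(3)]
print(after_failure)  # the failed proxy no longer appears
```

To send a request through the selected proxy, you would call `opener_for(proxy).open(url, timeout=10)` and call `mark_failed` when the request is blocked or times out.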

Request Throttling and Random Delays

Even with proxies, sending requests too rapidly can still trigger anti-bot mechanisms. Implementing request throttling and random delays between requests mimics human browsing behavior, making your scraper less detectable. This helps maintain a good relationship with the target website and prevents your scraper from being identified as malicious.
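One way to implement this is a small throttle that enforces a minimum gap between requests and adds random jitter. The sketch below is a minimal example under assumed values: the `Throttle` class is hypothetical, and the delay figures are placeholders to tune per target site.

```python
import random
import time

class Throttle:
    """Enforce a minimum gap between requests, plus random jitter."""

    def __init__(self, min_delay, max_jitter, sleep=time.sleep, clock=time.monotonic):
        self.min_delay = min_delay
        self.max_jitter = max_jitter
        self._sleep = sleep    # injectable so the class can be tested without waiting
        self._clock = clock
        self._last = None      # time of the previous request, if any

    def wait(self):
        """Block until the next request is allowed; return the seconds slept."""
        target_gap = self.min_delay + random.uniform(0, self.max_jitter)
        slept = 0.0
        if self._last is not None:
            remaining = target_gap - (self._clock() - self._last)
            if remaining > 0:
                self._sleep(remaining)
                slept = remaining
        self._last = self._clock()
        return slept

# Assumed values: at least 50 ms between requests, up to 50 ms extra jitter.
throttle = Throttle(min_delay=0.05, max_jitter=0.05)
for page in range(3):
    waited = throttle.wait()
    print(f"page {page}: waited {waited:.3f}s before requesting")
```

Because the gap is measured from the previous request, time spent parsing counts toward the delay, so the scraper does not wait longer than necessary.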

Efficient Data Parsing and Storage

The speed of your scraper isn't just about fetching data; it's also about how efficiently you process and store it. Using optimized parsing libraries (e.g., lxml for XML/HTML parsing) can significantly reduce processing time. Choosing an appropriate data storage solution (e.g., a fast database like MongoDB) and optimizing your write operations can prevent I/O from becoming a bottleneck. These post-fetch steps are often overlooked when diagnosing a slow scraper.
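On the storage side, one common write optimization is batching: inserting many rows per transaction instead of committing after each one. The sketch below illustrates the idea with the standard library's `sqlite3` as a stand-in for whichever database you use. The `pages` table, its columns, and the batch size of 500 are assumptions made for this example.

```python
import sqlite3

INSERT_SQL = "INSERT INTO pages (url, title) VALUES (?, ?)"

def save_batched(conn, rows, batch_size=500):
    """Insert rows in batches, committing once per batch instead of per row."""
    batch = []
    written = 0
    for row in rows:
        batch.append(row)
        if len(batch) >= batch_size:
            conn.executemany(INSERT_SQL, batch)
            conn.commit()
            written += len(batch)
            batch.clear()
    if batch:  # flush the final partial batch
        conn.executemany(INSERT_SQL, batch)
        conn.commit()
        written += len(batch)
    return written

# In-memory database with a hypothetical schema for scraped pages.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE pages (url TEXT PRIMARY KEY, title TEXT)")

# A generator simulates rows arriving from the scraper one at a time.
scraped = ((f"https://example.com/page/{i}", f"Title {i}") for i in range(1200))
written = save_batched(conn, scraped)

count = conn.execute("SELECT COUNT(*) FROM pages").fetchone()[0]
print(written, count)
```

The same pattern applies to other stores, for example by using a bulk-insert call instead of one write per document.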

Headless Browsers and Their Optimization

For websites that rely heavily on JavaScript, headless browsers (typically driven by automation tools like Selenium or Puppeteer) are indispensable. However, they are resource-intensive and inherently slower than plain HTTP requests. To optimize their performance:

- Disable unnecessary resources: Turn off image loading, CSS, and fonts if not critical.

- Use efficient selectors: Prefer simple, direct selectors (such as an ID or a short CSS selector) over long, deeply nested ones.

- Run in headless mode: Always run without a visible GUI.

- Reuse browser instances: Reuse existing instances to save startup time.
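Some of these tips can be sketched with Selenium and Chrome. Treat the following as a hedged illustration rather than a reference configuration: it assumes Selenium 4 and a recent Chrome are installed, the helper names are hypothetical, and the Chrome flags shown are commonly used but should be verified against your browser version. Selenium is imported lazily so the flag-building helper runs even where Selenium is not installed.

```python
def build_chrome_args(block_images=True):
    """Return Chrome command-line flags for a lean headless session."""
    args = [
        "--headless=new",        # no visible window (flag used by recent Chrome versions)
        "--disable-gpu",
        "--disable-extensions",
    ]
    if block_images:
        # Commonly used flag to skip image loading; verify for your Chrome version.
        args.append("--blink-settings=imagesEnabled=false")
    return args

def make_driver():
    """Create a Selenium Chrome driver (requires Selenium and Chrome)."""
    from selenium import webdriver  # lazy import: only needed when a driver is created
    from selenium.webdriver.chrome.options import Options

    options = Options()
    for arg in build_chrome_args():
        options.add_argument(arg)
    return webdriver.Chrome(options=options)

def scrape_titles(urls, driver_factory=make_driver):
    """Reuse a single browser instance for every URL instead of restarting it."""
    driver = driver_factory()
    try:
        titles = []
        for url in urls:
            driver.get(url)
            titles.append(driver.title)
        return titles
    finally:
        driver.quit()  # always release the browser, even on errors

print(build_chrome_args())
```

Calling `scrape_titles(["https://example.com"])` would start one browser, visit each URL in turn, and close the browser at the end, which avoids paying the startup cost once per page.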

By combining these techniques, you can build a robust and efficient web scraper that overcomes common performance challenges. The next section will introduce a service that simplifies many of these complexities.

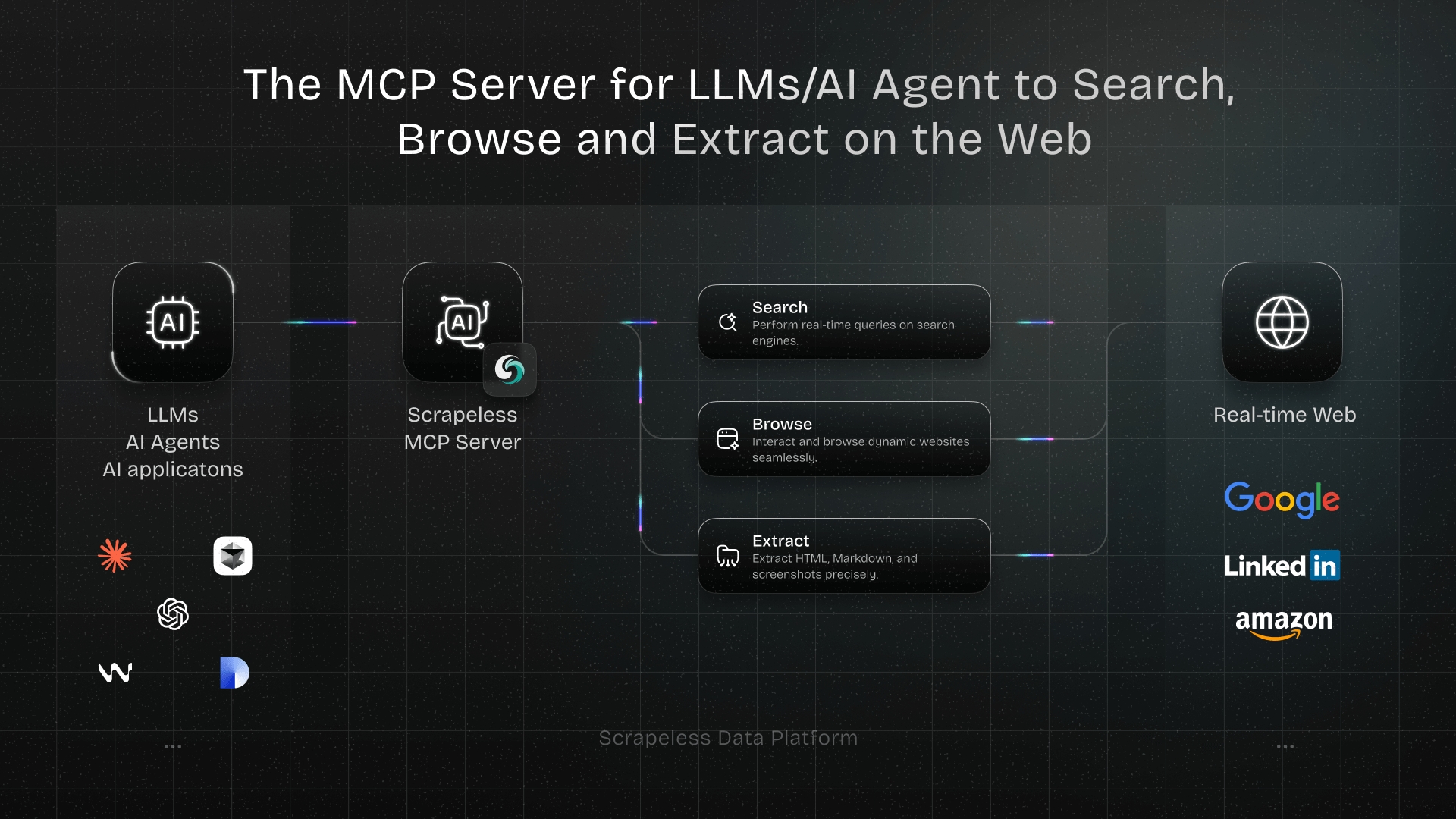

Introducing Scrapeless: Your Solution to Slow Web Scraping

While implementing optimization techniques can improve speed, managing proxies, CAPTCHAs, and dynamic content is complex. Scrapeless simplifies these tasks, offering a robust solution for your web scraping needs. If you've ever asked "is my web scraper slow?", Scrapeless provides a powerful answer.

Scrapeless offers a comprehensive API that handles common web scraping challenges automatically:

- Automatic Proxy Rotation: Manages a vast pool of proxies, rotating them to prevent IP blocking.

- CAPTCHA Solving: Integrates advanced CAPTCHA solving.

- Headless Browser Functionality: Renders JavaScript-heavy pages effortlessly.

- Scalability: Handles large volumes of requests, ensuring fast and reliable data extraction.

- Simplified API: Integrates powerful web scraping with minimal code.

By leveraging Scrapeless, you focus on data extraction, not infrastructure. It turns the question "is my web scraper slow?" into "how fast can I get my data?"

Ready for faster, more reliable web scraping? Log in to Scrapeless today and streamline your data extraction workflows.

Conclusion

In conclusion, whether a web scraper is slow depends on factors such as server response times, code efficiency, and anti-scraping measures. While web scraping can be slow, techniques such as concurrency, proxy rotation, request throttling, and efficient data handling can significantly improve performance. These strategies are crucial for effective data extraction.

However, managing a robust scraping infrastructure requires effort. For streamlined, high-performance solutions, Scrapeless offers a compelling alternative. By automating complexities, Scrapeless empowers you to collect data quickly and reliably, letting you focus on analysis rather than infrastructure.

Don't let slow web scraping hinder your data initiatives. Visit Scrapeless today to learn more and start your journey towards faster, more efficient web scraping. Experience the difference a dedicated scraping solution can make.

Key Takeaways

- Web scraping speed is variable: Whether a web scraper is slow depends on factors like server response, code efficiency, and anti-bot measures.

- Concurrency is key: Multithreading and asynchronous programming can significantly speed up I/O-bound tasks in web scraping, while multiprocessing helps with CPU-bound work such as heavy parsing.

- Proxies and throttling are essential: To avoid IP blocking and rate limits, use proxy rotation and random delays.

- Efficient parsing and storage matter: Optimize how you process and save extracted data to prevent bottlenecks.

- Headless browsers need optimization: For dynamic content, configure headless browsers to disable unnecessary resources and reuse instances.

- Scrapeless simplifies the process: Services like Scrapeless automate complex scraping challenges, offering a faster and more reliable solution.

Frequently Asked Questions (FAQ)

Q1: Why is my web scraper running so slowly?

A1: Your web scraper might be slow due to several factors, including slow server responses from the target website, inefficient code, excessive I/O operations, aggressive rate limiting, CAPTCHAs, dynamic content loading, or even your internet speed. Identifying the specific bottleneck is crucial for optimization.

Q2: How can I make my web scraper faster?

A2: To speed up your web scraper, consider using concurrency (multithreading, multiprocessing, or asyncio), implementing proxy rotation to avoid IP blocks, adding random delays between requests to mimic human behavior, optimizing your data parsing and storage, and configuring headless browsers to disable unnecessary resources if you are using them.

Q3: Does using a headless browser make web scraping slower?

A3: Yes, using a headless browser generally makes web scraping slower compared to direct HTTP requests. This is because headless browsers render the entire web page, including JavaScript, CSS, and images, which consumes more resources and time. However, they are necessary for scraping dynamic content that is loaded client-side.

Q4: What is the Global Interpreter Lock (GIL) and how does it affect Python web scraping speed?

A4: The Global Interpreter Lock (GIL) is a mutex in CPython, the standard Python interpreter, that protects access to Python objects and prevents multiple native threads from executing Python bytecode at the same time. It doesn't prevent multithreading, but it limits true parallelism for CPU-bound tasks. For I/O-bound tasks like web scraping, multithreading can still improve performance because a thread releases the GIL while it waits on I/O, letting other threads run.

Q5: When should I use a web scraping API service like Scrapeless?

A5: You should consider using a web scraping API service like Scrapeless when you need to handle complex challenges such as automatic proxy rotation, CAPTCHA solving, dynamic content rendering, and large-scale data extraction without managing the underlying infrastructure yourself. These services abstract away many technical complexities, allowing you to focus on data utilization.

References

[1] Research Nester. "Web Scraping Software Market Size & Share - Growth Trends 2037." Research Nester, link

[2] ScrapingAPI.ai. "The Rise of AI in Web Scraping: 2024 Stats That Will Surprise You." ScrapingAPI.ai Blog, link

[3] Medium. "10 Common Challenges in Web Scraping and How to Overcome Them." Medium, link

[4] Bardeen.ai. "Speed Up Your Python Web Scraping: Techniques & Tools." Bardeen.ai, link

At Scrapeless, we only access publicly available data while strictly complying with applicable laws, regulations, and website privacy policies. The content in this blog is for demonstration purposes only and does not involve any illegal or infringing activities. We make no guarantees and disclaim all liability for the use of information from this blog or third-party links. Before engaging in any scraping activities, consult your legal advisor and review the target website's terms of service or obtain the necessary permissions.