How to Build a Google Maps Scraper With Scrapeless Browserless: A Step-by-step Guide for 2026

Advanced Bot Mitigation Engineer

Key Takeaways:

- Google Maps is an unparalleled data source for B2B lead generation, local SEO and market intelligence, but its defenses are sophisticated.

- Scrapeless Scraping Browser acts as a powerful AI browser infrastructure, offering automated CAPTCHA solving, anti-detection fingerprinting and residential proxies to bypass Google's formidable anti-bot measures.

- Geo-grid tiling is the essential technique to overcome the per-search result cap (often 30–50 on broad searches, up to ~120 on tight zooms), enabling the extraction of thousands of unique business listings from any given area.

- The Scrapeless Scraping Browser platform provides AI-agent-ready structured output (JSON, HTML or Markdown) that slots into AI workflows and data pipelines.

- Mastering the nuances of Google Maps' dynamic rendering and implementing robust retry logic are crucial for building a scalable scraping browser solution that works reliably at production scale.

Introduction: Unlocking the Google Maps Goldmine in the AI Era

Anyone who has built a few web crawlers from scratch will recognize the pattern. The first hour feels great. In the second hour you hit the first wall. By the end of the day you realize you've written three hundred lines of code just to extract a title reliably.

Google Maps is the richest public business dataset on the web. Every listing has a name, phone, website, address, category, rating, review count, opening hours, GPS, photos, service attributes and a live stream of reviews. And Google does not want you to have it in bulk. The Places API charges per request, throttles hard and leaves out fields that the regular web UI shows freely. So anyone who actually needs this data at scale ends up scraping.

This guide walks through a single-file TypeScript scraper on top of Scrapeless Scraping Browser that handles the parts that normally eat weeks: anti-detection, residential proxies, CAPTCHA, the per-search result cap and the way Google Maps serves a stripped-down panel when you open a place URL directly. One file, one run, every field.

What You Can Do With It

Google Maps data is a versatile asset, driving high-impact commercial applications from lead generation to advanced AI analytics.

The value derived from Google Maps data extends far beyond simple contact lists. Its structured nature and real-time updates make it an indispensable resource for diverse commercial applications. Here are five real-world commercial uses, all achievable from the same codebase, often with just a change in configuration:

- B2B lead generation. Run the scraper over "plumbers" in Phoenix or "dentists" in Chicago, set maxPlaces: 500 with a 3×3 grid, and you walk away with a CSV of name, phone, website, address, category and rating for every business in the area. Pipe that into your CRM or an outbound email tool. This is the single most common reason people scrape Google Maps and the easiest one to monetise.

- Review and reputation monitoring. Schedule a daily run for your own business plus three to five competitors, store the JSON with a timestamp, and diff reviews[] by publishedAtDate between snapshots. New 1-star review on a competitor? Slack alert. New 5-star on yours? Push it to your marketing site. The full review text is in the output: author, stars, date and owner reply, all of it.

- Real-estate and location intelligence. Tile a neighbourhood at 500m cell radius, pull every coffee shop, gym and grocery store, then plot density on a map. Property investors use this to compare amenity coverage between candidate addresses; retail chains use it for site selection. The location.{lat,lng} field on every place makes this a one-line groupby.

- Local SEO rank tracking. The scraper records rank per place per cell. Tile a city in 1km cells, pull "plumber" from each, see where your client business ranks across the grid. The rank-by-cell map IS the local-SEO heatmap that Whitespark and BrightLocal sell as a product. Build it yourself in fifty lines of code on top of the JSON output.

- ML and analytics datasets. Run the scraper across forty queries × twenty cities and you have a dataset of tens of thousands of businesses with structured attributes, opening hours and review text. Feed reviews[].text into a sentiment classifier, train a recommender on categories × additionalInfo, or just use it as a benchmark corpus.
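To make the rank-tracking use case concrete, here is a minimal sketch of the rank-by-cell lookup. It is an illustration rather than the scraper's own code: the CellHit shape and the rankByCell name are hypothetical, and it assumes you keep the per-cell search hits before deduping, so that each cell retains its own rank for the business.

```typescript
// Hypothetical shape: one search hit as seen from one grid cell (kept before dedupe).
interface CellHit {
  cellKey: string; // e.g. the cell's search URL or a "row,col" label
  title: string;
  rank: number;    // 1-based position in that cell's results feed
}

// For every cell, report the best rank of the target business,
// or null if the business did not appear in that cell at all.
function rankByCell(hits: CellHit[], targetTitle: string): Map<string, number | null> {
  const out = new Map<string, number | null>();
  const wanted = targetTitle.trim().toLowerCase();
  for (const h of hits) {
    if (!out.has(h.cellKey)) out.set(h.cellKey, null);
    if (h.title.trim().toLowerCase() !== wanted) continue;
    const prev = out.get(h.cellKey) ?? null;
    if (prev === null || h.rank < prev) out.set(h.cellKey, h.rank);
  }
  return out;
}
```

Plotting the resulting map against the cell centres gives the heatmap. Matching on title is the simplest option here; matching on placeId would be more robust when two businesses share a name.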

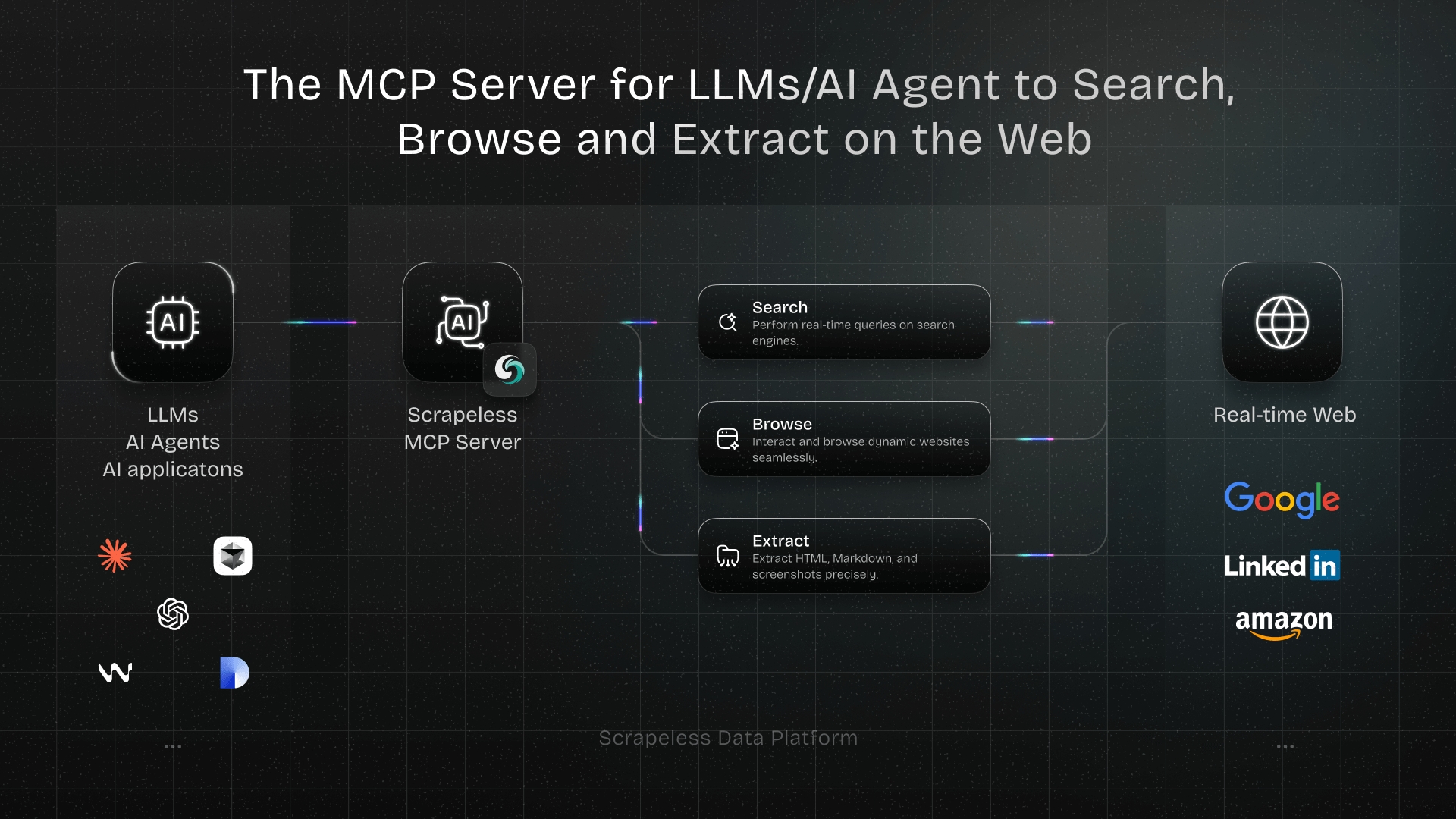

Why Scrapeless

Scrapeless Scraping Browser gives your scraper a production-grade cloud browser that Google treats as a real visitor — no stealth plugins, no fingerprint tuning, no proxy rotation scripts to maintain. Connect over a WebSocket endpoint using the CDP tools you already know (Puppeteer, Playwright) and let the infrastructure handle the hard parts.

Out of the box you get:

- Anti-detection fingerprinting that holds up under real load

- Residential proxies in 195+ countries

- Automatic CAPTCHA solving

- Session recording for debugging

- WebSocket endpoints that support CDP-based frameworks such as Puppeteer and Playwright, so there's no SDK to learn

The integration is a one-line change: point your existing puppeteer.connect() call at a Scrapeless URL instead of a local browser. The rest of your code stays exactly the same — standard CDP, standard selectors, standard workflows. All the anti-bot complexity lives on the server side, out of your codebase.

Get your API key on the free plan at app.scrapeless.com.

Prerequisites

- Node 18 or newer.

- A Scrapeless key (free tier is fine).

- Some Puppeteer familiarity helps but isn't required.

- No local Chrome. The browser runs in the cloud.

Install

bash

mkdir google-maps-browserless && cd google-maps-browserless

npm init -y

npm install puppeteer-core dotenv cheerio

npm install -D tsx typescript @types/node @types/cheerio

And a .env:

env

SCRAPELESS_API_KEY=your_key_here

Step 1 — Connect

Every session opens the same way. Build a WSS URL with your token, country, and TTL, then hand it to puppeteer.connect.

typescript

import "dotenv/config";

import puppeteer, { type Page } from "puppeteer-core";

import * as cheerio from "cheerio";

async function parseWithCheerio(page: Page) {

const html = await page.content();

return cheerio.load(html);

}

function connectionURL(sessionName: string, proxyCountry = "US", sessionTTL = 600) {

const qs = new URLSearchParams({

token: process.env.SCRAPELESS_API_KEY!,

proxyCountry,

sessionTTL: String(sessionTTL),

sessionName,

});

return `wss://browser.scrapeless.com/api/v2/browser?${qs.toString()}`;

}

async function openBrowser(sessionName: string) {

return puppeteer.connect({

browserWSEndpoint: connectionURL(sessionName),

defaultViewport: null,

});

}

That's the entire Scrapeless-specific surface area. Everything after this is vanilla Puppeteer. The template in the repo passes these options as a single cfg object instead of positional args: same effect, just cleaner once you have a dozen knobs.

Step 2 — The Per-Search Result Cap

Open Google Maps in a browser and search for "restaurants in New York". Scroll as long as you like — the feed stops loading new cards after a while. The theoretical ceiling is around 120, but in practice the number you actually get depends on zoom level, area density and query specificity. On a broad city-wide search the feed often tops out at 30–50 results; on a tight zoom into a dense neighbourhood it can climb closer to the 120 ceiling. The cap is context-dependent, not a fixed number.

This is the single most frustrating thing about scraping Google Maps, and a lot of first-time projects just quietly live with it. You can't scroll past it. You can't paginate past it. The cap is baked into the UI.

The workaround is geo-grid tiling. Split the target area into a grid of smaller cells, run one search per cell with a tighter zoom and different centre coordinate, merge the results and dedupe by placeId. Narrower cells mean a closer zoom level, which means more results per cell. A 2×2 grid over downtown Austin turns one search into four and typically yields 150–400 unique places after dedupe. A 3×3 over a dense metro can get you into the thousands.

The grid math is simple enough:

typescript

function kmPerDegLng(lat: number) { return 111.32 * Math.cos((lat * Math.PI) / 180); }

function kmPerDegLat() { return 111.32; }

function buildGrid(centerLat: number, centerLng: number, cellRadiusKm: number, maxCells: number) {

const side = Math.max(1, Math.floor(Math.sqrt(maxCells)));

const cells: { lat: number; lng: number }[] = [];

const stepLat = (cellRadiusKm * 2) / kmPerDegLat();

const stepLng = (cellRadiusKm * 2) / kmPerDegLng(centerLat);

const offset = (side - 1) / 2;

for (let i = 0; i < side; i++) {

for (let j = 0; j < side; j++) {

cells.push({

lat: centerLat + (i - offset) * stepLat,

lng: centerLng + (j - offset) * stepLng,

});

if (cells.length >= maxCells) return cells;

}

}

return cells;

}

function searchUrlForCell(query: string, lat: number, lng: number) {

const q = encodeURIComponent(query).replace(/%20/g, "+");

return `https://www.google.com/maps/search/${q}/@${lat.toFixed(6)},${lng.toFixed(6)},15z`;

}

The @lat,lng,15z segment in the URL is what re-centers the map for each search. The 15z is the zoom level: raise it for tighter cells, lower it for broader ones.
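If you would rather derive the zoom from the cell size than hard-code 15z, one option is the standard Web Mercator ground-resolution formula. The sketch below is an assumption-laden illustration: zoomForCell is a hypothetical helper, the 1024px viewport width is a guess at the map's rendered width, and how Google Maps actually ties zoom to result counts is not documented.

```typescript
// Web Mercator ground resolution at zoom 0 on the equator, in metres per pixel (256px tiles).
const EQUATOR_M_PER_PX_Z0 = 156543.03392;

// Pick the largest integer zoom whose viewport still spans the full cell width.
// viewportPx is an assumed map width in pixels, not a value from the scraper.
function zoomForCell(lat: number, cellRadiusKm: number, viewportPx = 1024): number {
  const neededMPerPx = (cellRadiusKm * 2 * 1000) / viewportPx;
  const mPerPxZ0 = EQUATOR_M_PER_PX_Z0 * Math.cos((lat * Math.PI) / 180);
  const zoom = Math.floor(Math.log2(mPerPxZ0 / neededMPerPx));
  // Clamp to a range Google Maps accepts in practice (assumed 3..21).
  return Math.min(21, Math.max(3, zoom));
}

console.log(zoomForCell(30.27, 2));   // 2 km cells around Austin
console.log(zoomForCell(30.27, 0.5)); // 500 m cells
```

Under these assumptions a 2 km cell radius at Austin's latitude works out to zoom 15, which matches the hard-coded value above, and a 500 m radius works out to 17.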

Step 3 — Scrape the Results Feed

For each cell URL, scroll the results sidebar until it stops loading new cards, then grab every a.hfpxzc link.

typescript

async function collectSearchResults(page: Page, searchUrl: string, target: number, maxScrolls = 40) {

await page.goto(searchUrl, { waitUntil: "domcontentloaded", timeout: 60000 });

await page.waitForSelector('div[role="feed"], h1.DUwDvf', { timeout: 25000 });

// Scroll the feed until enough cards load — count via cheerio snapshot.

// maxScrolls is the safety cap (each scroll ≈ 1.5s, so 40 ≈ 60s max).

// The loop breaks early when: (a) we hit the target count, or (b) the

// feed stops growing (3 consecutive scrolls with no new cards). Bump

// maxScrolls via CONFIG.maxFeedScrolls if you need longer waits on

// slow-loading feeds.

let last = 0, stable = 0;

for (let i = 0; i < maxScrolls; i++) {

const $peek = await parseWithCheerio(page);

const n = $peek(".Nv2PK").length;

if (n >= target) break;

if (n === last) { stable++; if (stable >= 3) break; } else stable = 0;

last = n;

await page.evaluate(() => {

const feed = document.querySelector('div[role="feed"]');

if (feed) (feed as HTMLElement).scrollTop = (feed as HTMLElement).scrollHeight;

});

await new Promise(r => setTimeout(r, 1500));

}

// Parse the settled DOM with cheerio — no page.evaluate for data extraction.

const $ = await parseWithCheerio(page);

const hits: SearchHit[] = [];

$(".Nv2PK a.hfpxzc").each((idx, el) => {

const name = $(el).attr("aria-label") || "";

const href = $(el).attr("href") || "";

const url = href.startsWith("http") ? href : `https://www.google.com${href}`;

// Guard on href, not url: url is never empty once the origin has been prefixed.

if (href) hits.push({ name, url, rank: idx + 1 });

});

return hits;

}

The stable-counter pattern (three scrolls with no new results means the feed is done) beats a fixed sleep every time. Waiting longer doesn't help if the feed has genuinely stopped loading.

The placeId is embedded in each URL, after !1s0x...:0x.... That's the dedupe key across cells.
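A minimal sketch of that merge step, assuming each cell's results arrive as an array of hits with a url field. The Hit interface and both function names are illustrative, not taken from the scraper itself.

```typescript
interface Hit {
  name: string;
  url: string;
  rank: number;
}

// Pull the "0x...:0x..." identifier that follows "!1s" in a Maps place URL.
function placeIdFromUrl(url: string): string | null {
  const m = url.match(/!1s(0x[0-9a-f]+:0x[0-9a-f]+)/i);
  return m ? m[1].toLowerCase() : null;
}

// Merge hits from several cells, keeping the first hit seen for each placeId.
// Hits without a parsable id are keyed by URL so they are not silently dropped.
function dedupeHits(cells: Hit[][]): Hit[] {
  const seen = new Map<string, Hit>();
  for (const hits of cells) {
    for (const h of hits) {
      const key = placeIdFromUrl(h.url) ?? h.url;
      if (!seen.has(key)) seen.set(key, h);
    }
  }
  return Array.from(seen.values());
}
```

Keeping the first occurrence means a place found in several overlapping cells carries the rank from whichever cell was searched first. Store the per-cell hits separately if you need every rank.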

Step 4 — The Weird Google Maps Rendering Thing

This one cost me the better part of a day to figure out.

If you navigate directly to a place URL like https://www.google.com/maps/place/Haraz+Coffee+House/..., Google Maps renders a stripped-down version of the panel. The h1 title is there. The rating is there. But the review count, the opening hours table, the service attributes, the photos — half of it is either missing or has empty text content. The entire DOM for the page comes out to about three thousand characters.

If you navigate to the search results instead, and click the same place card, you get the full rich panel. Same URL, completely different rendering.

The workaround is: always go through the search URL, click the card for the place you want, and wait for the panel.

typescript

const clicked = await page.evaluate((placeName) => {

const cards = document.querySelectorAll(".Nv2PK a.hfpxzc");

for (const c of Array.from(cards)) {

if (c.getAttribute("aria-label") === placeName) {

(c as HTMLElement).click();

return true;

}

}

return false;

}, hit.name);

if (!clicked) throw new Error(`No card matched "${hit.name}" in the results feed`);

await page.waitForSelector("h1.DUwDvf", { timeout: 15000 });

A quick sanity check after the selector appears: poll the h1 for non-empty text content before moving on. Sometimes the element shows up before Google has actually filled it in.
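One way to write that poll is a small generic helper that takes the lookup as a callback, so it can be exercised without a browser. This is a sketch: pollForText is a hypothetical name, and the timeout and interval defaults are arbitrary.

```typescript
// Call check() every intervalMs until it yields a non-empty string or
// timeoutMs elapses. Returns the trimmed string, or null on timeout.
async function pollForText(
  check: () => Promise<string | null>,
  timeoutMs = 5000,
  intervalMs = 250,
): Promise<string | null> {
  const deadline = Date.now() + timeoutMs;
  for (;;) {
    const text = (await check())?.trim();
    if (text) return text;
    if (Date.now() >= deadline) return null;
    await new Promise((r) => setTimeout(r, intervalMs));
  }
}

// Usage with Puppeteer (page.$eval rejects if the selector is missing, hence the catch):
//   const title = await pollForText(() =>
//     page.$eval("h1.DUwDvf", (el) => el.textContent).catch(() => null));
```

A null return is a reasonable signal to treat the attempt as failed and retry, rather than recording a place with an empty title.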

Step 5 — Pull the Overview

Once the rich panel is loaded, take a cheerio snapshot and extract all basic fields server-side — no page.evaluate round-trip for data, just selectors.

typescript

const $ = await parseWithCheerio(page);

const txt = (s: string) => $(s).first().text().trim() || null;

const overview = {

title: txt("h1.DUwDvf"),

totalScore: parseFloat($('div.F7nice span[aria-hidden="true"]').first().text()) || null,

categoryName: txt("button.DkEaL"),

address: txt('button[data-item-id="address"] .Io6YTe'),

phone: txt('button[data-item-id*="phone"] .Io6YTe'),

website: txt('a[data-item-id="authority"] .Io6YTe'),

plusCode: txt('button[data-item-id="oloc"] .Io6YTe'),

};

Lat, lng, and the placeId hash all live in the URL, not the DOM:

typescript

const at = page.url().match(/@(-?\d+\.\d+),(-?\d+\.\d+)/);

const location = at ? { lat: parseFloat(at[1]), lng: parseFloat(at[2]) } : null;

const placeId = page.url().match(/!1s(0x[0-9a-f]+:0x[0-9a-f]+)/i)?.[1] ?? null;

A heads-up on the class names: DUwDvf, F7nice and Io6YTe are all auto-generated by Google's build, and they do change. Check your output against a known place every week or two and be ready to update the selectors when something drops to null.
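That periodic check is easy to automate. The sketch below flags fields that came back empty for a reference place you know is fully populated. The missingFields helper and the REQUIRED list are illustrative assumptions, and the sample record simulates two broken selectors.

```typescript
// Return the names of required fields that are null, undefined or blank.
function missingFields(record: Record<string, unknown>, required: string[]): string[] {
  return required.filter((key) => {
    const v = record[key];
    return v === null || v === undefined || (typeof v === "string" && v.trim() === "");
  });
}

// Fields the reference place is known to expose on Google Maps (assumed list).
const REQUIRED = ["title", "totalScore", "categoryName", "address", "phone"];

// Simulated scrape result where two selectors have stopped matching.
const sample = {
  title: "Epoch Coffee",
  totalScore: 4.5,
  categoryName: null,
  address: "",
  phone: "(512) 454-3762",
};

const broken = missingFields(sample, REQUIRED);
if (broken.length > 0) console.warn("Selectors to re-check:", broken.join(", "));
```

Run it on a schedule against one or two stable listings and alert on any non-empty result.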

Step 6 — Reviews, Photos, Menu, Q&A, About

The place panel has tabs. You click each, wait, scroll, extract. Order matters here, which is not obvious until you've hit the problem once.

Do About / attributes first. The About content lives inside the Overview panel. If you click Reviews or Photos before pulling the attributes, those nodes get unmounted and you lose them.

Reviews. Click the tab, pick a sort order if you care (newest, highest, lowest, or relevant), then scroll the pane until you have as many cards loaded as you want. Each card has the author name, text, stars, a relative date, the reviewer's photo and sometimes an owner reply.

typescript

// Expand truncated review bodies first (live DOM action), then parse with cheerio.

await page.evaluate(() => {

document.querySelectorAll("button.w8nwRe.kyuRq").forEach((b) => (b as HTMLElement).click());

});

await new Promise(r => setTimeout(r, 400));

const $ = await parseWithCheerio(page);

const reviews: ApifyReview[] = [];

$(".jftiEf").each((idx, card) => {

if (idx >= maxReviews) return false;

const name = $(card).find(".d4r55").first().text().trim() || null;

const text = $(card).find(".wiI7pd").first().text().trim() || null;

const relDate = $(card).find(".rsqaWe").first().text().trim() || null;

const rEl = $(card).find(".kvMYJc").first();

const stars = rEl.length ? parseInt((rEl.attr("aria-label") || "").match(/(\d)/)?.[1] || "", 10) || null : null;

const responseFromOwnerText = $(card).find(".CDe7pd .wiI7pd").first().text().trim() || null;

reviews.push({ name, text, publishedAtDate: relDate, stars, responseFromOwnerText });

});

One gotcha: longer review bodies get truncated with a "More" button. The page.evaluate click-all before the cheerio snapshot handles that: expand first, then parse.
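Note that publishedAtDate in the snippet above holds the relative string Google shows ("a month ago"), not a timestamp. If you want something sortable, a rough conversion is possible. The sketch below is an approximation under stated assumptions: approxDateFromRelative is a hypothetical helper, it only understands English strings of the form "N units ago", and month arithmetic can overflow at month ends.

```typescript
// Convert strings like "a month ago" or "3 weeks ago" into an approximate
// ISO date (YYYY-MM-DD), relative to `now`. Returns null for anything else.
function approxDateFromRelative(rel: string, now: Date = new Date()): string | null {
  const m = rel
    .trim()
    .toLowerCase()
    .match(/^(a|an|\d+)\s+(minute|hour|day|week|month|year)s?\s+ago$/);
  if (!m) return null;
  const n = m[1] === "a" || m[1] === "an" ? 1 : parseInt(m[1], 10);
  const d = new Date(now.getTime());
  switch (m[2]) {
    case "minute": d.setUTCMinutes(d.getUTCMinutes() - n); break;
    case "hour": d.setUTCHours(d.getUTCHours() - n); break;
    case "day": d.setUTCDate(d.getUTCDate() - n); break;
    case "week": d.setUTCDate(d.getUTCDate() - 7 * n); break;
    case "month": d.setUTCMonth(d.getUTCMonth() - n); break;
    case "year": d.setUTCFullYear(d.getUTCFullYear() - n); break;
  }
  return d.toISOString().slice(0, 10);
}
```

Pass the record's scrapedAt as now so the estimate is anchored to when the page was read. Because the relative string drifts as time passes, author plus text is probably a steadier key than the date when diffing snapshots.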

Photos. Scroll the gallery, then grab every CSS background-image URL. The URL ends in something like =w150-h150 for the thumbnail; swap that to =w1600-h1600 for the full-size. Same URL, different size suffix.
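The two string operations involved can be sketched as follows. Both helper names are illustrative. The sketch assumes the thumbnail URL sits in an inline background-image style and that changing only the width and height numbers is enough, which is what the description above implies.

```typescript
// Extract the URL from an inline style such as:
//   background-image: url("https://.../=w150-h150")
function urlFromBackgroundImage(style: string): string | null {
  const m = style.match(/url\((['"]?)(.*?)\1\)/);
  return m && m[2] ? m[2] : null;
}

// Swap the =w<width>-h<height> size suffix for a larger one,
// leaving any trailing flags (e.g. "-k-no") untouched.
function upsizePhotoUrl(url: string, size = 1600): string {
  return url.replace(/=w\d+-h\d+/, `=w${size}-h${size}`);
}
```

URLs without a size suffix pass through unchanged, so it is safe to map the helper over every image URL you collect.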

Menu, Q&A. Each is a tab click with its own selector pack. Not every place has these. Coffee shops, for instance, usually don't surface a menu on Google Maps.

Step 7 — Making It Work at Scale

This is the part that separates a script that kind of works from one you can leave running overnight.

Google Maps is flaky. The SPA state gets weird after tab clicks. A click sometimes hits but the tab never populates. The feed occasionally loads with zero results for no obvious reason and a reload fixes it. If you're hitting one or two places by hand these are small annoyances, but at any real volume they compound fast.

Two things handle this:

- Fresh browser per place. After you've collected the search hits, open a new Scraping Browser session for each place. Reusing a session across places sounds like an obvious optimization, but panel state from place N bleeds into place N+1 in ways that are genuinely hard to debug. Fresh session every time, and the problem just goes away.

- Retry up to three times. Almost every failure is transient.

typescript

for (let attempt = 1; attempt <= 3; attempt++) {

const eb = await openBrowser(`gmp-enrich-${attempt}`);

const ep = await eb.newPage();

try {

await ep.goto(searchUrl, { waitUntil: "domcontentloaded", timeout: 60000 });

await ep.waitForSelector('div[role="feed"]', { timeout: 20000 });

const place = await enrichPlaceOnSearchPage(ep, hit, cfg);

if (place.title) return place;

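} catch (err) {

// Transient failure (timeout, panel never rendered): log it and fall through to the next attempt.

console.warn(`attempt ${attempt} failed:`, err);

} finally {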

await ep.close();

await eb.close();

}

await new Promise(r => setTimeout(r, 2000));

}

Across the test runs I did while building this (coffee shops in Austin, restaurants in Manhattan, dentists in Chicago), per-place success was somewhere in the 75–100% range. The failures are almost always Google not rendering the panel after a card click, and the retry loop catches most of them.
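If several steps need the same treatment, the retry pattern can be factored into a small helper. This is a sketch rather than the scraper's actual structure: withRetries and its parameters are hypothetical, and it treats both thrown errors and "empty" results as reasons to try again.

```typescript
// Run task() up to maxAttempts times, pausing pauseMs between attempts.
// A result counts as success only if isGood(result) is true;
// thrown errors are logged and counted as failed attempts.
async function withRetries<T>(
  task: (attempt: number) => Promise<T>,
  isGood: (result: T) => boolean,
  maxAttempts = 3,
  pauseMs = 2000,
): Promise<T | null> {
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    try {
      const result = await task(attempt);
      if (isGood(result)) return result;
    } catch (err) {
      console.warn(`attempt ${attempt} failed:`, err);
    }
    if (attempt < maxAttempts) await new Promise((r) => setTimeout(r, pauseMs));
  }
  return null;
}
```

To keep the fresh-session guarantee, the task itself should open its own browser and close it in a finally block, so every attempt starts from clean state. The isGood callback would be something like (place) => Boolean(place.title).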

What You Get Back

One flat JSON object per place. It's deliberately wide so you don't have to run the scraper a second time to get a different slice — lead gen, reviews, attributes, all in one payload.

Here's a real result from a recent run on Epoch Coffee in Austin:

json

{

"title": "Epoch Coffee",

"placeId": "0x8644ca3a5430e0ab:0xb9e41b4bc5eb5c33",

"cid": "13393467520822803507",

"url": "https://www.google.com/maps/place/Epoch+Coffee/...",

"searchPageUrl": "https://www.google.com/maps/search/coffee+shops/@30.278148,-97.753778,15z",

"rank": 1,

"address": "221 W N Loop Blvd, Austin, TX 78751",

"street": null,

"city": null,

"state": null,

"countryCode": null,

"postalCode": null,

"neighborhood": null,

"plusCode": "8862+QP Austin, Texas",

"location": { "lat": 30.3186545, "lng": -97.7265168 },

"phone": "(512) 454-3762",

"phoneUnformatted": "5124543762",

"website": "epochcoffee.com",

"domain": "epochcoffee.com",

"categoryName": "Coffee shop",

"categories": ["Coffee shop"],

"price": "$",

"totalScore": 4.5,

"reviewsCount": 2465,

"reviewsDistribution": { "5": 1723, "4": 451, "3": 149, "2": 60, "1": 82 },

"openingHours": [

{ "day": "Monday", "hours": "Open 24 hours" },

{ "day": "Tuesday", "hours": "Open 24 hours" },

"... 5 more days"

],

"popularTimesHistogram": {},

"popularTimesLiveText": "Usually not too busy",

"popularTimesLivePercent": 32,

"additionalInfo": {

"Service options": [

{ "name": "Dine-in", "value": true },

{ "name": "Takeout", "value": true },

{ "name": "Delivery", "value": true }

],

"Accessibility": [

{ "name": "Wheelchair accessible entrance", "value": true },

{ "name": "Wheelchair accessible seating", "value": true }

],

"Highlights": [

{ "name": "Great coffee", "value": true },

{ "name": "Good for working on laptop", "value": true }

],

"Offerings": [

{ "name": "Coffee", "value": true },

{ "name": "Vegan options", "value": true }

],

"Atmosphere": [{ "name": "Casual", "value": true }, { "name": "Cozy", "value": true }],

"Crowd": [{ "name": "College students", "value": true }, { "name": "LGBTQ+ friendly", "value": true }],

"Payments": [{ "name": "Credit cards", "value": true }, { "name": "NFC mobile payments", "value": true }]

},

"imageUrl": "https://lh3.googleusercontent.com/gps-cs-s/.../=w408-h306-k-no",

"images": [

"https://lh3.googleusercontent.com/geougc-cs/.../=w1600-h1600",

"https://lh3.googleusercontent.com/geougc-cs/.../=w1600-h1600",

"... 8 more URLs"

],

"imagesCount": 10,

"reviews": [

{

"name": "ciara",

"text": "The best vibes all around, friendly and efficient baristas, and absolutely delicious espresso...",

"publishedAtDate": "a month ago",

"stars": 5,

"likesCount": null,

"reviewerPhotoUrl": "https://lh3.googleusercontent.com/a-/.../=w36-h36-p-rp-mo-ba3-br100",

"reviewerNumberOfReviews": 25,

"responseFromOwnerText": null,

"responseFromOwnerDate": null

},

"... 9 more reviews"

],

"reviewsTags": [],

"menu": [],

"questionsAndAnswers": [],

"peopleAlsoSearch": [

{ "title": "Bennu Coffee", "url": "https://www.google.com/maps/place/..." },

{ "title": "Cenote", "url": "https://www.google.com/maps/place/..." }

],

"reservations": [],

"orderBy": [],

"claimThisBusiness": false,

"permanentlyClosed": false,

"temporarilyClosed": false,

"scrapedAt": "2026-04-16T10:59:25.305Z"

}

Every field the scraper outputs is shown above. Here's the full inventory:

| Category | Fields |
|---|---|
| Identity | title, placeId, cid, url, searchPageUrl, rank |
| Location | address, street, city, state, countryCode, postalCode, neighborhood, plusCode, location.{lat,lng} |
| Contact | phone, phoneUnformatted, website, domain |
| Classification | categoryName, categories[], price |
| Ratings | totalScore, reviewsCount, reviewsDistribution{} |
| Hours | openingHours[] |
| Popular times | popularTimesHistogram{}, popularTimesLiveText, popularTimesLivePercent |
| Attributes | additionalInfo{} (Service options, Accessibility, Highlights, Offerings, Atmosphere, Crowd, Payments, Parking, Pets, etc.) |
| Media | imageUrl, images[], imagesCount |
| Reviews | reviews[] (name, text, publishedAtDate, stars, likesCount, reviewerPhotoUrl, reviewerNumberOfReviews, responseFromOwnerText, responseFromOwnerDate) |
| Extras | reviewsTags[], questionsAndAnswers[], menu[], peopleAlsoSearch[], reservations[], orderBy[] |
| Status | claimThisBusiness, permanentlyClosed, temporarilyClosed, scrapedAt |

Conclusion: Powering Your AI Agents with Real-World Data

Mastering Google Maps scraping with Scrapeless Scraping Browser is the definitive path to building autonomous AI agents that truly understand and interact with the physical world.

The journey to extract valuable data from Google Maps is fraught with technical challenges, from the per-search result cap to sophisticated anti-bot defenses. However, by leveraging the advanced capabilities of Scrapeless Scraping Browser—your dedicated AI browser infrastructure—you can transform these obstacles into opportunities. This guide has equipped you with a robust, AI-ready solution that combines geo-grid tiling, intelligent data extraction and production-grade best practices.

Whether your goal is to generate high-quality B2B leads, monitor local SEO performance, conduct in-depth market research, or feed your AI agents with real-time, structured intelligence about the physical world, Scrapeless provides the reliable foundation you need. Stop fighting anti-bot measures and start focusing on what truly matters: the insights derived from your data.

Ready to Build Your AI-Powered Data Pipeline?

Join our community to claim a free plan and connect with fellow innovators.

FAQ

Q: Do I need a proxy to scrape Google Maps?

A: Yes. Without one you'll start getting rate-limited within a handful of requests, and from there it's 429s and consent walls. Scrapeless Scraping Browser has residential proxies baked in, so there's nothing separate to integrate. Every session you open goes through a different residential IP in the country you pick.

Q: How many places can I actually extract?

A: It depends on the city. A 2×2 grid of 2 km cells over a mid-size US downtown typically gives you 150–400 unique places after dedupe. Push to a 3×3 or 4×4 over dense metros and you can get into the thousands.

Q: Why use Scrapeless Scraping Browser instead of a local headless browser?

A: Scrapeless Scraping Browser offers enterprise-grade anti-detection, automated CAPTCHA solving and integrated residential proxies, all of which are difficult and costly to build and maintain around a local headless browser. It gives you browser infrastructure built for AI workloads, so you can focus on data extraction logic rather than fighting anti-bot measures.

Q: What about reviewsCount and reviewsDistribution?

A: The scraper reads reviewsCount from the Reviews tab header — the .fontBodySmall element showing "2,465 reviews" inside the rating summary. reviewsDistribution is extracted from the same tab: each row of the 5-star breakdown table carries an aria-label like "5 stars, 1,723 reviews", giving a per-star count that sums to reviewsCount. Verified across coffee shops (Austin), dentists (Chicago), restaurants (Manhattan), and gyms (San Francisco) — all 4 returned exact matches between reviewsCount and sum(reviewsDistribution).
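The aria-label parsing and the sum check described above can be sketched like this. The helper names are illustrative, and the label wording is taken from the single example in the answer, so treat the regex as an assumption that holds for the English UI only.

```typescript
// Parse labels like "5 stars, 1,723 reviews" into [stars, count];
// returns null if the label does not match the expected wording.
function parseStarRow(label: string): [number, number] | null {
  const m = label.match(/^\s*([1-5])\s+stars?,\s*([\d,]+)\s+reviews?\s*$/i);
  if (!m) return null;
  return [parseInt(m[1], 10), parseInt(m[2].replace(/,/g, ""), 10)];
}

// Build the reviewsDistribution object from the five row labels.
function buildDistribution(labels: string[]): Record<string, number> {
  const dist: Record<string, number> = {};
  for (const label of labels) {
    const row = parseStarRow(label);
    if (row) dist[String(row[0])] = row[1];
  }
  return dist;
}

// Total across all star buckets, for comparison against reviewsCount.
function sumDistribution(dist: Record<string, number>): number {
  return Object.keys(dist).reduce((total, k) => total + dist[k], 0);
}
```

Comparing sumDistribution(dist) with reviewsCount on every record is a cheap consistency check: a mismatch usually means one of the two selectors has gone stale.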

At Scrapeless, we only access publicly available data while strictly complying with applicable laws, regulations, and website privacy policies. The content in this blog is for demonstration purposes only and does not involve any illegal or infringing activities. We make no guarantees and disclaim all liability for the use of information from this blog or third-party links. Before engaging in any scraping activities, consult your legal advisor and review the target website's terms of service or obtain the necessary permissions.