How to Build an Etsy Scraper With Scrapeless Scraping Browser: Comprehensive Guide 2026 (Node.js)

Scraping and Proxy Management Expert

Key Takeaways:

- Scrapeless Scraping Browser acts as a powerful AI browser infrastructure, clearing Etsy's DataDome anti-bot layer with automated fingerprinting, residential proxies and CAPTCHA solving.

- Four discovery modes from one CONFIG block — product URL, category URL, keyword search (with optional expansion) and shop URL. Swap inputs, same pipeline.

- Eight structured filters (on-sale, free-shipping, customizable, ships-to, min/max price, condition, order-by) compose with any discovery mode that uses search or category URLs.

- Output schema covers 30+ fields per product, including variations, breadcrumbs, listedDate, reviews[].photos and unique merchandising signals (isBestseller, isStarSeller, isFreeShipping, inStock, favoritesCount, per-review sub-scores).

- A configurable retry loop (default maxRetries: 10, growing backoff 3 s → 47 s) rotates to a fresh session and residential IP between attempts, absorbing transient 403s automatically.

Introduction: Scraping Etsy at Scale With an Anti-Detection Cloud Browser

Etsy is a goldmine for e-commerce intelligence: comparable-seller pricing for shop owners, sentiment-training corpora for ML projects and niche discovery for dropshippers all flow from the same listing pages. The obvious routes to that data are all awkward. The official Etsy API has restricted access and a long approval cycle, third-party data resellers are costly, and a custom scraper requires ongoing maintenance against DataDome and against frequent CSS class-name changes on Etsy's frontend.

This guide walks through a single TypeScript file built on Scrapeless Scraping Browser that handles every hard part up front: the anti-detection cloud browser, the residential proxies, the per-product enrichment with reviews and shop metadata and the multi-query expansion technique that surfaces far more results from a single base keyword than Etsy's per-search ceiling normally allows. The same scraper supports four independent discovery modes — feed it a product URL, a category URL, a keyword search or a shop URL — and every output row carries the same rich 30-field schema regardless of how the listing was discovered.

What You Can Do With It

Etsy data is a versatile asset, driving high-impact commercial applications from product research to advanced AI analytics. Here are five real-world commercial uses, all achievable from the same codebase, often with just a change in configuration:

- Dropshipping research and product finding (keyword-search mode). Run the scraper over "macramé plant hanger" with expandStrategy: "keywords" across ["boho", "modern", "minimalist"], set maxProducts: 200 and rank the output by favoritesCount × rating. Filter to shops where isStarSeller: true and favoritesCount is well above the median: those are your dropshipping candidates. Drop the resulting CSV into Shopify or a private supplier list. This is the most common reason people scrape Etsy and the fastest one to turn into revenue.

- Competitor price monitoring (product-URL, or direct-URL, mode). Keep a list of competitor listing URLs in startUrls and run the scraper nightly. Store each JSON snapshot with its scrapedAt timestamp and diff price, originalPrice, discountPercent and inStock between runs. Price drop greater than 10%? Send a Slack alert. inStock flips from true to false? Flag it as a supply signal. The pricing history you build this way is the core of any competitor-intel dashboard.

- Keyword and trend research (category-URL mode with filters). Point categoryUrl at a specific Etsy category (e.g. /c/bags-and-purses/wallets-and-money-clips/wallets), apply filter combinations (filters.onSale: true, filters.condition: "new", filters.orderBy: "date_desc"), pull tags and materials across a few hundred listings, frequency-count them and sort by the sum of favoritesCount on listings that use each tag. Tags that show up in recently created listings but NOT in older ones are your rising sub-niches.

- Review aggregation for ML and market research (keyword or category mode). Scrape reviews[] across thousands of listings in a vertical (handmade candles, say, or personalised jewellery), feed reviews[].text into a sentiment classifier and use the itemQuality / shipping / customerService sub-ratings as supervised training labels when they're present. Per-review photos (reviews[].photos[]) give you a parallel image corpus if you need visual training data.

- Shop performance benchmarking (shop-URL mode). Point shopUrl at a competitor's shop page (e.g. https://www.etsy.com/shop/TexasValleyLeather) and set maxPagesPerQuery: 5 to paginate through up to five pages of their catalogue; the scraper enumerates the listings that shop currently sells. Compare sellers in the same category by shop.totalSales, shop.openedYear, rating, reviewsCount and isStarSeller.
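Three of these recipes (the dropshipping shortlist, the nightly price diff and the tag scoring) become plain array work once the JSON rows exist. The sketch below is not part of the scraper. It assumes a trimmed-down ProductRow type that carries only the output fields those recipes mention. rankCandidates, diffSnapshots and scoreTags are hypothetical helper names, and the 10% threshold mirrors the alert rule above.

```typescript
// Trimmed-down row shape assumed for this sketch; the scraper's EtsyProduct has many more fields.
type ProductRow = {
  listingId: string;
  price: number | null;
  inStock: boolean;
  rating: number | null;
  favoritesCount: number | null;
  isStarSeller: boolean;
  tags: string[];
};

// Dropshipping shortlist: Star Sellers whose favoritesCount is above the
// median of the whole result set, ranked by favoritesCount × rating.
function rankCandidates(rows: ProductRow[]): ProductRow[] {
  const favs = rows.map((r) => r.favoritesCount ?? 0).sort((a, b) => a - b);
  const mid = Math.floor(favs.length / 2);
  const median =
    favs.length === 0 ? 0 : favs.length % 2 === 1 ? favs[mid] : (favs[mid - 1] + favs[mid]) / 2;
  const score = (r: ProductRow) => (r.favoritesCount ?? 0) * (r.rating ?? 0);
  return rows
    .filter((r) => r.isStarSeller && (r.favoritesCount ?? 0) > median)
    .sort((a, b) => score(b) - score(a));
}

type PriceAlert = { listingId: string; kind: "price_drop" | "out_of_stock"; detail: string };

// Nightly diff between two snapshots, matched on listingId.
function diffSnapshots(prev: ProductRow[], curr: ProductRow[], dropThreshold = 0.1): PriceAlert[] {
  const before = new Map(prev.map((r) => [r.listingId, r] as const));
  const alerts: PriceAlert[] = [];
  for (const now of curr) {
    const old = before.get(now.listingId);
    if (!old) continue; // new listing, nothing to compare against
    if (old.price && now.price !== null && (old.price - now.price) / old.price > dropThreshold) {
      alerts.push({ listingId: now.listingId, kind: "price_drop", detail: `${old.price} -> ${now.price}` });
    }
    if (old.inStock && !now.inStock) {
      alerts.push({ listingId: now.listingId, kind: "out_of_stock", detail: "inStock true -> false" });
    }
  }
  return alerts;
}

// Tag scoring: sum favoritesCount over every listing that uses each tag
// (case-insensitive, counted once per listing), highest total first.
function scoreTags(rows: ProductRow[]): [string, number][] {
  const totals = new Map<string, number>();
  for (const r of rows) {
    for (const tag of new Set(r.tags.map((t) => t.toLowerCase()))) {
      totals.set(tag, (totals.get(tag) ?? 0) + (r.favoritesCount ?? 0));
    }
  }
  return [...totals.entries()].sort((a, b) => b[1] - a[1]);
}
```

The alerts array returned by diffSnapshots is where a Slack webhook or dashboard writer would hook in. That wiring is left out here.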

Why Scrapeless

Scrapeless Scraping Browser gives your scraper a production-grade cloud browser that clears Etsy's DataDome checks out of the box — no stealth plugins, no fingerprint tuning, no proxy rotation scripts to maintain. Connect over a WebSocket endpoint using Puppeteer or Playwright and let the infrastructure handle the anti-bot layer.

Out of the box you get:

- Anti-detection fingerprinting that holds up across long-running sessions

- Residential proxies in 195+ countries (target US, GB, DE pricing separately)

- Automatic CAPTCHA solving when Etsy serves one

- Session recording for debugging selector regressions after the fact

- WebSocket endpoints that support CDP-based frameworks such as Puppeteer and Playwright — no SDK to learn

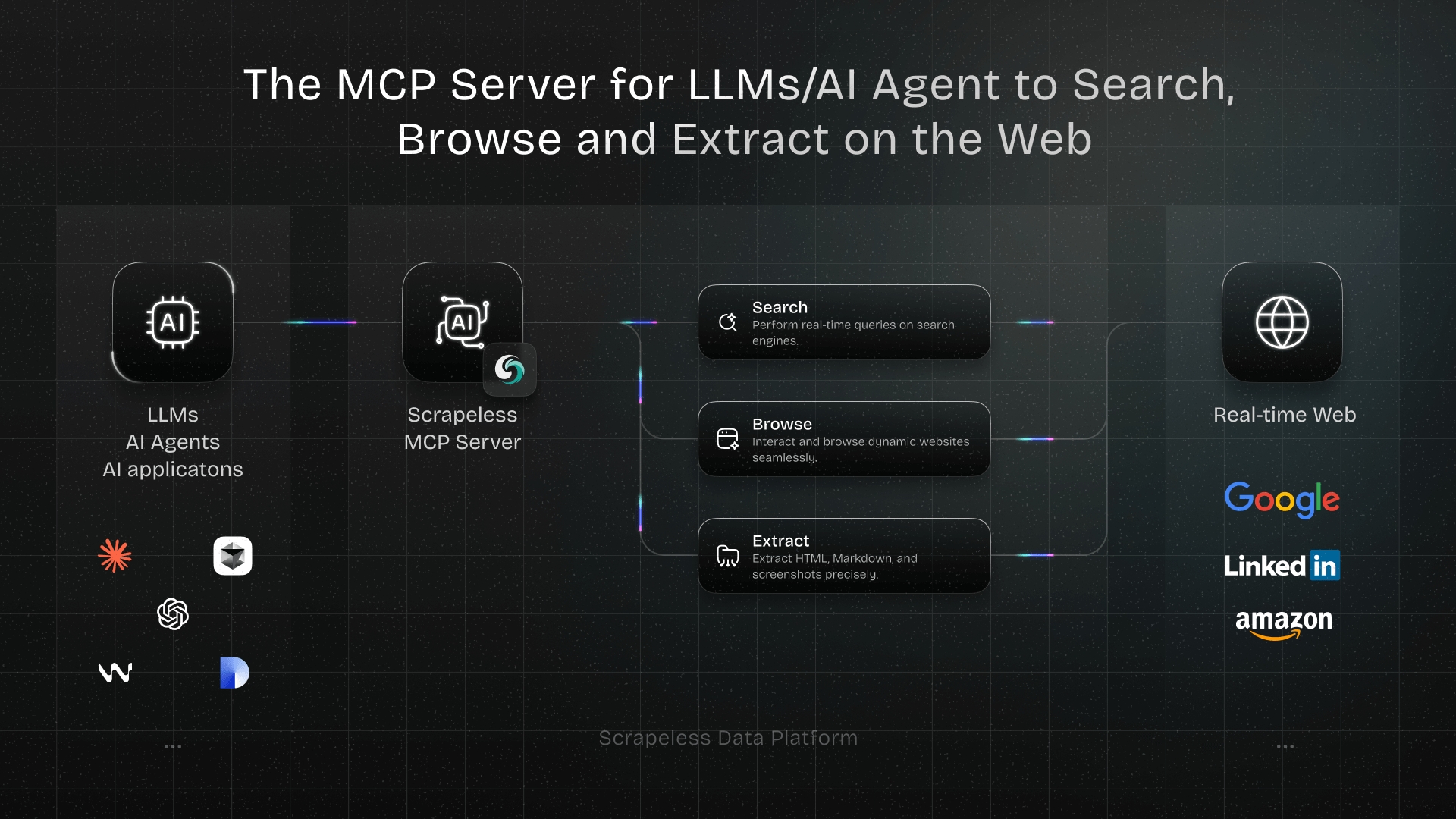

- AI Agent Ready: Seamlessly integrates with tools like the Scrapeless MCP Server to grant your AI agents "eyes and hands" on the web.

The integration is a one-line change: point puppeteer.connect() at a Scrapeless URL instead of a local browser. The rest of the code stays exactly the same — standard CDP, standard selectors, standard workflows. All the DataDome complexity lives on the server side, out of your codebase.

Get your API key on the free plan at app.scrapeless.com.

Prerequisites & Install

Node.js 18 or newer. A Scrapeless API key (free tier covers everything in this guide). Some Puppeteer familiarity helps. No local Chrome required — the browser runs in Scrapeless's cloud.

bash

mkdir etsy-scrapeless-browserless && cd etsy-scrapeless-browserless

npm init -y

npm install puppeteer-core dotenv cheerio

npm install -D tsx typescript @types/node @types/cheerio

puppeteer-core drives the cloud browser; cheerio parses the rendered HTML in Node once each page has finished loading. Splitting browser-side scrolling from Node-side parsing keeps every extractor typed and unit-testable against saved HTML fixtures.

.env:

SCRAPELESS_API_KEY=your_key_here

Step 1 — Connect to the Scraping Browser

One connection helper for the whole scraper. Build a WSS URL with the token, country and TTL, then hand it to puppeteer.connect.

ts

import "dotenv/config";

import puppeteer, { type Browser, type Page } from "puppeteer-core";

import * as cheerio from "cheerio";

// Helper — pull the page's full HTML and parse it with cheerio. The caller

// is responsible for running any browser-side scrolling / waitForFunction

// first so lazy regions are hydrated. After that, parsing stays in Node:

// typed, no stringified evaluate bodies, no `__name` tsx pitfall, easy to

// unit-test against saved HTML fixtures.

async function parseWithCheerio(page: Page): Promise<cheerio.CheerioAPI> {

const html = await page.content();

return cheerio.load(html);

}

type ScraperInput = {

proxyCountry: string; // e.g. "US", "GB", "DE"

sessionTTL: number; // seconds, 60–900 allowed; 600 is a safe default

};

function connectionURL(sessionName: string, cfg: ScraperInput): string {

const token = process.env.SCRAPELESS_API_KEY;

if (!token) throw new Error("SCRAPELESS_API_KEY is not set in .env");

// Scrapeless pins the residential IP for the lifetime of a session by

// default, so every page navigation inside one puppeteer.connect uses the

// same outbound IP. Opening a fresh session (new connection) rolls a new IP,

// which is what the retry loop relies on to route around a flagged IP.

const qs = new URLSearchParams({

token,

proxyCountry: cfg.proxyCountry,

sessionTTL: String(cfg.sessionTTL),

sessionName,

sessionRecording: "true",

// Let Scrapeless own the full desktop fingerprint — UA, screen, timezone

// and language. No manual setViewport / setUserAgent needed.

fingerprint: JSON.stringify({ platform: "Windows" }),

});

return `wss://browser.scrapeless.com/api/v2/browser?${qs.toString()}`;

}

async function openBrowser(sessionName: string, cfg: ScraperInput): Promise<Browser> {

return puppeteer.connect({

browserWSEndpoint: connectionURL(sessionName, cfg),

defaultViewport: null,

});

}

That's the entire Scrapeless-specific surface area: one WSS URL and one puppeteer.connect. One thing worth knowing before you scale this: a single puppeteer.connect session is bound to a single residential IP for its lifetime. This was verified by hitting api.ipify.org three times in a row on the same browser handle, which returned the same IP each time. Opening a fresh session rolls a fresh IP. That's the foundation the retry loop in Step 8 builds on: if a request on this session's IP gets blocked, we close the session, open a new one, get a new IP and try again.

Scrapeless Scraping Browser owns the browser fingerprint at the connection layer — UA, screen size, timezone and language are all handled by the fingerprint: { platform: "Windows" } query parameter on the WSS URL. No manual setViewport or setUserAgent calls needed. The retry loop in Step 8 absorbs transient blocks on top.

The only browser-side setup is a one-line tsx compatibility stub:

ts

async function prepPage(page: Page): Promise<void> {

// Stub the tsx-injected __name helper so page.evaluate function bodies

// don't crash with "__name is not defined" inside the browser context.

await page.evaluateOnNewDocument(

"(function(){ globalThis.__name = function(f){ return f; }; })()",

);

}

Session warm-up

Before navigating to a search or shop page, the scraper loads the Etsy homepage once to establish a valid browser session. Without this step, /search and /shop endpoints return 403 on a cold session:

ts

const ETSY_COUNTRY_PATHS: Record<string, string> = {

US: "", DE: "de/", GB: "uk/", FR: "fr/", IT: "it/", ES: "es/",

NL: "nl/", CA: "ca/", AU: "au/", JP: "jp/", IN: "in/",

};

async function warmUpSession(page: Page, proxyCountry: string): Promise<void> {

const path = ETSY_COUNTRY_PATHS[proxyCountry] ?? "";

try {

await page.goto(`https://www.etsy.com/${path}`, {

waitUntil: "domcontentloaded",

timeout: 30000,

});

} catch {

// Timeout or network error is fine — the cookies are set by then.

}

await dismissEtsyConsent(page);

await delay(1500);

}

The country-specific path matters. A DE proxy hitting etsy.com/de/ returns 200 and sets the correct regional session cookies, while etsy.com/ with a DE proxy returns 403 and the session stays locked. This was verified across US (64 listings), DE (60 listings) and GB (61 listings): all three returned search results on the first attempt when the warmup matched the proxy country. The scraper calls warmUpSession once per browser session, before the first collectSearchResults call.

Step 2 — Four Discovery Modes

The scraper accepts four independent ways to find listings, all on the same CONFIG block. Pick the one that matches the upstream question and set exactly one of startUrls, shopUrl, categoryUrl, or searchQuery. If more than one is set, precedence is shopUrl → categoryUrl → searchQuery → startUrls.

Product URL mode (direct-URL) — known listings, nightly re-scrapes, competitor snapshots:

ts

const CONFIG: ScraperInput = {

startUrls: [

"https://www.etsy.com/listing/547491922/leather-walletwalletman-leather",

"https://www.etsy.com/listing/1022283131/personalized-slim-wallet-fathers-day",

],

maxProducts: 2,

// ...other defaults

};

Category URL mode — whole-category crawls with structured filters:

ts

const CONFIG: ScraperInput = {

categoryUrl: "https://www.etsy.com/c/bags-and-purses/wallets-and-money-clips/wallets",

filters: {

onSale: true,

freeShipping: true,

minPrice: 20,

maxPrice: 60,

orderBy: "most_relevant",

},

maxPagesPerQuery: 2,

maxProducts: 20,

};

Keyword search mode — niche discovery, trend research, volume listing pulls:

ts

const CONFIG: ScraperInput = {

searchQuery: "leather wallet",

expandStrategy: "keywords", // "none" | "keywords" | "prices"

expandKeywords: ["mens", "womens", "vintage"], // prefixed to the base query when expandStrategy is "keywords"

maxProducts: 20,

};

Shop URL mode — enumerate every listing in a specific shop for benchmarking / competitor analysis:

ts

const CONFIG: ScraperInput = {

shopUrl: "https://www.etsy.com/shop/TexasValleyLeather",

maxPagesPerQuery: 5,

maxProducts: 40,

};

All four modes feed the same per-listing enrichment pipeline (Steps 5–7) and emit the same 30-field schema in Step 8.
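The precedence rule from the top of this step fits in a few lines. This is a hypothetical sketch. DiscoveryInput covers only the four discovery fields shown in the CONFIG examples, and resolveDiscoveryMode is an illustrative name rather than a function from index.ts.

```typescript
// Hypothetical subset of CONFIG: only the four discovery fields.
type DiscoveryInput = {
  startUrls?: string[];
  shopUrl?: string;
  categoryUrl?: string;
  searchQuery?: string;
};

type DiscoveryMode =
  | { mode: "shop"; url: string }
  | { mode: "category"; url: string }
  | { mode: "search"; query: string }
  | { mode: "product"; urls: string[] };

// Pick the discovery mode, honouring the precedence
// shopUrl → categoryUrl → searchQuery → startUrls when several are set.
function resolveDiscoveryMode(cfg: DiscoveryInput): DiscoveryMode {
  if (cfg.shopUrl) return { mode: "shop", url: cfg.shopUrl };
  if (cfg.categoryUrl) return { mode: "category", url: cfg.categoryUrl };
  if (cfg.searchQuery) return { mode: "search", query: cfg.searchQuery };
  if (cfg.startUrls && cfg.startUrls.length > 0) return { mode: "product", urls: cfg.startUrls };
  throw new Error("Set one of shopUrl, categoryUrl, searchQuery or startUrls");
}
```

Returning a discriminated union lets the caller switch on mode and get the right field (url, query or urls) type-checked in each branch.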

Structured filters

Eight optional filter keys compose with searchQuery or categoryUrl. Set whichever apply, leave the rest off:

| Key | Values | Effect |
|---|---|---|
| onSale | true | Only listings currently marked on sale |
| freeShipping | true | Only listings shipping free to the proxy country |
| customizable | true | Only personalizable listings |
| shipsTo | ISO code, e.g. "US" | Must ship to that country |
| minPrice / maxPrice | number | Price range (Etsy's native filter) |
| condition | "new", "vintage" | Etsy condition filter |
| orderBy | "most_relevant", "date_desc", "price_asc", "price_desc", "highest_reviews" | Result ordering |
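The following is a minimal sketch of how the filter object can be layered onto a category or search URL with the standard URL API. Only min and max are confirmed, by the searchUrlForQuery helper in Step 3. Every other Etsy parameter name in FILTER_PARAMS is a placeholder assumption. Check each one against the URL Etsy produces when you toggle that filter in the browser before relying on it. applyFilters is a hypothetical name.

```typescript
// Shape of the filters block, following the table above.
type Filters = {
  onSale?: boolean;
  freeShipping?: boolean;
  customizable?: boolean;
  shipsTo?: string;
  minPrice?: number;
  maxPrice?: number;
  condition?: "new" | "vintage";
  orderBy?: "most_relevant" | "date_desc" | "price_asc" | "price_desc" | "highest_reviews";
};

// ASSUMPTION: only "min" and "max" come from the guide (Step 3); the other
// query-parameter names are placeholders to verify against live Etsy URLs.
const FILTER_PARAMS = {
  onSale: "is_discounted",
  freeShipping: "free_shipping",
  customizable: "customizable",
  shipsTo: "ship_to",
  minPrice: "min",
  maxPrice: "max",
  condition: "condition",
  orderBy: "order",
} as const;

// Layer the filters onto an existing search or category URL, keeping any
// query parameters (such as q=) that are already present.
function applyFilters(baseUrl: string, filters: Filters): string {
  const url = new URL(baseUrl);
  for (const key of Object.keys(FILTER_PARAMS) as (keyof Filters)[]) {
    const value = filters[key];
    if (value === undefined || value === false) continue; // unset or disabled: add nothing
    url.searchParams.set(FILTER_PARAMS[key], String(value));
  }
  return url.toString();
}
```

Using URL.searchParams rather than string concatenation keeps the existing q= value intact and handles encoding.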

Pagination control

Set maxPagesPerQuery: N to explicitly iterate ?page=1..N on each discovery URL. Without it, the scraper scrolls the initial page to target and stops as soon as maxProducts unique listings are collected. Use explicit pagination when you want predictable wide sweeps (e.g. "scrape the first 5 pages of this category even if that's 200+ listings").
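Here is a sketch of the explicit-pagination path. It rests on two assumptions the guide doesn't spell out. First, maxProducts still caps the total even when maxPagesPerQuery is set. Second, an empty page means the sweep has run past the last page. pageUrls and paginate are hypothetical names. collect stands in for a function shaped like collectSearchResults from Step 4, so the loop can be exercised without a browser.

```typescript
type SearchHit = { listingId: string | null; url: string; title: string | null; rank: number };

// ?page=1..N variants of a discovery URL. Page 1 is left without a page
// parameter, as searchUrlForQuery in Step 3 does.
function pageUrls(baseUrl: string, maxPages: number): string[] {
  const urls: string[] = [];
  for (let p = 1; p <= maxPages; p++) {
    const u = new URL(baseUrl);
    if (p > 1) u.searchParams.set("page", String(p));
    urls.push(u.toString());
  }
  return urls;
}

// Walk the pages in order, dedupe by URL and stop once maxProducts unique
// listings are collected or a page comes back empty.
async function paginate(
  baseUrl: string,
  maxPages: number,
  maxProducts: number,
  collect: (url: string) => Promise<SearchHit[]>,
): Promise<SearchHit[]> {
  const out: SearchHit[] = [];
  const seen = new Set<string>();
  for (const url of pageUrls(baseUrl, maxPages)) {
    const hits = await collect(url);
    if (hits.length === 0) break; // assumed: an empty page means we ran past the end
    for (const h of hits) {
      if (seen.has(h.url)) continue;
      seen.add(h.url);
      out.push({ ...h, rank: out.length + 1 }); // re-rank across pages
      if (out.length >= maxProducts) return out;
    }
  }
  return out;
}
```

In the scraper, collect would be something like (url) => collectSearchResults(page, url, target), run one page at a time on the same session.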

Step 3 — Multi-Query Expansion (the Etsy "result cap" workaround)

Etsy's consumer UI caps pagination well before most niches are exhausted, and per-IP rate limiting kicks in quickly under high request volume. As a result, any single keyword only ever surfaces one ranking slice. To exhaust a niche, split the base query along an axis (keywords or price buckets) and dedupe the results by listingId.

For "leather wallet", a keyword expansion looks like:

ts

function searchUrlForQuery(query: string, page = 1, priceMin?: number, priceMax?: number) {

const params = new URLSearchParams({ q: query });

if (page > 1) params.set("page", String(page));

if (priceMin !== undefined) params.set("min", String(priceMin));

if (priceMax !== undefined) params.set("max", String(priceMax));

return `https://www.etsy.com/search?${params.toString()}`;

}

type ExpandStrategy = "keywords" | "prices" | "none";

function multiQueryExpand(

base: string,

cfg: { expandStrategy: ExpandStrategy; expandKeywords: string[]; priceBuckets: [number, number][] }

) {

if (cfg.expandStrategy === "keywords") {

const queries = [base, ...cfg.expandKeywords.map((k) => `${k} ${base}`)];

return queries.map((q) => searchUrlForQuery(q));

}

if (cfg.expandStrategy === "prices") {

return cfg.priceBuckets.map(([min, max]) => searchUrlForQuery(base, 1, min, max));

}

return [searchUrlForQuery(base)];

}

Running ["mens", "womens", "vintage"] against "leather wallet" produces four searches: the base query plus one prefixed variant per keyword ("mens leather wallet" and so on). Run them, collect listing URLs and dedupe by the numeric ID buried in the URL (/listing/1051861316/...). Set maxProducts high enough (a few dozen to a few hundred) to actually spread across all the variants. If the target is small, the scraper short-circuits after the first query that has results, skipping the dedupe work entirely.

Price bucketing works the same way — different buckets surface different ranking slices because Etsy's "best match" is influenced by price relative to others in the result set.
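Merging the expanded queries comes down to keying on the numeric listing ID. The slug after the ID is descriptive text, so the ID is the safer key when the same listing turns up under different queries. mergeExpandedResults and listingIdFromUrl are hypothetical names for this sketch; SearchHit matches the type defined in Step 4.

```typescript
type SearchHit = { listingId: string | null; url: string; title: string | null; rank: number };

// Numeric listing ID from a /listing/<id>/<slug> URL, or null.
function listingIdFromUrl(url: string): string | null {
  const m = url.match(/\/listing\/(\d+)/);
  return m ? m[1] : null;
}

// Merge per-query hit lists in order, keeping the first occurrence of each
// listing ID (falling back to the URL when no ID can be parsed) and
// stopping at maxProducts.
function mergeExpandedResults(perQuery: SearchHit[][], maxProducts: number): SearchHit[] {
  const seen = new Set<string>();
  const merged: SearchHit[] = [];
  for (const hits of perQuery) {
    for (const h of hits) {
      const id = h.listingId ?? listingIdFromUrl(h.url);
      const key = id ?? h.url;
      if (seen.has(key)) continue;
      seen.add(key);
      merged.push({ ...h, listingId: id, rank: merged.length + 1 });
      if (merged.length >= maxProducts) return merged;
    }
  }
  return merged;
}
```

Because earlier queries win ties, put the base query first. Its ranking then determines the order of listings that every variant also returns.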

Step 4 — Collect Listing URLs From Each Search

Scroll the results page enough to trigger lazy-loaded cards, then grab every a[href*="/listing/"] link inside a div.listing-link (with [data-listing-id] as fallback when Etsy A/B-tests the class name).

ts

type SearchHit = { listingId: string | null; url: string; title: string | null; rank: number };

const delay = (ms: number) => new Promise((r) => setTimeout(r, ms));

async function collectSearchResults(page: Page, searchUrl: string, target: number, pageTimeoutMs = 60000): Promise<SearchHit[]> {

await page.goto(searchUrl, { waitUntil: "domcontentloaded", timeout: pageTimeoutMs });

await dismissEtsyConsent(page);

await delay(2000);

// Scroll a few times to trigger lazy-loaded cards. Each pass takes a

// cheerio snapshot of the current DOM to count cards — as soon as we've

// got enough, we stop scrolling.

for (let i = 0; i < 6; i++) {

const $peek = await parseWithCheerio(page);

if ($peek("[data-listing-id], div.listing-link").length >= target) break;

await page.evaluate(() => window.scrollBy(0, 1200));

await delay(900);

}

// Parse the settled DOM with cheerio — no `page.evaluate` round-trip,

// no stringified function bodies, just straight selector traversal.

const $ = await parseWithCheerio(page);

let cards = $("div.listing-link");

if (cards.length === 0) cards = $("[data-listing-id]");

const hits: SearchHit[] = [];

const seen = new Set<string>();

cards.each((_, card) => {

const link = $(card).find('a[href*="/listing/"]').first();

if (!link.length) return;

const href = link.attr("href") || "";

const absolute = href.startsWith("http") ? href : `https://www.etsy.com${href.startsWith("/") ? "" : "/"}${href}`;

const url = absolute.split("?")[0];

if (!url || seen.has(url)) return;

seen.add(url);

const titleEl = $(card).find("h3").first();

const title = titleEl.length ? titleEl.text().trim() : (link.attr("title") || null);

const idMatch = url.match(/\/listing\/(\d+)/);

const listingId = idMatch ? idMatch[1] : null;

hits.push({ listingId, url, title, rank: hits.length + 1 });

});

return hits;

}

Scrolling stays on page.evaluate because it's a live DOM action (triggering Etsy's lazy-load), but every piece of parsing runs through cheerio on a page.content() snapshot. That's the same pattern used by all six enrichment extractors in Steps 6–7.

The dismissEtsyConsent call is there for non-US sessions where Etsy serves a "Cookies and Privacy" gate before the page renders. The function looks for any button labelled "Accept all" / "Reject all" / equivalent in a few languages and clicks it.
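The label-matching half of dismissEtsyConsent can live in a pure function, which makes it testable without a browser. This is a sketch, not the guide's implementation. findConsentButton is a hypothetical name. The non-English labels are common translations assumed for illustration, not strings confirmed against Etsy's consent dialog.

```typescript
// ASSUMPTION: common "Accept all" / "Reject all" translations, not strings
// confirmed against Etsy's dialog; extend from what session recordings show.
const CONSENT_LABELS = [
  "accept all", "reject all", // English
  "alle akzeptieren", "alle ablehnen", // German
  "tout accepter", "tout refuser", // French
  "accetta tutto", "rifiuta tutto", // Italian
  "aceptar todo", "rechazar todo", // Spanish
  "alles accepteren", "alles weigeren", // Dutch
];

// Index of the first button whose text starts with a consent label
// (case- and whitespace-insensitive), or -1 when no consent gate is showing.
function findConsentButton(buttonTexts: string[]): number {
  const norm = (s: string) => s.replace(/\s+/g, " ").trim().toLowerCase();
  return buttonTexts.findIndex((t) => CONSENT_LABELS.some((label) => norm(t).startsWith(label)));
}
```

In the scraper, you could gather the button texts with page.$$eval("button", (els) => els.map((e) => e.textContent ?? "")). Then click the element at the returned index from page.$$("button"), provided the DOM doesn't change between the two calls.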

Step 5 — Navigate to Each Listing

Etsy's /listing/<id>/ URLs render the full listing page on direct navigation, so no click-through step is required: the scraper calls page.goto(listingUrl) directly. DataDome does, however, return HTTP 403 to a meaningful fraction of fresh proxy IPs on this subtree. To handle that, the navigation wrapper checks the response status, fast-fails on 403/429 and throws if the h1 never appears. Each of those conditions triggers the outer retry loop, which opens a fresh session on a new residential IP.

ts

const resp = await page.goto(hit.url, { waitUntil: "domcontentloaded", timeout: 60000 });

const status = resp?.status() ?? 0;

if (status === 403 || status === 429) {

throw new Error(`blocked: HTTP ${status} on ${hit.url}`);

}

await dismissEtsyConsent(page);

try {

await page.waitForSelector("h1", { timeout: 15000 });

} catch {

// No h1 after 15 s almost always means a DataDome challenge or redirect page.

// Throw so the retry loop opens a fresh session (= fresh residential IP).

throw new Error(`no h1 on ${hit.url} — likely bot-challenge page`);

}

await delay(1500);

Then trigger lazy loading by scrolling the entire page in chunks. Description, materials and the shipping section all load on scroll.

Step 6 — Extract the Overview Fields

The extractor has a two-phase structure that repeats in every extractor below: browser-side (scroll + waitForFunction to hydrate lazy regions) → Node-side (pull the page's HTML once via page.content() then parse with cheerio). That split gives us the live-DOM behaviour when we need it and the typed, testable server-side parsing when we don't.

ts

async function extractOverview(page: Page): Promise<Partial<EtsyProduct>> {

// Browser-side: scroll in chunks so description / materials / shipping

// lazy-load, then wait for the buy-box to hydrate past the "Loading"

// placeholder.

await page.evaluate(`(function() {

var step = 500, total = document.body.scrollHeight, current = 0;

var iv = setInterval(function() {

current += step;

window.scrollTo(0, current);

if (current >= total) clearInterval(iv);

}, 200);

})()`);

await delay(3500);

try {

await page.waitForFunction(

// Wait until any plausible price wrapper contains a numeric value

// (not the "Loading" placeholder Etsy briefly displays). Casting a

// wider net than just the buy-box wrapper improves hit rate on slow

// listings where the price renders into .currency-value first.

`(function(){

var sels = [

"[data-selector='price-only'] span.currency-value",

"div[data-buy-box-region='price'] span.currency-value",

"p[class*='price'] span.currency-value",

"span.currency-value"

];

for (var i = 0; i < sels.length; i++) {

var el = document.querySelector(sels[i]);

if (el && /^\\s*\\$?\\d/.test((el.textContent || '').trim())) return true;

}

return false;

})()`,

{ timeout: 15000 },

);

} catch { /* extractor still tries its best below */ }

// Node-side: pull the rendered HTML once and parse with cheerio.

const $ = await parseWithCheerio(page);

const title = $("h1").first().text().trim() || null;

// Price — three-step cascade. (1) prefer the explicit `[data-selector='price-only']`

// subtree Etsy marks as the current price; (2) fall back to the first

// `span.currency-value` whose ancestors are NOT strikethrough / original-price

// wrappers; (3) last-resort body-text regex.

const isOriginalPriceWrapper = (el: any) =>

$(el).closest("[class*='strikethrough'], [class*='original'], s, .wt-text-strikethrough").length > 0;

let price: string | null = null;

const priceOnly = $("[data-selector='price-only'] span.currency-value").first();

if (priceOnly.length && /^\d/.test(priceOnly.text().trim())) {

price = priceOnly.text().trim();

}

if (!price) {

$("span.currency-value").each((_, el) => {

if (price) return false;

if (isOriginalPriceWrapper(el)) return;

const t = $(el).text().trim();

if (t && /^\d/.test(t)) price = t;

});

}

if (!price) {

const bodyPriceMatch = $("body").text().match(/(?:Now\s+)?Price:?\s*([$£€]?[\d.,]+)/i);

if (bodyPriceMatch) price = bodyPriceMatch[1].trim();

}

// Rating — any aria-label mentioning "star", "rating", or "out of".

let rating: number | null = null;

$("[aria-label*='star' i], [aria-label*='rating' i]").each((_, n) => {

if (rating !== null) return false;

const a = $(n).attr("aria-label") || "";

const rm = a.match(/(\d+(?:\.\d+)?)\s*(?:out|star|rating)/i);

if (rm) rating = parseFloat(rm[1]);

});

// Status badges — regex the page body.

const bodyText = $("body").text();

const isBestseller = /Bestseller/i.test(bodyText);

const isFreeShipping = /free shipping/i.test(bodyText);

const isStarSeller = /Star Seller/i.test(bodyText);

const inStock = !/out of stock|sold out/i.test(bodyText);

// Shop sidecar.

const shopLink = $("a[href*='/shop/']").first();

const shopName = shopLink.text().trim() || null;

const shopUrl = (shopLink.attr("href") || "").split("?")[0] || null;

return { title, _price_raw: price, rating, isBestseller, isFreeShipping, isStarSeller, inStock,

shop: { name: shopName, url: shopUrl } } as Partial<EtsyProduct>;

}

The _price_raw prefix is a convention: downstream, enrichProduct feeds it through extractNumber and then deletes the raw-string field before the final JSON is emitted. The snippet is abridged. The full extractOverview in index.ts also pulls currency, discountPercent, reviewsCount, favoritesCount, description, materials, itemDetails, shippingFrom, processingTime, tags, listedDate and the rest of the shop fields, using the same cheerio-first pattern throughout with more selectors.

A small currency-tolerant extractNumber helper turns "$24.99", "24,99 €", or "1,234" into a clean number — Etsy serves prices in the local format depending on the proxy country and you don't want your numeric fields to be strings.
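The guide describes extractNumber but doesn't show it. One way to write it is sketched below. It assumes a price uses at most one decimal mark, and that a lone separator followed by exactly three digits is thousands grouping. So "1.234" reads as 1234, which is right for German formatting but would misread a genuine three-decimal value. The real helper in index.ts may use different rules.

```typescript
// Hypothetical sketch; the real extractNumber in index.ts is not shown.
function extractNumber(raw: string | null | undefined): number | null {
  if (!raw) return null;
  const s = raw.replace(/[^\d.,]/g, ""); // drop currency symbols, spaces and letters
  if (!/\d/.test(s)) return null;
  const lastDot = s.lastIndexOf(".");
  const lastComma = s.lastIndexOf(",");
  let normalized: string;
  if (lastDot !== -1 && lastComma !== -1) {
    // Both separators present: whichever appears last is the decimal mark.
    const decimal = lastDot > lastComma ? "." : ",";
    const thousands = decimal === "." ? "," : ".";
    normalized = s.split(thousands).join("").replace(decimal, ".");
  } else if (lastDot !== -1 || lastComma !== -1) {
    const sep = lastDot !== -1 ? "." : ",";
    const parts = s.split(sep);
    // Repeated separators ("1,234,567") or a single three-digit tail ("1,234")
    // are treated as thousands grouping; anything else as a decimal mark.
    normalized =
      parts.length > 2 || parts[parts.length - 1].length === 3 ? parts.join("") : parts.join(".");
  } else {
    normalized = s;
  }
  const n = Number.parseFloat(normalized);
  return Number.isFinite(n) ? n : null;
}
```

Returning null rather than 0 for unparseable input keeps "price missing" distinguishable from "free" in the output JSON.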

Step 7 — Reviews, Images, Shop Sidecar, Variations, Breadcrumbs, Related Searches

Reviews. Etsy's review cards live in div[data-review-region] (with div[class*='review-card'] and div[class*='review-item'] as fallbacks for DOM revisions). Scroll into the reviews region, then map each card to author / rating / text / date plus the three sub-ratings.

ts

async function extractReviews(page: Page, max: number): Promise<EtsyReview[]> {

// Browser-side: scroll into the reviews region so lazy review cards render.

for (let i = 0; i < 8; i++) {

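// Find the section and scroll it into view in one call; checking for

// existence alone would break out of the loop before any scrolling happened.

const found = await page.evaluate(

`(function(){ var el = document.querySelector('[data-reviews-section], div#reviews, div[class*="reviews"]'); if (!el) return false; el.scrollIntoView({ block: "start" }); return true; })()`,

);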

if (found) break;

await page.evaluate(() => window.scrollBy(0, 700));

await delay(700);

}

await delay(1500);

// Node-side: parse the now-hydrated page with cheerio.

const $ = await parseWithCheerio(page);

const out: EtsyReview[] = [];

$(

"div[data-review-region], div[class*='review-card'], div[class*='review-item'], li[class*='review']",

).each((_, card) => {

if (out.length >= max) return false;

const $card = $(card);

const cardText = $card.text();

// Skip aggregate containers that hold many reviews at once.

if (cardText.length > 6000) return;

const author = $card.find("a[href*='/people/'], strong, p[class*='name']").first().text().trim() || null;

// Rating: `data-rating` attribute first, then aria-label.

let rating: number | null = null;

const $starEl = $card.find("[aria-label*='star' i], [data-rating]").first();

if ($starEl.length) {

const dr = $starEl.attr("data-rating");

if (dr) rating = parseFloat(dr);

else {

const rm = ($starEl.attr("aria-label") || "").match(/(\d+(?:\.\d+)?)/);

if (rm) rating = parseFloat(rm[1]);

}

}

// Text: dedicated selectors, then longest paragraph in the card.

let text: string | null =

$card.find("p[class*='review-text'], div[class*='review-text'], div[id*='review-content']")

.first().text().trim() || null;

if (!text) {

let longest = "";

$card.find("p").each((_, p) => {

const pt = $(p).text().trim();

if (pt.length > longest.length && pt.length > 20) longest = pt;

});

text = longest || null;

}

// Date: dedicated element first, then a month-name or numeric regex.

let date: string | null =

$card.find("span[class*='date'], time, p[class*='date']").first().text().trim() || null;

if (!date) {

const dm = cardText.match(/(\w{3,9}\s+\d{1,2},?\s+\d{4}|\d{1,2}\/\d{1,2}\/\d{2,4})/);

if (dm) date = dm[1];

}

// Sub-ratings — labelled rows inside the card. Match label, parse adjacent number.

const subRating = (label: string): number | null => {

let val: number | null = null;

$card.find("span, div, li").each((_, row) => {

if (val !== null) return false;

const t = $(row).text().toLowerCase();

if (t.includes(label) && t.length < 60) {

const sm = t.match(/(\d+(?:\.\d+)?)/);

if (sm) val = parseFloat(sm[1]);

}

});

return val;

};

// Reviewer-uploaded photos — same `il_fullxfull` upgrade as listing images.

const photos: string[] = [];

const photoSeen = new Set<string>();

$card.find("img[src*='etsystatic'], img[data-src*='etsystatic']").each((_, img) => {

if (photos.length >= 6) return false;

let psrc = $(img).attr("src") || $(img).attr("data-src") || "";

if (!psrc) return;

psrc = psrc.replace(/il_\d+x(?:\d+|N)/, "il_fullxfull").replace(/_\d+x\d+\./, "_1024x1024.");

if (!photoSeen.has(psrc)) { photoSeen.add(psrc); photos.push(psrc); }

});

out.push({

author, rating, text, date,

itemQuality: subRating("item quality"),

shipping: subRating("shipping"),

customerService: subRating("customer service"),

photos,

});

});

return out;

}

The four card-selector alternatives cover Etsy's ongoing A/B revisions. [data-review-region] is the current primary selector; [class*='review-card'], [class*='review-item'] and li[class*='review'] are older and newer variants that still show up depending on the account and the listing. The cardText length guard at the top skips wrapper elements that accidentally match and would otherwise return an entire batch of reviews concatenated as one.

Images. Etsy serves thumbnails by default. Upgrade them to full-resolution by replacing the size suffix in the URL: il_75x75 → il_fullxfull, or _300x300.jpg → _1024x1024.jpg. Same image, much higher resolution, no extra requests.

ts

async function extractImages(page: Page, max: number): Promise<string[]> {

const $ = await parseWithCheerio(page);

const urls: string[] = [];

const seen = new Set<string>();

$("img[src*='etsystatic'], img[data-src*='etsystatic']").each((_, img) => {

if (urls.length >= max) return false;

let src = $(img).attr("src") || $(img).attr("data-src") || "";

if (!src) return;

// Upgrade thumbnail size suffix to full resolution where possible.

src = src.replace(/il_\d+x(?:\d+|N)/, "il_fullxfull").replace(/_\d+x\d+\./, "_1024x1024.");

if (!seen.has(src)) { seen.add(src); urls.push(src); }

});

return urls;

}

The img[data-src*='etsystatic'] pattern in the selector matters: Etsy lazy-loads gallery thumbnails behind data-src and doesn't populate src until they enter the viewport.

Variations, breadcrumbs, related searches. Three additional extractors run after reviews and images, each wrapped in its own try/catch so a missed selector degrades to an empty array rather than breaking the row:

- extractVariations(page): pulls the size / colour / personalisation options the seller exposes, as a {name, options[]}[] list. It populates from HTML select elements inside [data-selector*='variation'] subtrees.

- extractBreadcrumbs(page): grabs the category trail (e.g. ["Homepage", "Bags & Purses", "Wallets & Money Clips", "Wallets"]) from anchor tags carrying ref=breadcrumb_listing in their href. Etsy doesn't wrap these in a nav element with aria-label="breadcrumb"; they're plain links with a ref parameter.

- extractRelatedSearches(page): reads the "Explore related searches" link chips Etsy renders at the bottom of listing pages. The extractor re-scrolls to the page foot and waits for the lazy-loaded tags section before reading link text. Etsy A/B-tests image-only chips (no text) against text-labelled chips, so expect this field to populate on roughly half of listings.
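The breadcrumb rule (keep anchors whose href carries ref=breadcrumb_listing) can be isolated from cheerio as a function over href/text pairs. In the scraper, those pairs would come from a $("a") pass. Here, Anchor and breadcrumbTrail are hypothetical names, and the sketch assumes the ref parameter's value is exactly breadcrumb_listing.

```typescript
// href/text pairs, e.g. collected from $("a") with .attr("href") and .text().
type Anchor = { href: string; text: string };

// Keep links whose ref query parameter is breadcrumb_listing, in document
// order, with whitespace collapsed and empty labels and repeats dropped.
function breadcrumbTrail(anchors: Anchor[]): string[] {
  const trail: string[] = [];
  for (const a of anchors) {
    let ref: string | null = null;
    try {
      ref = new URL(a.href, "https://www.etsy.com").searchParams.get("ref");
    } catch {
      continue; // malformed href: skip it
    }
    const label = a.text.replace(/\s+/g, " ").trim();
    if (ref === "breadcrumb_listing" && label && !trail.includes(label)) trail.push(label);
  }
  return trail;
}
```

Resolving relative hrefs against the Etsy origin lets the same check handle "/c/..." and absolute links alike.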

Listed date and shop-level totals are pulled inside extractOverview alongside the basic fields. listedDate parses the "Listed on Mon DD, YYYY" string that Etsy displays near the item details — note this reflects the most recent relist/auto-renew date, not the original creation date. shop.reviewsCountShop is populated only when Etsy explicitly disambiguates the number as shop-level (many listing layouts don't render it — null is the honest answer there).
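Parsing the "Listed on Mon DD, YYYY" string into an ISO date once means downstream diffs can compare dates as plain strings. The minimal sketch below assumes English month names; a DE or FR session renders a localised string this regex won't match. It builds the ISO string directly rather than through Date, which avoids time-zone shifts. parseListedDate is a hypothetical name.

```typescript
const MONTHS: Record<string, number> = {
  jan: 1, feb: 2, mar: 3, apr: 4, may: 5, jun: 6, jul: 7, aug: 8, sep: 9, oct: 10, nov: 11, dec: 12,
};

// "Listed on Mar 4, 2024" -> "2024-03-04". Returns null when the text
// doesn't contain an English "Listed on <month> <day>, <year>" phrase.
function parseListedDate(text: string): string | null {
  const m = text.match(/Listed on\s+([A-Za-z]{3,9})\.?\s+(\d{1,2}),?\s+(\d{4})/i);
  if (!m) return null;
  const month = MONTHS[m[1].slice(0, 3).toLowerCase()];
  const day = Number(m[2]);
  if (!month || day < 1 || day > 31) return null;
  return `${m[3]}-${String(month).padStart(2, "0")}-${String(day).padStart(2, "0")}`;
}
```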

Reviewer-uploaded photos live inside each review card. extractReviews captures up to six photos per review via the same il_fullxfull upgrade used for listing images, giving downstream code a parallel image corpus for visual analysis or review verification.

Step 8 — Resilient Per-Product Enrichment & Error Handling

Scraping one listing is straightforward. Scraping a hundred in a row is where transient failures start to appear — Etsy occasionally serves a stale cache, the h1 doesn't populate, a single proxy request times out. Three defensive layers handle these at scale:

Fresh browser per product. After the search hits are collected, open a new Scrapeless Scraping Browser session for each enrichment. State doesn't leak between products and a session-level error doesn't poison the rest of the run. Each fresh session rolls a new residential IP, so when DataDome returns a 403 on one IP, the next attempt lands on a different one.

Up to cfg.maxRetries attempts (default 10, counting the first try) with a growing backoff. On a clean run most products succeed on attempt 1; on a bad-IP run it can take 3–6 attempts before the session lands on a clean residential IP. A generous retry budget can be the difference between a 50% hit rate and a 100% one.

Categorised error taxonomy. categorizeError(err) maps every raw failure (HTTP 403/404/429, ERR_SSL_*, ERR_TUNNEL_*, h1-missing, nav timeout, WSS handshake) to one of eight ScrapeErrorKind values with a retryable: boolean flag. Retryable errors feed the backoff loop; non-retryable ones (e.g. HTTP 404 on a stale listing) short-circuit immediately. When all attempts exhaust, the product ships with error: { kind, message, attempts } populated so downstream code can tell exactly why a row came back empty.

```ts
// Inside the main loop, once for each search hit h (indexed by i):
const MAX_ATTEMPTS = cfg.maxRetries; // default 10; counts total attempts, not extra retries

let p: EtsyProduct | null = null;
let lastError: ScrapeErrorInfo | null = null;
let attemptsUsed = 0;

for (let attempt = 1; attempt <= MAX_ATTEMPTS; attempt++) {
  attemptsUsed = attempt;

  // Fresh cloud-browser session (and residential IP) for every attempt.
  let eb: Awaited<ReturnType<typeof openBrowser>>;
  try {
    eb = await openBrowser(`etsy-enrich-${i}-${attempt}-${Date.now()}`, cfg);
  } catch (e: any) {
    lastError = categorizeError(new Error(`openBrowser failed: ${e?.message ?? e}`));
    log(`  attempt ${attempt}/${MAX_ATTEMPTS} — ${lastError.kind}: ${lastError.message.slice(0, 120)}`);
    if (!lastError.retryable) break;
    if (attempt < MAX_ATTEMPTS) await delay(Math.max(cfg.retryInitialBackoffMs, 3000));
    continue;
  }

  try {
    p = await enrichProduct(eb, h, cfg);
    if (p.title) { lastError = null; break; } // success: stop retrying
    // Page loaded but no <h1>: usually a soft challenge page, so retry.
    lastError = categorizeError(new Error(`no h1 on ${h.url} — title was null`));
  } catch (e: any) {
    lastError = categorizeError(e);
    log(`  attempt ${attempt}/${MAX_ATTEMPTS} — ${lastError.kind}: ${lastError.message.slice(0, 120)}`);
    if (!lastError.retryable) break; // non-retryable: 404, etc. Fail fast.
  } finally {
    // The session may already be dead; ignore errors on close.
    await eb.close().catch(() => {});
  }

  if (attempt < MAX_ATTEMPTS) {
    // Configurable growing backoff. Defaults (3000, 1500, 500) yield
    // 5s, 8s, 12s, 17s, 23s, 30s, 38s, 47s, 57s between attempts.
    const backoff = cfg.retryInitialBackoffMs
      + attempt * (cfg.retryBackoffLinearMs + attempt * cfg.retryBackoffQuadraticMs);
    await delay(backoff);
  }
}

// Every attempt failed (or a non-retryable error hit): emit an empty row with the reason.
if (!p || !p.title) {
  p = emptyProduct(h.url);
  p.rank = h.rank;
  if (lastError) {
    p.error = { kind: lastError.kind, message: lastError.message.slice(0, 200), attempts: attemptsUsed };
  }
}

products.push(p);

// Inter-product pause (configurable): breaks up back-to-back listing
// navigations so the session pattern doesn't look bot-shaped to DataDome.
if (i < hits.length - 1) await delay(cfg.interProductDelayMs);
```

A few details matter at scale. The session name includes the product index i, the attempt number and Date.now(), so fresh sessions can't collide across products or attempts. openBrowser is wrapped in its own try/catch, so a failed WSS handshake is categorised and retried instead of escaping the loop. eb.close() is wrapped in .catch(() => {}) because the session may already be dead by the time you close it. The backoff grows slowly enough that easy products finish fast, while hard ones still get the tens-of-seconds cool-down that DataDome tends to impose on flagged IPs. Finally, the inter-product pause spaces out navigations, which helps reduce the chance of correlated blocks.

The eight error kinds

Every failed product carries an error: { kind, message, attempts } object. The kind field tells downstream code how to react without parsing the free-form message:

| kind | Trigger | Retryable |
|---|---|---|
| blocked | HTTP 403 or 429 (DataDome or rate limit) | ✅ yes |
| not-found | HTTP 404 (listing deleted or never existed) | ❌ no (fail fast) |
| tls | ERR_SSL_* / ERR_CERT_* (transient proxy hiccup) | ✅ yes |
| network | ERR_TUNNEL / ERR_CONNECTION_* / ERR_ABORTED | ✅ yes |
| no-h1 | Page loaded but <h1> never appeared (soft challenge page) | ✅ yes |
| timeout | Navigation exceeded pageTimeoutMs | ✅ yes |
| open-browser | WSS handshake to Scrapeless failed | ✅ yes |
| unknown | Anything else | ✅ yes (default) |
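To illustrate how a taxonomy like this can be implemented, here is a pattern-matching sketch. categorizeErrorSketch is hypothetical, not the template's categorizeError. It assumes HTTP statuses appear in the error message as "HTTP 404" and that network failures carry Chromium's net::ERR_* codes:

```typescript
type ScrapeErrorKind =
  | "blocked" | "not-found" | "tls" | "network"
  | "no-h1" | "timeout" | "open-browser" | "unknown";

interface ScrapeErrorInfo {
  kind: ScrapeErrorKind;
  message: string;
  retryable: boolean;
}

// Ordered rules: the first pattern that matches the message wins, so the
// openBrowser wrapper is checked before anything its inner message contains.
const RULES: Array<[RegExp, ScrapeErrorKind, boolean]> = [
  [/openBrowser failed/, "open-browser", true],
  [/HTTP 404\b/, "not-found", false],
  [/HTTP (403|429)\b/, "blocked", true],
  [/ERR_SSL_|ERR_CERT_/, "tls", true],
  [/ERR_TUNNEL|ERR_CONNECTION_|ERR_ABORTED/, "network", true],
  [/no h1/i, "no-h1", true],
  [/timeout/i, "timeout", true],
];

function categorizeErrorSketch(err: unknown): ScrapeErrorInfo {
  const message = err instanceof Error ? err.message : String(err);
  for (const [pattern, kind, retryable] of RULES) {
    if (pattern.test(message)) return { kind, message, retryable };
  }
  return { kind: "unknown", message, retryable: true }; // retry by default
}

console.log(categorizeErrorSketch(new Error("HTTP 404 on https://www.etsy.com/listing/1226547395")).kind);
// prints "not-found"
```

Ordering the rules explicitly keeps the precedence visible: a wrapped "openBrowser failed: ... timeout" is reported as open-browser, not timeout.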

Every knob is tunable

All retry and pacing values live on ScraperInput — nothing is hard-coded. Adjust them when you need predictable throughput on a stricter plan or more aggressive retries on a harder target:

| CONFIG field | Default | Role |
|---|---|---|
| maxRetries | 10 | Total attempts per product before giving up |
| retryInitialBackoffMs | 3000 | Base of the growing-backoff formula |
| retryBackoffLinearMs | 1500 | Linear term |
| retryBackoffQuadraticMs | 500 | Quadratic term |
| interProductDelayMs | 3000 | Pause between consecutive product enrichments |
| pageTimeoutMs | 60000 | page.goto timeout |
| h1TimeoutMs | 15000 | waitForSelector("h1") timeout |
| postLoadDelayMs | 1500 | Delay after h1 appears, before extraction |
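The three backoff fields combine as initialBackoff + attempt × (linear + attempt × quadratic), the same expression the retry loop uses. A small sketch (backoffMs and backoffSchedule are illustrative names) prints the default schedule and its worst-case total, which helps when budgeting run time. It covers only the enrichment path; an openBrowser failure waits max(retryInitialBackoffMs, 3000) instead:

```typescript
interface BackoffConfig {
  retryInitialBackoffMs: number;
  retryBackoffLinearMs: number;
  retryBackoffQuadraticMs: number;
}

// Wait after a failed attempt: initial + attempt * (linear + attempt * quadratic).
function backoffMs(attempt: number, cfg: BackoffConfig): number {
  return cfg.retryInitialBackoffMs
    + attempt * (cfg.retryBackoffLinearMs + attempt * cfg.retryBackoffQuadraticMs);
}

// Waits that follow attempts 1..maxRetries-1 (there is no wait after the final attempt).
function backoffSchedule(maxRetries: number, cfg: BackoffConfig): number[] {
  const waits: number[] = [];
  for (let attempt = 1; attempt < maxRetries; attempt++) waits.push(backoffMs(attempt, cfg));
  return waits;
}

const defaults: BackoffConfig = { retryInitialBackoffMs: 3000, retryBackoffLinearMs: 1500, retryBackoffQuadraticMs: 500 };
const schedule = backoffSchedule(10, defaults);
const total = schedule.reduce((sum, ms) => sum + ms, 0);

console.log(schedule.map((ms) => `${ms / 1000}s`).join(", ")); // prints "5s, 8s, 12s, 17s, 23s, 30s, 38s, 47s, 57s"
console.log(`worst-case backoff per product: ${total / 1000}s`); // prints "worst-case backoff per product: 237s"
```

With the defaults, a product that burns all ten attempts spends roughly four minutes in backoff alone, before counting page loads and the inter-product pause.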

What You Get Back

One flat JSON object per product. Wide on purpose, so the same scraper feeds every downstream use case without a second pass.

The first result from a real "leather wallet" search run with this template:

```json
{
  "listingId": "547491922",
  "title": "Leather Wallet•Wallet•Man Leather Wallet•Minimalist Wallet•Personalize Wallet•Leather Anniversary•Slim Leather Wallet•Man Wallet",
  "url": "https://www.etsy.com/listing/547491922/leather-walletwalletman-leather",
  "rank": 1,
  "price": 5.52,
  "originalPrice": 68.99,
  "currency": "$",
  "discountPercent": 92,
  "inStock": false,
  "rating": 4.9,
  "reviewsCount": 929,
  "favoritesCount": 850,
  "isBestseller": false,
  "isFreeShipping": false,
  "isStarSeller": true,
  "tags": ["Bridesmaid gifts", "Groomsmen gifts", "Wedding gifts", "Engagement gifts"],
  "materials": [],
  "shop": {
    "name": "TexasValleyLeather",
    "url": "https://www.etsy.com/shop/TexasValleyLeather",
    "location": null,
    "totalSales": null,
    "openedYear": null,
    "reviewsCountShop": null
  },
  "images": [
    "https://i.etsystatic.com/15980284/r/il/2456a5/3164786673/il_fullxfull.3164786673_roeh.jpg",
    "... 4 more URLs"
  ],
  "variations": [
    { "name": "Personalization", "options": ["Yes, add engraving", "No, thanks"] },
    { "name": "Color Option", "options": ["Chestnut", "Black", "Tan"] }
  ],
  "breadcrumbs": ["Homepage", "Bags & Purses", "Wallets & Money Clips", "Wallets"],
  "relatedSearches": ["Wallets for Men Leather", "Sleek Mens Wallet", "Custom Slim Leather Bifold Wallet"],
  "listedDate": "Apr 15, 2026",
  "priceBucket": null,
  "reviews": [
    {
      "author": "Liz",
      "rating": 0,
      "text": "As described and shipped quickly. Thank you!",
      "date": "Apr 12, 2026",
      "itemQuality": null,
      "shipping": null,
      "customerService": null,
      "photos": []
    },
    "... 9 more reviews"
  ],
  "error": null,
  "scrapedAt": "2026-04-16T17:09:48.919Z"
}
```

When a product exhausts all retries (rare with the default maxRetries: 10; it mostly happens on genuinely stale URLs or during a DataDome IP-reputation storm), you get a row with title: null and a structured error object that tells downstream code why it failed:

```json
{
  "listingId": "1226547395",
  "title": null,
  "url": "https://www.etsy.com/listing/1226547395/invalid-stale-listing",
  "price": null,
  "rating": null,
  "images": [],
  "variations": [],
  "breadcrumbs": [],
  "relatedSearches": [],
  "listedDate": null,
  "shop": { "name": null, "url": null, "location": null, "totalSales": null, "openedYear": null, "reviewsCountShop": null },
  "reviews": [],
  "error": {
    "kind": "no-h1",
    "message": "no h1 on https://www.etsy.com/listing/1226547395/invalid-stale-listing — likely bot-challenge page",
    "attempts": 10
  },
  "scrapedAt": "2026-04-16T17:10:19.187Z"
}
```

A few honest observations about this real output, worth knowing before you run the scraper on your own niche:

- materials came back empty for this listing even though it's a leather wallet. Not every Etsy seller fills in the structured Materials field; many write materials into the description instead. The same goes for shop.location / shop.totalSales / shop.openedYear when those aren't rendered prominently on the page.
- reviews[].rating came back 0 here, and across the run a meaningful fraction of review cards came back with author: null or with the author name leaking into the date field (e.g. "eTylerApr 12, 2026"). Review-card parsing is the most fragile part of the schema on the current Etsy DOM, so treat reviews as a partial signal and filter for non-null authors and well-formed dates before analysis.
- reviews[].itemQuality / shipping / customerService came back null. Etsy only renders the per-criterion sub-ratings on a subset of listings, typically the ones with reviewer-uploaded photos. When they're rendered, the scraper picks them up; when they aren't, you get null.
- discountPercent: 92 is a real value Etsy was showing. 92% off a $68.99 wallet, down to $5.52, is the kind of artifact worth flagging in any pricing analysis.

This is what real Etsy data looks like. Plan downstream code for null fields and sparse sub-ratings; don't assume every record is fully populated.
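A minimal pre-analysis filter along those lines, assuming reviews use the field names from the output schema. usableReviews is a hypothetical helper, and treating a 0 rating as unparsed rests on Etsy's 1-to-5 star scale:

```typescript
// Subset of the review fields from the output schema.
interface Review {
  author: string | null;
  rating: number | null;
  text: string;
  date: string | null;
}

// Accepts "Apr 12, 2026"; rejects "eTylerApr 12, 2026" (author leaked into the date).
const WELL_FORMED_DATE = /^[A-Z][a-z]{2} \d{1,2}, \d{4}$/;

function usableReviews(reviews: Review[]): Review[] {
  return reviews
    // Keep only cards with a real author and a cleanly parsed date.
    .filter((r) => r.author !== null && r.author.trim() !== "" && r.date !== null && WELL_FORMED_DATE.test(r.date))
    // Etsy stars run 1 to 5, so any other value (e.g. 0) is treated as "not parsed".
    .map((r) => ({ ...r, rating: r.rating !== null && r.rating >= 1 && r.rating <= 5 ? r.rating : null }));
}

const sample: Review[] = [
  { author: "Liz", rating: 0, text: "As described and shipped quickly. Thank you!", date: "Apr 12, 2026" },
  { author: null, rating: 5, text: "Great", date: "eTylerApr 12, 2026" },
  { author: "Sam", rating: 4, text: "Nice", date: "Apr 10, 2026" },
];

console.log(usableReviews(sample).length); // prints 2
```

Mapping a 0 rating to null, rather than dropping the review, keeps the text available for sentiment work while excluding the bogus score from averages.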

Conclusion: Scale Your Etsy Intelligence

Building a robust Etsy scraper in 2026 takes more than code; it takes a cloud-native strategy. With Scrapeless handling fingerprinting, residential proxies and CAPTCHA solving, you can get past anti-bot defenses and extract high-fidelity data at scale.

Ready to start?

Sign up for Scrapeless and join our Discord community today to claim a free trial of up to 100 hours of browser runtime and stay updated on the latest in AI agent and web automation.

Discord

Telegram

FAQ

Is scraping Etsy legal?

Scraping publicly available data for price monitoring and research is generally legal, provided you respect Etsy's Terms of Use and avoid scraping personal user data. The pacing controls in this template (interProductDelayMs and the growing retry backoff) also keep request volume modest, so your scraping stays respectful of Etsy's server resources.

How does Scrapeless handle Etsy's DataDome protection?

Unlike standard proxies, Scrapeless manages the entire browser fingerprint and TLS handshake. Your sessions look like ordinary user traffic, so most requests clear DataDome's bot detection without any manual stealth configuration, and the retry loop from Step 8 absorbs the occasional 403 that still gets through.

Q1: Is a proxy required to scrape Etsy?

Yes. Without a residential proxy, DataDome flags datacenter traffic quickly — the fingerprint and IP-reputation combination typically scores into the reject bucket and direct-navigation requests to /listing/ pages return HTTP 403 with a JavaScript challenge page. Scrapeless Scraping Browser ships with residential proxies built in — every session routes through a different residential IP in the chosen country, verified in testing by consecutive fresh sessions returning distinct outbound IPs (api.ipify.org probe).

Q2: How do I watch what the scraper did in a past run?

Every session in this template sets sessionRecording: "true" on the WSS URL, so Scrapeless saves a full video-style replay of every page the cloud browser touched — scroll position, DOM state and network activity. Find replays at app.scrapeless.com → Scraping Browser → Sessions, and match by the sessionName value the scraper logs per attempt (e.g. etsy-enrich-3-2-1713198231047).
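If you assemble the endpoint yourself, the recording flag is just another query parameter. This sketch appends it with the standard URL API. The base WSS URL is a placeholder, and passing sessionName as a query parameter is an assumption, so check the Scrapeless docs for the exact connection parameters. withRecording is a hypothetical helper:

```typescript
// Hypothetical helper: append a per-attempt session name and the recording flag
// to whatever WSS endpoint your Scrapeless account uses (base URL is a placeholder).
function withRecording(baseWss: string, sessionName: string): string {
  const url = new URL(baseWss);
  url.searchParams.set("sessionName", sessionName); // assumption: accepted as a query parameter
  url.searchParams.set("sessionRecording", "true");
  return url.toString();
}

const wss = withRecording("wss://browser.example.invalid/connect?token=YOUR_TOKEN", "etsy-enrich-3-2-1713198231047");
console.log(wss);
// prints "wss://browser.example.invalid/connect?token=YOUR_TOKEN&sessionName=etsy-enrich-3-2-1713198231047&sessionRecording=true"
```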

If the dashboard shows "Replay Unavailable — Please enable 'Web Recording' to view the session recordings", flip the Web Recording toggle on your Scrapeless account settings page. It's free on every plan; it's just off by default. Once enabled, all future sessions record automatically — past sessions that ran while recording was off cannot be recovered retroactively.

Replays are the single fastest way to debug why one row came back with title: null. Open the session, scrub the timeline to the moment page.goto fired, and you'll see whether the server returned a real listing, a DataDome challenge or a stale-URL redirect.

Q3: Why do reviews sometimes load via internal endpoints instead of the page?

Newer Etsy listings load some review batches via internal POST requests after the page has rendered. The scraper handles this by scrolling into the reviews region and waiting — by the time the parser runs, the cards are in the DOM. For products with thousands of reviews, you'll get the first ~30 (or whatever you set maxReviews to). Going deeper requires intercepting the GraphQL endpoint directly, which is out of scope here.

Q4: What about region and currency redirects?

Etsy redirects by IP to localised versions (etsy.de from a German IP, etsy.fr from a French one). Prices and currency strings differ per region. The scraper's extractNumber helper handles both 1,234.56 (en-US) and 1.234,56 (de-DE) formats. If you want consistent USD pricing across runs, pin proxyCountry: "US".
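The template's extractNumber isn't shown here, so this is a hypothetical stand-in (parseLocalizedNumber) that illustrates one way to handle both formats. It treats the last "." or "," as the decimal mark when one or two digits follow it, and every other separator as thousands grouping. That heuristic would misread currencies that use three decimal places:

```typescript
// Hypothetical stand-in for extractNumber: parse "1,234.56", "1.234,56 €", "$5.52", "1,234".
function parseLocalizedNumber(raw: string): number | null {
  const cleaned = raw.replace(/[^\d.,]/g, ""); // drop currency symbols, spaces, letters
  if (!/\d/.test(cleaned)) return null;
  const lastSep = Math.max(cleaned.lastIndexOf("."), cleaned.lastIndexOf(","));
  const digitsAfter = lastSep >= 0 ? cleaned.length - lastSep - 1 : 0;
  let normalized: string;
  if (lastSep >= 0 && digitsAfter > 0 && digitsAfter <= 2) {
    // The last separator is the decimal mark; earlier "." or "," group thousands.
    normalized = cleaned.slice(0, lastSep).replace(/[.,]/g, "") + "." + cleaned.slice(lastSep + 1);
  } else {
    // No decimal part (e.g. "1,234" or "1.234"): every separator is grouping.
    normalized = cleaned.replace(/[.,]/g, "");
  }
  const n = Number(normalized);
  return Number.isFinite(n) ? n : null;
}

console.log(["1,234.56", "1.234,56 €", "$5.52", "1,234"].map((s) => parseLocalizedNumber(s)).join(", "));
// prints "1234.56, 1234.56, 5.52, 1234"
```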

Q5: How do I filter by price, on-sale, free-shipping, or condition?

Set any combination of the eight filters.* keys. They compose with searchQuery and categoryUrl modes and are encoded directly onto Etsy's URL:

```ts
const CONFIG: ScraperInput = {
  categoryUrl: "https://www.etsy.com/c/bags-and-purses/wallets-and-money-clips/wallets",
  filters: {
    onSale: true,          // → &is_on_sale=1
    freeShipping: true,    // → &free_shipping=1
    customizable: true,    // → &is_personalizable=1
    shipsTo: "US",         // → &ships_to=US (ISO country code)
    minPrice: 20,          // → &min=20
    maxPrice: 60,          // → &max=60
    condition: "vintage",  // "new" | "vintage" (→ &explicit=vintage)
    orderBy: "price_asc",  // "most_relevant" | "date_desc" | "price_asc" | "price_desc" | "highest_reviews"
  },
  // ...
};
```

One caveat worth knowing: filters.minPrice / filters.maxPrice on a /search?q=... URL is DataDome-sensitive (filtered search URLs get 403'd more aggressively than unfiltered ones), so expandStrategy: "prices" runs a single broad search and tags each result client-side with priceBucket instead. Same user intent, no URL-filter block. On categoryUrl, the price filter works normally.
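To see how the filter keys turn into a URL, here is a sketch using the standard URL API and the parameter names listed in the config comments above. applyFilters is hypothetical, and orderBy and condition: "new" are left out because their URL parameters aren't given here:

```typescript
// Subset of the filters block; orderBy is omitted (its URL parameter isn't documented above).
interface Filters {
  onSale?: boolean;
  freeShipping?: boolean;
  customizable?: boolean;
  shipsTo?: string;
  minPrice?: number;
  maxPrice?: number;
  condition?: "new" | "vintage";
}

// Append each active filter to an existing search or category URL.
function applyFilters(baseUrl: string, f: Filters): string {
  const url = new URL(baseUrl);
  const p = url.searchParams;
  if (f.onSale) p.set("is_on_sale", "1");
  if (f.freeShipping) p.set("free_shipping", "1");
  if (f.customizable) p.set("is_personalizable", "1");
  if (f.shipsTo) p.set("ships_to", f.shipsTo);
  if (f.minPrice !== undefined) p.set("min", String(f.minPrice));
  if (f.maxPrice !== undefined) p.set("max", String(f.maxPrice));
  if (f.condition === "vintage") p.set("explicit", "vintage"); // "new" has no parameter here
  return url.toString();
}

const filtered = applyFilters(
  "https://www.etsy.com/c/bags-and-purses/wallets-and-money-clips/wallets",
  { onSale: true, freeShipping: true, minPrice: 20, maxPrice: 60 },
);
console.log(filtered);
// prints "https://www.etsy.com/c/bags-and-purses/wallets-and-money-clips/wallets?is_on_sale=1&free_shipping=1&min=20&max=60"
```

Going through URLSearchParams rather than string concatenation preserves any query the base URL already carries (such as q=) and handles encoding.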

Q6: Can I tune retries, timeouts and pacing?

Yes. Every retry and pacing value is a CONFIG field on ScraperInput:

| Field | Default | Role |
|---|---|---|
| maxRetries | 10 | Total attempts per product before giving up |
| retryInitialBackoffMs | 3000 | Base of the growing-backoff formula |
| retryBackoffLinearMs | 1500 | Linear term |
| retryBackoffQuadraticMs | 500 | Quadratic term (yields the 5 s → 57 s progression) |
| interProductDelayMs | 3000 | Pause between consecutive product enrichments |
| pageTimeoutMs | 60000 | page.goto timeout |
| h1TimeoutMs | 15000 | waitForSelector("h1") timeout |
| postLoadDelayMs | 1500 | Delay after h1 appears, before extraction |

Stricter Scrapeless plans benefit from lower interProductDelayMs and lower maxRetries; harder anti-bot targets benefit from higher values on both.

Q7: What failure categories can I expect?

Every product that exhausts retries carries a structured error: { kind, message, attempts } field. Eight categorised kinds:

- blocked: HTTP 403/429 from DataDome or rate limiting (retryable)
- not-found: HTTP 404, stale or deleted listing (non-retryable, fails fast)
- tls: ERR_SSL_* / ERR_CERT_* proxy TLS hiccup (retryable)
- network: ERR_TUNNEL / ERR_CONNECTION_* / ERR_ABORTED (retryable)
- no-h1: page loaded but <h1> never appeared, likely a soft DataDome challenge page (retryable)
- timeout: navigation timeout exceeded (retryable)
- open-browser: WSS handshake to Scrapeless failed (retryable)
- unknown: anything else (retryable by default)

Downstream code can treat kind: "not-found" as "drop this URL, don't ever re-queue it" and kind: "blocked" as "try this one again in the next hour when DataDome's IP reputation window resets".
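That policy fits in a small function. A sketch, assuming rows carry the error shape described above; decide is a hypothetical name, and re-queueing every other kind immediately is an assumption rather than something the template prescribes:

```typescript
type ScrapeErrorKind =
  | "blocked" | "not-found" | "tls" | "network"
  | "no-h1" | "timeout" | "open-browser" | "unknown";

interface ScrapedRow {
  url: string;
  error: { kind: ScrapeErrorKind; message: string; attempts: number } | null;
}

type Decision =
  | { action: "keep" }                      // scraped fine, nothing to do
  | { action: "drop" }                      // never re-queue this URL
  | { action: "requeue"; notBefore: Date }; // try again at or after notBefore

const HOUR_MS = 60 * 60 * 1000;

function decide(row: ScrapedRow, now: Date): Decision {
  if (row.error === null) return { action: "keep" };
  switch (row.error.kind) {
    case "not-found":
      return { action: "drop" };
    case "blocked":
      // Give DataDome's IP-reputation window about an hour to reset.
      return { action: "requeue", notBefore: new Date(now.getTime() + HOUR_MS) };
    default:
      // Assumption: other transient kinds can go straight back on the queue.
      return { action: "requeue", notBefore: now };
  }
}

const now = new Date(Date.UTC(2026, 3, 16, 17, 10, 0));
console.log(decide({ url: "https://www.etsy.com/listing/1", error: { kind: "not-found", message: "HTTP 404", attempts: 1 } }, now).action);
// prints "drop"
```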

At Scrapeless, we only access publicly available data while strictly complying with applicable laws, regulations, and website privacy policies. The content in this blog is for demonstration purposes only and does not involve any illegal or infringing activities. We make no guarantees and disclaim all liability for the use of information from this blog or third-party links. Before engaging in any scraping activities, consult your legal advisor and review the target website's terms of service or obtain the necessary permissions.