Error 403 in Web Scraping: 10 Easy Solutions

Advanced Data Extraction Specialist

📌 Key Takeaways

- A 403 error during web scraping is usually caused by anti-bot measures such as IP bans, missing headers, and geo-restrictions.

- Technical fixes include adding realistic headers, managing sessions, rotating proxies, and throttling requests.

- Advanced tools like Scrapeless automate these defenses, saving time and reducing failure rates.

- Combining several of these solutions keeps scraping sustainable and reduces interruptions from 403 errors.

When scraping data from the web, nothing is more frustrating than being stopped by an HTTP 403 Forbidden response. A 403 means the server understood your request but refuses to fulfill it.

Unlike a 404 (page not found), a 403 suggests the website is actively blocking you, often because it suspects automated activity. In this guide, we’ll cover 10 practical solutions for overcoming this challenge, including advanced techniques and the use of modern tools like Scrapeless.

Why Does the 403 Web Scraping Error Happen?

A server typically returns a 403 to a scraper when it concludes that:

- You are a bot rather than a human visitor.

- Your IP or region is blacklisted.

- Requests are malformed (missing headers, no cookies, wrong session tokens).

- The request frequency is suspicious (too many hits in a short time).

Understanding these triggers is the first step to fixing the issue.

10 In-Depth Solutions to Fix Error 403 in Web Scraping

1. Set a Realistic User-Agent String

Why it matters:

Many scrapers send requests with the default User-Agent of libraries like Python’s requests or urllib. Servers easily detect these signatures and block them, resulting in 403 errors.

How to fix:

- Use a real browser User-Agent (e.g., Chrome, Firefox).

- Rotate different User-Agents to avoid fingerprinting.

```python
import requests

url = "https://example.com"  # placeholder target URL

# Send a real browser User-Agent instead of the library default
headers = {
    "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/116.0 Safari/537.36"
}

response = requests.get(url, headers=headers)
```

Pro Tip: Pair User-Agent with other headers like Accept-Language and Referer to look more human.
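Rotating User-Agents, as suggested in the list above, could look roughly like the following. This is a minimal sketch: the `USER_AGENTS` pool and the `build_headers` helper are illustrative names, and the strings in the pool are examples that should be replaced with current values copied from real browsers.

```python
import random

# Illustrative pool (an assumption); copy up-to-date strings from real browsers.
USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/116.0 Safari/537.36",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/605.1.15 (KHTML, like Gecko) Version/16.5 Safari/605.1.15",
    "Mozilla/5.0 (X11; Linux x86_64; rv:117.0) Gecko/20100101 Firefox/117.0",
]


def build_headers():
    """Return a header dict with a randomly chosen User-Agent."""
    return {
        "User-Agent": random.choice(USER_AGENTS),
        "Accept-Language": "en-US,en;q=0.9",
    }


headers = build_headers()
# Pass the result to your HTTP client, e.g. requests.get(url, headers=headers)
```

Calling `build_headers()` once per request (or once per session) gives each one a User-Agent drawn from the pool.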

2. Add Complete HTTP Headers

Why it matters:

Websites don’t just check User-Agent; they also look for missing headers. If your request looks “too clean,” the site flags it as a bot, leading to a 403 web scraping block.

How to fix:

- Add Accept, Accept-Language, Referer, and Connection.

- Send cookies when necessary.

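```python
# Headers a real browser typically sends alongside User-Agent
headers = {
    "User-Agent": "...",
    "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8",
    "Accept-Language": "en-US,en;q=0.9",
    "Referer": "https://google.com",
    "Connection": "keep-alive"
}
```

Note: Use tools like Chrome DevTools to inspect real browser requests and replicate them.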

3. Respect robots.txt and Crawl Rate

Why it matters:

If your scraper floods a site with hundreds of requests per second, anti-bot systems like Cloudflare or Akamai will trigger a 403 web scraping denial.

How to fix:

- Implement delays between requests (1–3 seconds).

- Randomize pauses to mimic natural browsing.

- Follow crawl-delay rules in robots.txt.

Risk: Too many rapid-fire requests may even get your IP permanently banned.
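One way to honor robots.txt is Python’s standard `urllib.robotparser` module. The sketch below parses an inlined sample robots.txt so that it runs offline; the sample rules, the `my-scraper` agent name, and the `load_rules` and `next_delay` helpers are assumptions made for illustration.

```python
import random
from urllib.robotparser import RobotFileParser

# Sample robots.txt content, inlined so the sketch runs without network access.
ROBOTS_TXT = """\
User-agent: *
Crawl-delay: 5
Disallow: /private/
"""


def load_rules(robots_text):
    """Parse robots.txt text into a RobotFileParser."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return parser


def next_delay(parser, user_agent, low=1.0, high=3.0):
    """Random pause in [low, high], raised to the site's Crawl-delay if that is larger."""
    crawl_delay = parser.crawl_delay(user_agent) or 0
    return max(random.uniform(low, high), float(crawl_delay))


rules = load_rules(ROBOTS_TXT)
print(rules.can_fetch("my-scraper", "https://example.com/private/page"))
print(next_delay(rules, "my-scraper"))
```

Against a live site you would typically call `set_url(...)` and `read()` on the parser instead of passing text, then sleep for `next_delay(...)` seconds between requests.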

4. Use Proxies and IP Rotation

Why it matters:

A common cause of 403 errors is IP blocking. Websites maintain blacklists of suspicious addresses, especially if they notice too many requests from one source.

How to fix:

- Use residential or mobile proxies (harder to detect than datacenter ones).

- Rotate IPs regularly.

- Integrate proxy pools with scraping libraries.

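```python
# Replace the placeholders with your proxy provider's credentials and endpoint
proxies = {
    "http": "http://username:password@proxy-server:port",
    "https": "http://username:password@proxy-server:port"
}
# Pass to requests, e.g. requests.get(url, proxies=proxies)
```

Note: Residential proxies are pricier but far more reliable for bypassing 403 web scraping issues.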
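Rotating IPs can be as simple as cycling through a pool and building a fresh proxies dict for each request. The following is a minimal sketch: the proxy hostnames, ports, and credentials in `PROXY_POOL` are hypothetical, and `next_proxies` is an illustrative helper name.

```python
from itertools import cycle

# Hypothetical proxy endpoints (assumptions); substitute your provider's values.
PROXY_POOL = [
    "http://username:password@proxy-1.example.com:8000",
    "http://username:password@proxy-2.example.com:8000",
    "http://username:password@proxy-3.example.com:8000",
]

_rotation = cycle(PROXY_POOL)


def next_proxies():
    """Return a requests-style proxies dict using the next proxy in the pool."""
    proxy = next(_rotation)
    return {"http": proxy, "https": proxy}

# Usage with requests: requests.get(url, proxies=next_proxies())
```

Round-robin rotation is the simplest policy; many proxy providers also offer a single gateway endpoint that rotates IPs on their side.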

5. Maintain Sessions and Cookies

Why it matters:

Many websites require session cookies for authenticated or persistent browsing. Without cookies, requests may be flagged as invalid and blocked with a 403 web scraping error.

How to fix:

- Store cookies after login and reuse them.

- Use a session object to keep state.

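```python
import requests

# A Session stores cookies from earlier responses and sends them on later requests
session = requests.Session()
session.get("https://example.com/login")
response = session.get("https://example.com/protected")
```

Note: Some sites use rotating CSRF tokens; make sure to refresh them.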
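Refreshing a CSRF token usually means re-reading it from the page before each form submission. The sketch below pulls the token out of a hidden input using the standard `html.parser` module; the field name `csrf_token` and the sample HTML are assumptions, since every site names and places its token differently.

```python
from html.parser import HTMLParser


class CsrfTokenParser(HTMLParser):
    """Collects the value of an <input> whose name matches field_name."""

    def __init__(self, field_name="csrf_token"):
        super().__init__()
        self.field_name = field_name
        self.token = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "input" and attributes.get("name") == self.field_name:
            self.token = attributes.get("value")


def extract_csrf_token(html, field_name="csrf_token"):
    """Return the token value found in html, or None if the field is absent."""
    parser = CsrfTokenParser(field_name)
    parser.feed(html)
    return parser.token


sample = '<form><input type="hidden" name="csrf_token" value="abc123"></form>'
print(extract_csrf_token(sample))  # abc123
```

In a real flow you would fetch the form page with the same session, extract the token, and include it in the next POST.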

6. Switch to Headless Browsers

Why it matters:

Basic libraries (like requests) don’t execute JavaScript, so they can’t handle JavaScript-heavy sites. These sites often return 403 errors because your requests look incomplete.

How to fix:

- Use Playwright, Puppeteer, or Selenium.

- Render JavaScript pages just like a human browser.

- Extract cookies and headers automatically.

```python
from playwright.sync_api import sync_playwright

with sync_playwright() as p:
    browser = p.chromium.launch(headless=True)
    page = browser.new_page()
    page.goto("https://example.com")
    html = page.content()  # HTML after JavaScript has run
    browser.close()
```

7. Throttle Requests (Human-Like Behavior)

Why it matters:

If your scraper clicks through hundreds of pages in seconds, it’s obvious you’re a bot. Sites respond with 403 web scraping errors.

How to fix:

- Add random delays (2–10 seconds).

- Scroll pages, wait for AJAX calls.

- Simulate mouse/keyboard events in headless browsers.
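The random delays from the list above can be wrapped around any fetch function. This is a minimal sketch, not a complete crawler: `crawl_with_pauses` is an illustrative name, and `fetch` stands in for whatever function downloads a page in your scraper.

```python
import random
import time


def crawl_with_pauses(urls, fetch, low=2.0, high=10.0, sleep=time.sleep):
    """Call fetch(url) for each URL, sleeping a random interval between calls."""
    results = []
    for index, url in enumerate(urls):
        if index > 0:
            # Pause a different amount each time so the timing is not uniform.
            sleep(random.uniform(low, high))
        results.append(fetch(url))
    return results


# Demo with a stand-in fetch function; sleep is stubbed out so it returns
# immediately. Omit the sleep argument to pause for real with time.sleep.
pages = crawl_with_pauses(
    ["https://example.com/a", "https://example.com/b"],
    fetch=lambda url: f"<html>{url}</html>",
    sleep=lambda seconds: None,
)
print(len(pages))  # 2
```

Passing `sleep` as a parameter keeps the loop easy to test without waiting.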

8. Handle Geo-Restrictions

Why it matters:

Some websites allow access only from specific countries. Requests from other regions may return a 403 web scraping denial.

How to fix:

- Use geo-specific proxies (e.g., US, EU, Asia).

- Choose proxy providers that offer city-level targeting.

Example:

If a news site only serves EU visitors, you must use an EU residential proxy to avoid the 403 web scraping block.

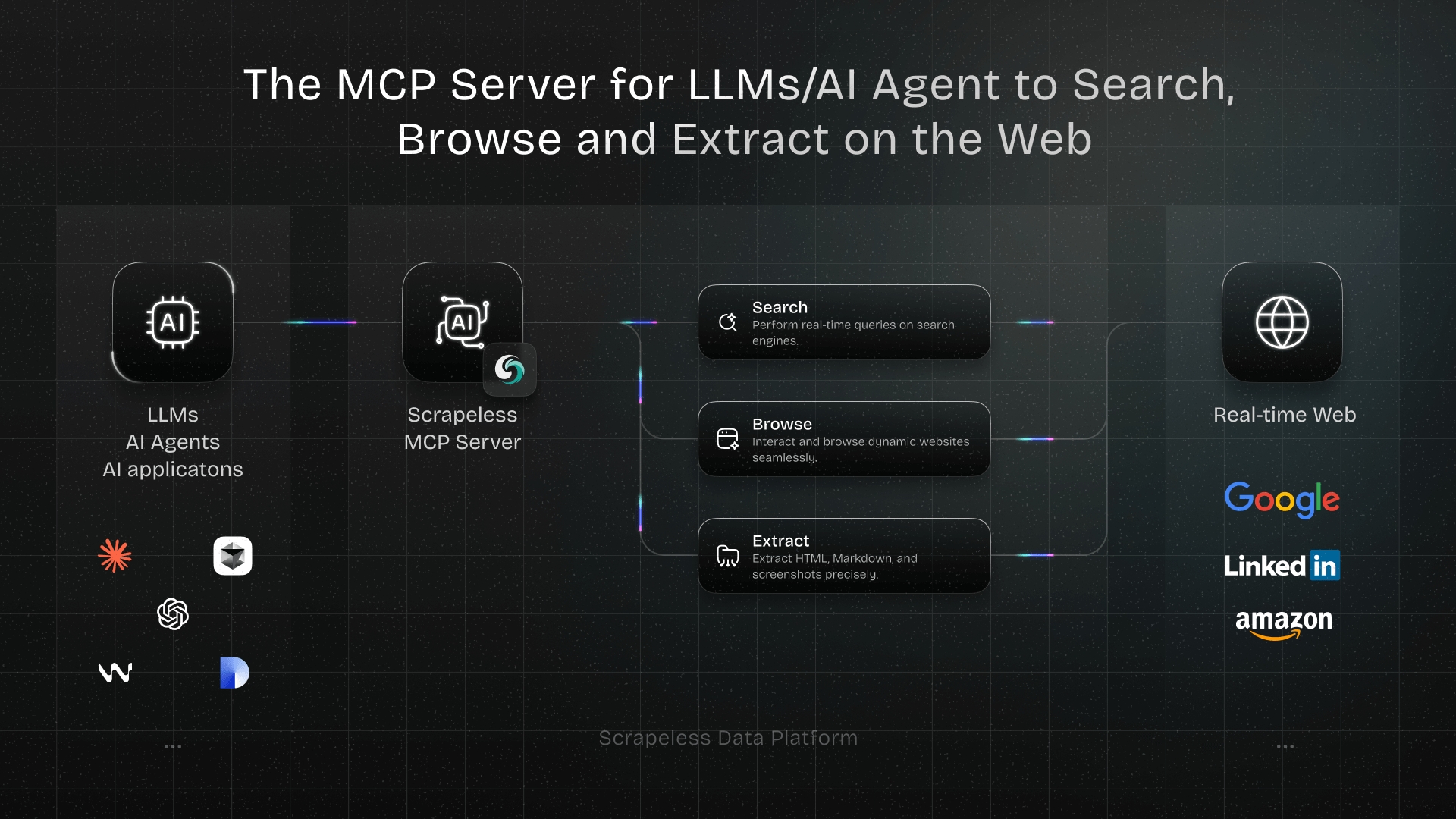

9. Use Scrapeless (Highly Recommended)

Why it matters:

Configuring proxies, headers, sessions, and browser automation manually is complex and error-prone. Scrapeless is an advanced scraping platform that automates these tasks and prevents 403 web scraping blocks out of the box.

Advantages of Scrapeless:

- Automatic IP rotation (residential + mobile)

- Smart header and cookie management

- Handles JavaScript rendering

- Built-in anti-detection algorithms

Why choose Scrapeless?

Instead of spending hours tweaking your scraper to work around 403 blocks, Scrapeless manages the process, allowing you to focus on extracting and analyzing data.

10. Monitor & Adapt Continuously

Why it matters:

Anti-bot systems evolve constantly. What works today might fail tomorrow, leading to fresh 403 web scraping errors.

How to fix:

- Track error rates in logs.

- Rotate strategies (proxies, headers, sessions).

- Use machine learning to adapt scraping patterns dynamically.

Pro Tip: Combining Scrapeless with manual fallback methods ensures long-term scraping resilience.
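Tracking error rates does not need heavy tooling to start with. The sketch below keeps a sliding window of recent status codes and flags when the share of 403 responses crosses a threshold; the class name `BlockRateTracker`, the window size, and the 20% threshold are all assumptions to tune for your own workload.

```python
from collections import deque


class BlockRateTracker:
    """Tracks the share of 403 responses among the most recent requests."""

    def __init__(self, window=100, threshold=0.2):
        self.statuses = deque(maxlen=window)  # oldest entries drop off automatically
        self.threshold = threshold

    def record(self, status_code):
        self.statuses.append(status_code)

    def block_rate(self):
        if not self.statuses:
            return 0.0
        blocked = sum(1 for status in self.statuses if status == 403)
        return blocked / len(self.statuses)

    def should_rotate(self):
        """True when the recent 403 rate exceeds the threshold."""
        return self.block_rate() > self.threshold


tracker = BlockRateTracker(window=10)
for status in [200, 200, 403, 200, 403, 403, 200, 200, 200, 200]:
    tracker.record(status)
print(tracker.should_rotate())  # True (3 of the last 10 responses were 403)
```

When `should_rotate()` turns true, the scraper can switch proxies, headers, or sessions, as the list above suggests.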

Final Thoughts

Encountering 403 web scraping errors is frustrating, but it doesn’t mean scraping is impossible. By understanding the triggers and applying the 10 solutions above, you can make your scraper more resilient and reliable.

For developers who want a shortcut, Scrapeless offers an all-in-one solution to avoid 403 web scraping headaches and keep your projects running smoothly.

At Scrapeless, we only access publicly available data while strictly complying with applicable laws, regulations, and website privacy policies. The content in this blog is for demonstration purposes only and does not involve any illegal or infringing activities. We make no guarantees and disclaim all liability for the use of information from this blog or third-party links. Before engaging in any scraping activities, consult your legal advisor and review the target website's terms of service or obtain the necessary permissions.